1 SATRAP TRP-SATRAP

Test result reports for the SATRAP CTI analysis platform.

1.1 TCER: modelling TRP-001

This test case execution result (TCER) reports the outcome of verifying modelling artifacts.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify linked requirements

- 0 = flawless: The data model of SATRAP-DL uses a data modelling language based on type theory, namely TypeQL.

- 0 = flawless: SATRAP-DL relies on a database paradigm that allows for knowledge representation based on semantics and on the PERA model implemented by TypeDB.

- 0 = flawless: SATRAP-DL supports querying the CTI SKB based on semantic criteria.

- 0 = flawless: The data model of the CTI SKB is extensible and allows for the integration of new information.

- 0 = flawless: The data model of the CTI SKB relies on a type-theoretic polymorphic entity-relation-attribute (PERA) data model to allow for the addition of new entities and relationships without requiring a schema migration.

Test case step 2: Check for alignment between system concept and implemented system

- 0 = flawless: alignment confirmed upon reviewing design artifacts and comparing these against the implementation.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

- N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-001 Verify data modelling artifacts

| Attribute | Value |

|---|---|

| test-date | 2025-03-25 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 2 |

| release-version | 0.1 |

| verification-method | R |

1.2 TCER: SW engineering TRP-002

This test case execution result (TCER) reports the outcome of the verification of naming convention usage and adherence to the SOLID software engineering principles.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify consistent naming convention use

- 1 = insignificant defect: based on a sample of the source files, most functions, classes and variables follow the PEP-8 naming convention consistently. Nevertheless, we did identify one problematic instance in the `log_utils.py` module; see the comments section below for more details.

Test case step 2: Verify adherence to SOLID

- 0 = flawless: the 5 SOLID design principles are largely respected by the architectural modules.

Defect summary description

An insignificant defect was detected during test execution; the overall verdict is therefore the highest defect category among the test step verdicts: 1 = insignificant defect

Please see the comments below for a few relevant observations.

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

The function `testing(self, ...)` in `satrap/commons/log_utils.py` is defined at the module level, yet its signature includes a `self` parameter. By convention, `self` is used only in instance methods within class definitions, where it refers to the instance of the class. See the linked file for the exact reference.

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

SOLID principles: Single responsibility principle (SRP), Open-closed principle (OCP), Liskov substitution principle (LSP), Interface segregation principle (ISP), Dependency inversion principle (DIP).

satrap/commons/log_utils.py (line 69)

Parent links: TST-002 Verify software engineering practices

| Attribute | Value |

|---|---|

| test-date | 2025-03-25 |

| tester | AAT |

| defect-category | 1 = insignificant defect |

| passed-steps | 2 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R |

1.3 TCER: STIX and reasoning TRP-003

This test case execution result (TCER) reports the outcome of STIX and reasoning engine usage verification.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify DBMS technology

- 0 = flawless: SATRAP-DL uses a DBMS technology that comes with a reasoning engine as a key integral part, namely TypeDB.

Test case step 2: Verify use of STIX 2.1

- 0 = flawless: SATRAP-DL uses STIX 2.1 as the default standard format for CTI representation.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-003 Verify STIX and reasoning engine

| Attribute | Value |

|---|---|

| test-date | 2025-03-25 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 2 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R,I |

1.4 TCER: data model TRP-004

We analyze the SATRAP data model to verify adherence to that of STIX 2.1.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify data model alignment with STIX 2.1

- 1 = insignificant defect: support for ingesting STIX 2.1 is implemented, providing a direct mapping of the imported data to equivalent concepts in the TypeDB database; however, custom and metadata objects are currently missing.

Defect summary description

Assigned defect category: 1 = insignificant defect

STIX 2.1 support is currently incomplete (custom properties and meta objects are not yet handled), but the coverage provided is sufficient for the alpha release.

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-004 Verify STIX 2.1-based data model

| Attribute | Value |

|---|---|

| test-date | 2025-03-25 |

| tester | AAT |

| defect-category | 1 = insignificant defect |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | A |

1.5 TCER: centralized management TRP-005

We report on our inspection to verify centralized management of system parameters customization via a dedicated configuration file, and of log storage, exception types and error messages.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

SATRAP-DL enables customization of system parameters via a YAML file located at satrap/assets/satrap_params.yml, capturing logging mode, TypeDB database parameters (host, port, db name) and ETL default source files/paths.

Test case step 1: Verify centralized system parameterization

- 0 = flawless: The user-controlled YAML file `satrap_params.yml` captures the logging mode.

Test case step 2: Verify centralized parameterization for database connections

- 0 = flawless: The user-controlled YAML file `satrap_params.yml` captures the TypeDB database parameters (host, port, db name).

Test case step 3: Verify centralized parameterization file for managing file paths

- 0 = flawless: The `settings.py` module encapsulates paths to various resources used throughout the code.

Test case step 4: Verify designated logs storage location

- 0 = flawless: SATRAP-DL stores its logs under `satrap/assets/logs`, with log files organized in subfolders named by date, which in turn contain timestamped log files whose names capture the log-producing module.
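The layout described in this step can be sketched as a small path-building helper. The root folder is taken from the report, whereas the function name `build_log_path`, the date and timestamp formats, and the file extension are assumptions made purely for illustration; the actual SATRAP naming scheme may differ.

```python
from datetime import datetime
from pathlib import Path

LOGS_ROOT = Path("satrap/assets/logs")


def build_log_path(module_name: str, when: datetime) -> Path:
    """Return <root>/<date>/<timestamp>_<module>.log (assumed naming scheme)."""
    date_folder = when.strftime("%Y-%m-%d")      # one subfolder per day
    timestamp = when.strftime("%Y%m%dT%H%M%S")   # one file per execution
    return LOGS_ROOT / date_folder / f"{timestamp}_{module_name}.log"


print(build_log_path("etlorchestrator", datetime(2025, 3, 26, 14, 30, 0)).as_posix())
```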

Test case step 5: Verify centralized exception definitions

- 0 = flawless: Exceptions are defined in a centralized manner and stored in `satrap/commons/exceptions.py`.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

- satrap/assets/satrap_params.yml (line 3)

- satrap/settings.py

- satrap/commons/exceptions.py

Parent links: TST-005 Verify centralized management

| Attribute | Value |

|---|---|

| test-date | 2025-03-26 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 5 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | I |

1.6 TCER: code clarity TRP-006

We report on our code inspections to verify source code clarity according to the C5-DEC method and guidelines.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify source code clarity according to the C5-DEC method and guidelines

- 0 = flawless: The majority of the SATRAP-DL code base exhibits a high degree of consistency in terms of understandability, readability and documentation.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-006 Verify code clarity

| Attribute | Value |

|---|---|

| test-date | 2025-03-26 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | I |

1.7 TCER: secure programming TRP-007

This test case execution report addresses the validation test case dealing with secure programming aspects and practices.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify components input validation

- 2 = minor defect: while most SATRAP components receiving input perform some form of validation, we have identified a few discrepancies such as those in the ETL `extractor` module. The `fetch` functions in `Downloader` and `STIXExtractor` do not validate the source URLs or file paths against a reference pattern or against any deny or allow list. They do, however, perform syntactic checks directly and indirectly via the `validate_file_access` function in the `file_utils` module.
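As a hedged illustration of the kind of check reported as missing, the sketch below validates a source URL against an allow list of schemes and hosts before any download is attempted. The function `is_allowed_source` and the sets `ALLOWED_SCHEMES` and `ALLOWED_HOSTS` are hypothetical; they are not part of the SATRAP code base, and the host shown is only an example value.

```python
from urllib.parse import urlparse

# Hypothetical allow lists; a real deployment would load them from configuration.
ALLOWED_SCHEMES = {"https"}
ALLOWED_HOSTS = {"raw.githubusercontent.com"}


def is_allowed_source(url: str) -> bool:
    """Return True only if the URL uses an allowed scheme and host."""
    parsed = urlparse(url)
    return parsed.scheme in ALLOWED_SCHEMES and parsed.hostname in ALLOWED_HOSTS


print(is_allowed_source("https://raw.githubusercontent.com/example/data.json"))
print(is_allowed_source("http://example.org/data.json"))
```

Such a check would complement, not replace, the syntactic validation already performed; a comparable allow list of base directories could be applied to local file paths.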

Test case step 2: Verify sanitization of input and output of data passing across trust boundaries

- 0 = flawless: since integration with TIPs or other external sources residing outside the SATRAP trust boundary is not implemented in the alpha release (it is a requirement for the beta release), this sanitization requirement is considered to be satisfied.

Test case step 3: Verify resource liberation

- 0 = flawless: the ETL subsystem and various functions performing I/O operations correctly release resources, e.g., database network connections and file streams handled via `with` context managers.
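The resource-release pattern referred to in this step can be sketched with standard-library context managers only. The file name and content are illustrative assumptions; the example does not reproduce SATRAP code or its database connections.

```python
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp_dir:
    sample = Path(tmp_dir) / "sample.json"
    sample.write_text('{"type": "bundle"}', encoding="utf-8")

    # The file stream is closed automatically when the block exits,
    # even if an exception is raised while reading.
    with open(sample, encoding="utf-8") as stream:
        content = stream.read()

    stream_closed = stream.closed

print(content)
print(stream_closed)
```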

Test case step 4: Verify SBOM usage

- 0 = flawless: thanks to its use of Poetry, all software dependencies of SATRAP are listed precisely in an inventory providing a software bill of materials (SBOM), which lists all used libraries and their respective versions, along with the corresponding hashes (automatically generated lock file).

Test case step 5: Verify log string sanitization

- 2 = minor defect: log strings are currently not sanitized and validated before being logged, which is needed to prevent log injection attacks.

Log injection vulnerabilities can emerge when unvalidated user input is written to log files, allowing an attacker to forge log entries or inject malicious content into the logs. Two conditions are involved: the data enters the application from an untrusted source (N/A in the alpha release of SATRAP), and the data is written to an application or system log file (applicable to the alpha release).

A note on log forging (source: OWASP)

In the most benign case, an attacker may be able to insert false entries into the log file by providing the application with input that includes appropriate characters. If the log file is processed automatically, the attacker can render the file unusable by corrupting the format of the file or injecting unexpected characters. A more subtle attack might involve skewing the log file statistics. Forged or otherwise corrupted log files can be used to cover an attacker’s tracks or even to implicate another party in the commission of a malicious act.
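One common mitigation is to neutralize line breaks before a value reaches the logger, so that a single value cannot masquerade as several entries. The following is a minimal sketch under that assumption; `sanitize_for_log` and the logger name are hypothetical and not part of SATRAP, and a production version would likely also handle other control characters.

```python
import logging


def sanitize_for_log(value: str) -> str:
    """Escape CR and LF so one value cannot span several log entries."""
    return value.replace("\r", "\\r").replace("\n", "\\n")


logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
logger = logging.getLogger("sanitization_demo")

# Attacker-style input that tries to forge a second log entry.
untrusted = "report.json\nINFO Loading finished successfully"
logger.info("Extracting from %s", sanitize_for_log(untrusted))
```

With the escaping in place, the forged text stays on the same line as the genuine entry and is visibly marked by the literal `\n` sequence.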

Test case step 6: Verify secret storage

- 0 = flawless: Manual and automated scans (SAST) confirm the absence of logged or hardcoded sensitive information in the source code such as passwords or entity identifiers.

Test case step 7: Verify data semantic integrity enforcement

- 0 = flawless: the TypeDB engine, together with the SATRAP data model and TypeQL types (`cti-skb-types.tql`), enforces semantic integrity, ensuring that relationships and constraints adhere to the intended meaning. This enables measures such as data validation with respect to schemas and relationship constraints, the technical possibility of automated checks for data redundancy, and inference powered by a reasoning engine.

Defect summary description

Various minor issues have been identified, thus assigning the overall highest defect category from the test step verdicts: 2 = minor defect

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

- satrap/etl/extract/extractor.py (line 57)

- satrap/etl/extract/extractor.py (line 111)

- satrap/commons/file_utils.py (line 33)

- satrap/commons/file_utils.py (line 78)

- satrap/assets/schema/cti-skb-types.tql

Parent links: TST-007 Verify secure programming

| Attribute | Value |

|---|---|

| test-date | 2025-03-26 |

| tester | AAT |

| defect-category | 2 = minor defect |

| passed-steps | 5 |

| failed-steps | 2 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | I,R |

1.8 TCER: MITRE ATT&CK ingestion TRP-008

This test case execution report covers the validation test case specification on the ingestion of the MITRE ATT&CK data set.

Relevant test environment and configuration details

- Software deviations: aligned with test case specification

- Hardware deviations: aligned with test case specification

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify the execution of the SATRAP setup CLI command

- 0 = flawless: the obtained result aligns with the expected outcome described in the linked validation test case specification.

Test case step 2: Verify the execution of the SATRAP etl CLI command

- 0 = flawless: the obtained result aligns with the expected outcome described in the linked validation test case specification.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-008 Test setup + MITRE ATT&CK ingestion

| Attribute | Value |

|---|---|

| test-date | 2025-03-27 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 2 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | T |

1.9 TCER: ETL architecture TRP-009

This test case execution report covers the ETL subsystem; see the linked files for precise references to the cited code modules and classes mentioned below.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify existence of an ETL subsystem

- 0 = flawless: The architecture and logic view diagrams depict the ETL component. Moreover, the artifacts linked to SRS-008 show dedicated conceptual and software diagrams concerning the design of the ETL subsystem. The ETL subsystem is implemented in the `satrap/etl` package.

Test case step 2: Verify ETL orchestration component

- 0 = flawless: As part of its dedicated ETL subsystem, SATRAP provides the orchestration module `etlorchestrator.py` for managing the Extract, Transform, and Load process.

Test case step 3: Verify extractor

- 0 = flawless: the extraction components supporting the ingestion of datasets in STIX 2.1 are implemented in the `satrap/etl/extract` package. The core logic is implemented in the `extractor.py` module.

Test case step 4: Verify the module for transforming data into STIX 2.1

- 0 = flawless: As part of its dedicated ETL subsystem, SATRAP provides a module (`transformer.py`) in charge of transforming the ingested STIX data into the representation language of the CTI SKB schema, namely TypeQL.

Test case step 5: Verify component for insertion into the CTI SKB

- 0 = flawless: the components supporting the loading of ingested content into the SATRAP CTI SKB, powered by TypeDB, are implemented in the `satrap/etl/load` package (`loader.py`).

Test case step 6: Verify data integration module for database operations and connections

- 0 = flawless: SATRAP provides a dedicated data management package containing various related modules, with one in particular (`typedbmanager.py`) in charge of managing database operations and connections.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

- satrap/etl/etlorchestrator.py (line 12)

- satrap/etl/extract/extractor.py

- satrap/etl/transform/transformer.py

- satrap/etl/load/loader.py

- satrap/datamanagement/typedb/typedbmanager.py

Parent links: TST-009 Verify ETL architecture

| Attribute | Value |

|---|---|

| test-date | 2025-03-27 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 3 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R |

1.10 TCER: CTI SKB inference TRP-010

This test case execution report covers the SATRAP automated reasoning and inference capabilities; see the linked files for precise references to the cited files.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify that SATRAP-DL implements inference rules for automated reasoning

- 0 = flawless: The SATRAP implementation provides a dedicated rules file (`cti-skb-rules.tql`). By analyzing the CTI SKB inference rule definition file and the CTI SKB type definition files stored in `satrap/assets/schema`, we confirm the presence of the required artifacts enabling derivation of knowledge over existing relations in the CTI SKB powered by TypeDB.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

satrap/assets/schema/cti-skb-rules.tql

Parent links: TST-010 Verify CTI SKB inference rules

| Attribute | Value |

|---|---|

| test-date | 2025-03-27 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | A |

1.11 TCER: Jupyter Notebook frontend TRP-011

Relevant test environment and configuration details

- Software deviations: aligned with test case specification

- Hardware deviations: aligned with test case specification

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Open the source code of SATRAP-DL in Microsoft Visual Studio Code using the Dev Containers extension

- 0 = flawless: The project loaded successfully, with a bash session being the entry point selected by default, giving access to the SATRAP GNU/Linux container.

Test case step 2: Navigate to the Visual Studio Code terminal and activate the Python environment

- 0 = flawless: The SATRAP container was successfully accessed, and the commands `poetry shell` and `poetry install` also ran successfully.

Test case step 3: Navigate to the folder satrap/frontend/quick_start.ipynb and activate kernel

- 0 = flawless: Jupyter Notebook located successfully and kernel activated.

Test case step 4: Run each cell from top to bottom in order

- 0 = flawless: All cells executed, and their outputs were successfully compared to the expected output reference file `quick_start-test-reference.ipynb`.

Part 1 on "Starting with simple functions":

- 0 = flawless: `satrap = CTIanalysisToolbox(TYPEDB_SERVER_ADDRESS, DB_NAME)`

- 0 = flawless: `print(satrap.get_sdo_stats())`

- 0 = flawless: `print(satrap.mitre_attack_techniques())`

- 0 = flawless: `print(satrap.mitre_attack_mitigations())`

- 0 = flawless: cell "Get information on a specific MITRE ATT&CK element (technique, group, software, etc.) using its MITRE ATT&CK id."

- 0 = flawless: cell "Get information about a STIX object using its STIX id."

- 0 = flawless: cell "Retrieve mitigations explicitly associated to a specific technique using its STIX id."

Part 2 on "CTI analysis through automated reasoning":

- 0 = flawless: cell "Get statistics on the usage of ATT&CK techniques by groups. The output of this function is the same as when running the command `satrap techniques` on the CLI."

- 0 = flawless: cell `display(satrap.techniques_usage(infer=True))`

- 0 = flawless: cell `techniques = satrap.techniques_used_by_group("G0025", infer=True)`

- 0 = flawless: cell `display(satrap.related_mitigations(group_name="BlackTech"))`

Subsection "Explanation of inferred knowledge"

- 0 = flawless: 1st cell starting with `rel_explanation = satrap.explain_if_related_mitigation("G0098", "course-of-action--20a2baeb-98c2-4901-bad7-dc62d0a03dea")`.

- 0 = flawless: 2nd cell starting with `reason = satrap.explain_related_techniques("ZIRCONIUM", "T1059.006")`.

- 0 = flawless: last cell starting with `dg_explanation = satrap.explain_techniques_used_by_group("G0071", "Domain Groups")`.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

The function name used in the call `t1 = satrap.get_attck_concept_info("T1027.001")` contains a typo: attck -> attack

Functions are named inconsistently: there is a mix of verb-based (preferred as per C5-DEC conventions) and noun-based naming, e.g.,

- `satrap.get_sdo_stats()`

- `satrap.mitre_attack_techniques()`

- `satrap.mitigations_for_technique()`

- `satrap.get_attck_concept_info()`

- `satrap.search_stix_object()`

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

docs/specs/TST/assets/quick_start-test-reference.ipynb

Parent links: TST-011 Test Jupyter notebook frontend

| Attribute | Value |

|---|---|

| test-date | 2025-03-27 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 4 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | T |

1.12 TCER: ETL logging TRP-012

We ran tests to validate the logging feature of the ETL subsystem.

Relevant test environment and configuration details

- Software deviations: aligned with test case specification

- Hardware deviations: aligned with test case specification

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify ETL logging by running ./satrap.sh etl to ingest the latest version of the MITRE ATT&CK Enterprise data set

0 = flawless: The generated log, stored in the satrap/assets/logs folder (under date-named subfolders, in files timestamped according to the ETL execution time) and provided as test-evidence-log.txt, was checked and the following items were validated:

- a log entry is generated at the beginning of the job, indicating the start time

- each log entry recording an event comes with a log level: DEBUG, INFO, WARNING, ERROR, CRITICAL

- there is at least a log entry for each ETL phase (i.e., extraction, transformation and loading) describing the status in terms of success/failure and some minimal hint or additional information explaining or complementing the execution status.
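The checks listed above could also be automated along the following lines. This is a minimal sketch: the sample log lines, their layout and the phase keywords are assumptions made for illustration and are not taken from test-evidence-log.txt, whose actual format may differ.

```python
import re

LOG_LEVELS = ("DEBUG", "INFO", "WARNING", "ERROR", "CRITICAL")
ETL_PHASES = ("extraction", "transformation", "loading")

# Hypothetical sample; the real log layout may differ.
sample_log = """\
2025-03-29 10:00:00 INFO ETL job started
2025-03-29 10:00:05 INFO extraction finished: success
2025-03-29 10:00:09 INFO transformation finished: success
2025-03-29 10:00:20 INFO loading finished: success
"""

level_pattern = re.compile(r"\b(" + "|".join(LOG_LEVELS) + r")\b")
entries = sample_log.splitlines()

# Every entry must carry one of the known log levels.
all_have_level = all(level_pattern.search(entry) is not None for entry in entries)

# Every ETL phase must be mentioned by at least one entry.
phases_covered = {
    phase: any(phase in entry.lower() for entry in entries) for phase in ETL_PHASES
}

print(all_have_level)
print(phases_covered)
```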

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

docs/specs/TRP/assets/test-evidence-log.txt

Parent links: TST-012 Test ETL logging

| Attribute | Value |

|---|---|

| test-date | 2025-03-29 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | T |

1.13 TCER: CM settings TRP-013

We report on our inspection of the centralized settings file to verify that some of its content is read from a user-controlled configuration management file, allowing configuration management without requiring a software rebuild.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Navigate to the settings.py

- 0 = flawless: The file `settings.py` was located at `satrap/settings.py`.

Test case step 2: Navigate to the YAML configuration management

- 0 = flawless: The configuration management file `satrap_params.yml` exposed to the user was found at `satrap/assets/satrap_params.yml`.

Test case step 3: Check that the YAML file is read into memory (e.g., in a Python dictionary).

- 0 = flawless: this was validated in the `settings.py` file, which calls `read_yaml(SATRAP_PARAMS_FILE_PATH)`; see the linked files section for the precise, automatically retrieved line number.

Test case step 4: Check that at least one settings parameter read into memory

- 0 = flawless: parameters in `settings.py` are populated from the in-memory copy of the `satrap_params.yml` file, with an example given below:

HOST = satrap_params_dict.get('typedb').get('host','typedb')
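The lookup pattern in the line above can be sketched in isolation as follows. The dictionary stands in for the parsed YAML content, and the helper `read_typedb_host` is hypothetical rather than taken from `settings.py`. One variation is introduced deliberately: an empty dictionary is substituted when the `typedb` section is absent or empty, so that the chained `.get` does not fail on `None`.

```python
def read_typedb_host(params: dict) -> str:
    """Return the TypeDB host, falling back to 'typedb' when it is not set."""
    # "or {}" covers both a missing section and a section parsed as None.
    typedb_section = params.get("typedb") or {}
    return typedb_section.get("host", "typedb")


# Stand-in for the dictionary obtained by parsing satrap_params.yml;
# the values are illustrative assumptions.
satrap_params_dict = {"typedb": {"host": "localhost"}}

HOST = read_typedb_host(satrap_params_dict)
print(HOST)
```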

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

satrap/settings.py (line 34)

Parent links: TST-013 Inspect settings for CM

| Attribute | Value |

|---|---|

| test-date | 2025-03-28 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 4 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | I |

1.14 TCER: SATRAP CLI TRP-014

We report on our tests carried out using the SATRAP command line interface (CLI) to verify that it provides at least the commands specified in the software requirement specification (SRS) that the linked test case specification traces to.

Relevant test environment and configuration details

- Software deviations: aligned with test case specification

- Hardware deviations: aligned with test case specification

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Run ./satrap.sh rules

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Test case step 2: Run ./satrap.sh stats

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Test case step 3: Run ./satrap.sh techniques

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Test case step 4: Run ./satrap.sh mitigations

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Test case step 5: Run ./satrap.sh search campaign--0c259854-4044-4f6c-ac49-118d484b3e3b

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Test case step 6: Run ./satrap.sh info_mitre T1027.001

- 0 = flawless: obtained result consistent with the expected outcome specified in the linked test case.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-014 Test command line interface (CLI)

| Attribute | Value |

|---|---|

| test-date | 2025-03-28 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 6 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | T |

1.15 TCER: Open-source TIP adoption TRP-015

We reviewed the SATRAP design artifacts to validate its adoption of open-source TIPs by design, specifically MISP and OpenCTI.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify SATRAP adoption of open-source TIPs

- 0 = flawless: we confirmed upon reviewing the SATRAP system concept documents that it adopts both MISP and OpenCTI as TIPs.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-017 Verify open-source TIP integration

| Attribute | Value |

|---|---|

| test-date | 2025-03-28 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R |

1.16 TCER: release + licensing TRP-016

We report on our inspection of the SATRAP release and licensing model.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify public open-source release of SATRAP source code

- 0 = flawless: The entire source code of SATRAP was confirmed to be released on GitHub via a public repository, as per project agreements.

Test case step 2: Verify licenses of 3rd-party libraries

- 0 = flawless: SATRAP software library dependencies do not restrict the privileges granted by the license selected for SATRAP-DL.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-018 Verify release and licensing

| Attribute | Value |

|---|---|

| test-date | 2025-03-28 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 2 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | I |

1.17 TCER: SATRAP architecture TRP-017

This test case execution report verifies the existence of CTI analysis components in the architecture of SATRAP. See the linked files for precise references to the cited code modules and classes.

Relevant test environment and configuration details

- Software deviations: N/A

- Hardware deviations: N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify that the system includes a dedicated module or component encapsulating the analytic functions provided by SATRAP.

- 0 = flawless: The CTI analysis engine is depicted in the system architecture designs. Moreover, the implementation contains a `satrap/engine` package which includes the class `CTIEngine` (in `cti_engine.py`); this class encapsulates the core analytic functionality directly interacting with the database manager. Query statements and result structures are part of this package too.

Test case step 2: Verify that the system includes a dedicated module or component aimed at providing the end-user functionality to be serviced by the system.

- 0 = flawless: The architectural diagrams contain a service component providing analytical functions. This design is satisfied in the implementation by the `satrap/service` package, in particular via the `CTIanalysisToolbox` class in the `satrap_analysis.py` module.

Test case step 3: Verify that the frontend is designed to interact with the service layer of SATRAP.

- 0 = flawless: the `quick_start.ipynb` Jupyter notebook included in the `satrap/frontend` package shows an example of how to use the `CTIanalysisToolbox` service component in this frontend interface.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

- satrap/engine/cti_engine.py (line 16)

- satrap/service/satrap_analysis.py (line 13)

- satrap/frontend/quick_start.ipynb

Parent links: TST-019 Verify layered architecture of SATRAP

| Attribute | Value |

|---|---|

| test-date | 2025-09-03 |

| tester | IVS |

| defect-category | 0 = flawless |

| passed-steps | 3 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R,I |

1.18 TCER: Semantic data integrity TRP-018

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Verify that the system enforces semantic integrity of data

- 0 = flawless: According to the system concept documentation of SATRAP-DL, in particular the document `2B1C_REP_CyFORT-SATRAP-DL-DetailedStudy-CTI-SKB_v1.0`, this requirement is covered by consistency and correctness checks performed automatically by TypeDB. These native validations include schema validation at creation time and data validation w.r.t. a database schema when inserting data into a database.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

N/A

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-020 Verify enforcement of semantic data integrity

| Attribute | Value |

|---|---|

| test-date | 2025-09-03 |

| tester | IVS |

| defect-category | 0 = flawless |

| passed-steps | 1 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.1 |

| verification-method | R,A |

2 DECIPHER TRP-DECIPHER

Test result reports for DECIPHER (Detection, Enrichment, Correlation, Incident, Playbook, Handling, Escalation and Recovery).

2.1 Test containerized deployment TRP-019

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

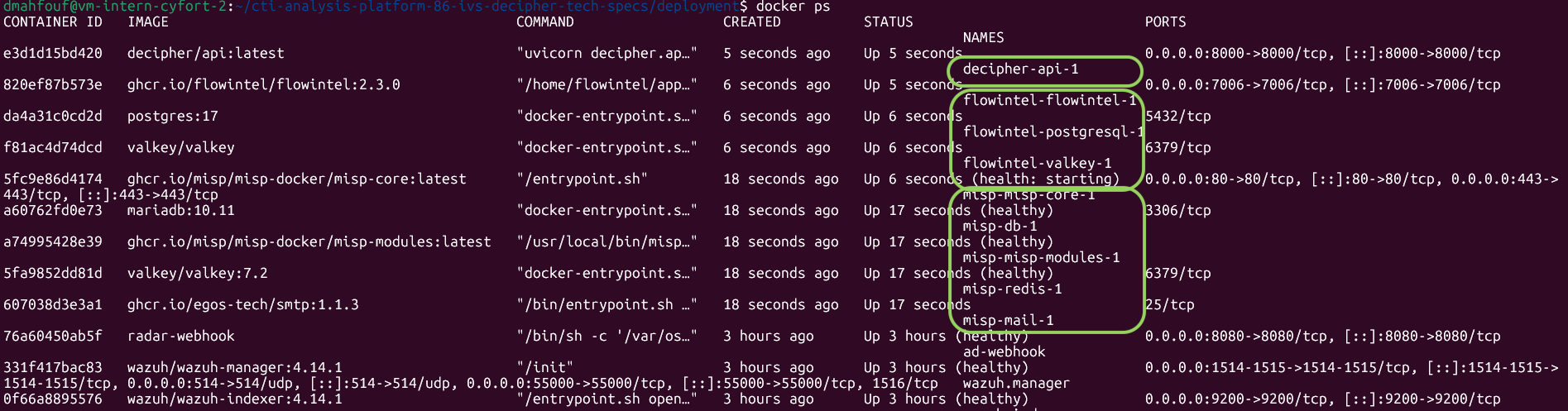

AC1, AC4: Full-stack startup

TC step 1: Verify that the deployment directory is available from the SATRAP-DL project root folder.

- 0 = flawless: The directory is available and accessible.

TC steps 2-5: Copy the env-template file to .env, configure the environment variables, and run ./decipher_up.sh --all; then verify running containers with docker ps.

- 0 = flawless: All containers (misp-misp-core-1, flowintel-flowintel-1, decipher-api-1) are running and healthy. The `.env` file was correctly configured to map the services.

TC step 6: Run docker volume ls and verify that there are three volumes prefixed with flowintel and two volumes prefixed with misp.

- 0 = flawless: `docker volume ls` confirms the presence of three volumes prefixed with `flowintel` and two with `misp`.
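
The volume count in TC step 6 can also be checked programmatically. The sketch below is an illustration under stated assumptions: the function name `count_volumes_by_prefix` and the sample volume names are hypothetical, and the input is assumed to be one volume name per line, as printed by `docker volume ls -q`.

```python
from typing import Dict, Iterable, Sequence


def count_volumes_by_prefix(
    volume_names: Iterable[str], prefixes: Sequence[str]
) -> Dict[str, int]:
    """Count how many volume names start with each of the given prefixes."""
    counts = {prefix: 0 for prefix in prefixes}
    for name in volume_names:
        for prefix in prefixes:
            if name.startswith(prefix):
                counts[prefix] += 1
                break  # a name is attributed to the first matching prefix only
    return counts


# Hypothetical names; the real ones depend on the compose project definitions.
sample_names = [
    "flowintel_db",
    "flowintel_cache",
    "flowintel_data",
    "misp_mysql",
    "misp_files",
]
volume_counts = count_volumes_by_prefix(sample_names, ["flowintel", "misp"])
```

With the sample names above, `volume_counts` matches the expectation of the test step: three `flowintel` volumes and two `misp` volumes.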

TC step 7: Open a web browser and verify web services accessibility (MISP, Flowintel, DECIPHER API).

- 0 = flawless: MISP login page, Flowintel, and DECIPHER API are reachable at their respective ports (80, 7006, 8000).

AC2: Teardown with volume purge

TC steps 1-3: Run ./decipher_down.sh --all --purge, then verify that all DECIPHER stack containers and volumes are removed.

- 0 = flawless: After running `./decipher_down.sh --all --purge`, `docker ps -a` and `docker volume ls` confirm that all containers and named volumes have been successfully removed.

AC3: Selective startup

TC steps 1-3: Run ./decipher_up.sh --api, execute the health-check curl request, and verify that the DECIPHER API response matches the expected output.

- 0 = flawless: The API started correctly and responds as:

{"status":"ok","service":"decipher-api","analyzers_loaded":1,"available_types":["suspicious_login"],"logging_level":"INFO"}

TC step 4: Run docker ps -a and verify that no MISP or Flowintel containers are present.

- 0 = flawless: `docker ps` confirms that only decipher-api-1 is running; no MISP or Flowintel containers were created during the selective `--api` startup.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-021 Test containerized deployment

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 7 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | T |

2.2 Test DECIPHER REST service and API TRP-020

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Test case step 1: Send a POST request to the analysis endpoint with valid suspicious_login data

- 0 = flawless: The API returned a valid JSON response including analyzed_scenario, severity, report, and created_case. The scoring engine successfully processed the two source IPs provided.

{"analyzed_scenario":"suspicious_login","severity":0.0,"report":{"log_summary":[],"misp_available":"True","misp_events_found":[],"score_breakdown":[]},"created_case":{"id":70,"link":"http://192.168.0.32:7006/case/70"}}

Test case step 2: Send a POST request to the incidents endpoint with a valid score

- 0 = flawless: The outcome contains a non-zero `ID` followed by the link of the case created in Flowintel.

{"id":71,"link":"http://192.168.0.32:7006/case/71"}

Test case step 3: Send a GET request to the analyzers endpoint

- 0 = flawless: The response correctly listed the registered alert types, including suspicious_login, along with their input schemas.

{"suspicious_login":{"description":"Analyzer for suspicious login attempts.","schema":{"description":"Schema for alerts related to suspicious login threat scenarios.\n\nAttributes:\n username: The username used in the login attempt.\n target_host: IP of the login target.\n src_ips: List of IP addresses, origin of the login attempt.\n timestamp: ISO 8601 timestamp of the alert.","properties":{"username":{"title":"Username","type":"string"},"target_host":{"title":"Target Host","type":"string"},"src_ips":{"items":{"type":"string"},"title":"Src Ips","type":"array"},"timestamp":{"title":"Timestamp","type":"string"}},"required":["username","target_host","src_ips","timestamp"],"title":"SuspiciousLoginAlert","type":"object"}}}
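
The schema above lists four required fields for a `suspicious_login` alert. As a minimal sketch of a client-side pre-check, the helper below reports which of them are absent from a payload before it is sent. The function name `missing_required_fields` and the sample values are hypothetical; the actual validation is performed server-side by the DECIPHER API and is not reproduced here.

```python
from typing import Any, Dict, List

# Required fields as listed in the "required" array of the schema above.
REQUIRED_FIELDS = ("username", "target_host", "src_ips", "timestamp")


def missing_required_fields(alert: Dict[str, Any]) -> List[str]:
    """Return the required field names that are absent from the alert."""
    return [field for field in REQUIRED_FIELDS if field not in alert]


# Hypothetical alert using documentation-only IP addresses.
complete_alert = {
    "username": "alice",
    "target_host": "192.0.2.10",
    "src_ips": ["198.51.100.7", "203.0.113.5"],
    "timestamp": "2026-03-20T10:15:00Z",
}
incomplete_alert = {"username": "alice"}

complete_missing = missing_required_fields(complete_alert)
incomplete_missing = missing_required_fields(incomplete_alert)
```

An empty list means that the payload carries every required key; it says nothing about whether the values have the expected types.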

Test case step 4: Send a GET request to the health endpoint

- 0 = flawless: The response is a JSON body containing "status": "ok", analyzers_loaded (integer >= 1), and logging_level.

{"status":"ok","service":"decipher-api","analyzers_loaded":1,"available_types":["suspicious_login"],"logging_level":"INFO"}
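
The three conditions named in step 4 can be expressed as a small check on the decoded response. This is a sketch only: the function name `health_problems` is hypothetical, and the payload is the one recorded above.

```python
import json
from typing import Any, Dict, List


def health_problems(payload: Dict[str, Any]) -> List[str]:
    """Return a list of deviations from the expected health response."""
    problems: List[str] = []
    if payload.get("status") != "ok":
        problems.append("status is not 'ok'")
    loaded = payload.get("analyzers_loaded")
    # bool is a subclass of int in Python, so it is excluded explicitly.
    if not isinstance(loaded, int) or isinstance(loaded, bool) or loaded < 1:
        problems.append("analyzers_loaded is not an integer >= 1")
    if "logging_level" not in payload:
        problems.append("logging_level is missing")
    return problems


# Response body recorded in this report for the health endpoint.
observed = json.loads(
    '{"status":"ok","service":"decipher-api","analyzers_loaded":1,'
    '"available_types":["suspicious_login"],"logging_level":"INFO"}'
)
observed_problems = health_problems(observed)
```

For the recorded response, `observed_problems` is empty.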

Test case step 5: Send a GET request to the home endpoint (root /)

- 0 = flawless: The response is a JSON body containing the service version and documentation references (docs, redoc).

{"service":"DECIPHER Analysis API","version":"v0.1","docs":"/docs","redoc":"/redoc","health":"/health","analyzers":"/api/v0.1/analyzers"}

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

For the setup step (configure the MISP and Flowintel instances in decipher-settings.yaml and start the API), the service was successfully started using ./decipher_up.sh --api.

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-022 Test DECIPHER REST service and API

| Attribute | Value |

|---|---|

| test-date | 2026-03-20 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 5 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.5 |

| verification-method | T |

2.3 Test analysis endpoint core behavior TRP-021

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

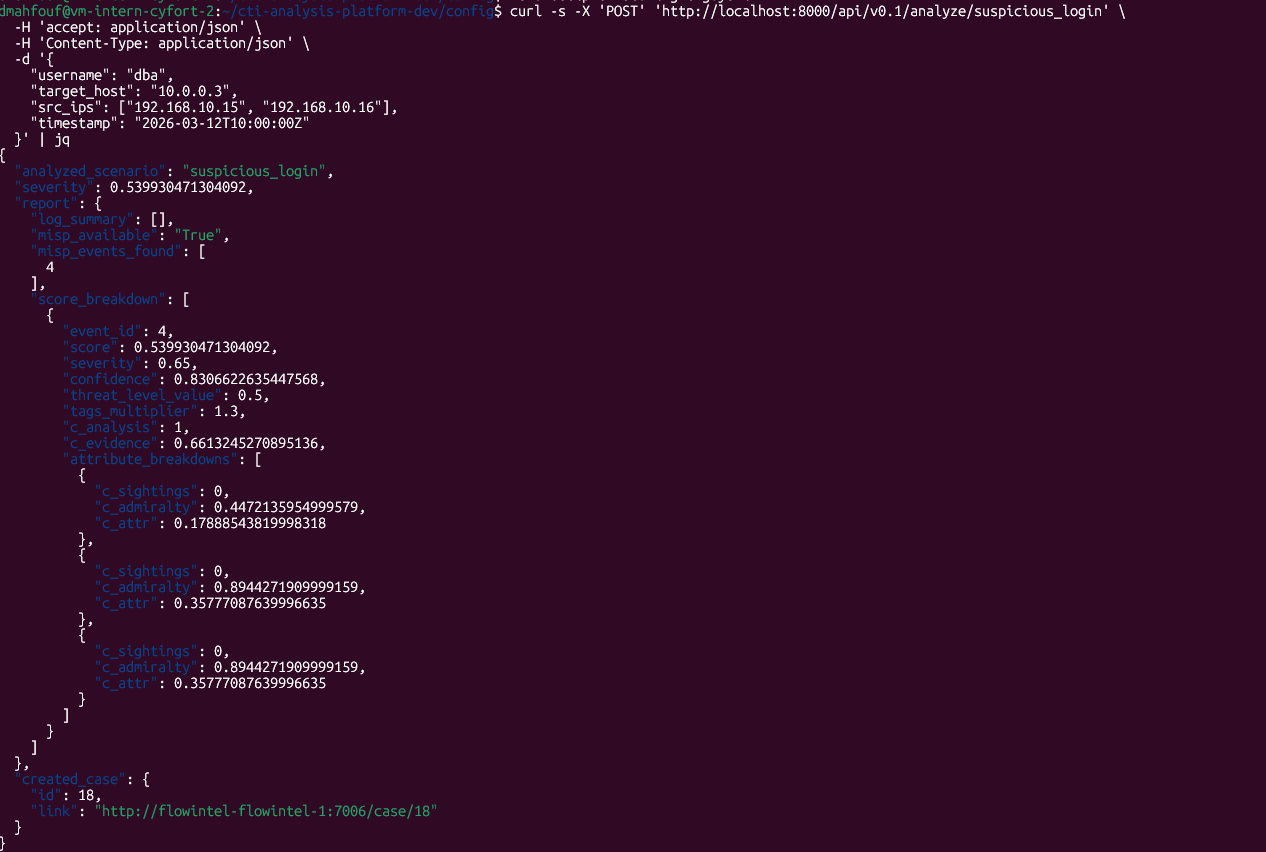

AC1: Successful analysis with valid data

TC step 1: Send a POST request to the suspicious_login analysis endpoint with valid alert data and verify the response.

- 0 = flawless: The output contains the string `HTTP 200` and a JSON response with the fields `analyzed_scenario`, `severity`, `report`, and `created_case`.

AC2: Analysis endpoint with invalid data

TC step 1: Send a POST request to the analysis endpoint with structurally invalid data (missing required fields) and verify the response.

- 0 = flawless: The output contains the string `HTTP 422` and the response body details the validation error regarding missing or invalid fields.

AC3: Analysis endpoint with unregistered alert type

TC step 1: Send a POST request to the analysis endpoint with an unregistered alert type and verify the response.

- 0 = flawless: The output contains the string `HTTP 404` and a message informing about the unknown alert type.

AC4: Internal analysis failure

TC steps 1-4: Corrupt config/decipher-runtime-cfg.yaml with invalid YAML content, send a POST request with valid alert data, and verify that the response contains HTTP 500; then restore the file.

- 0 = flawless: After corrupting the configuration file, the API successfully returned an `HTTP 500` error as expected.

AC5: Case creation enabled

TC steps 1-2: Set enable_case_creation: true in config/decipher-runtime-cfg.yaml and send a POST request with valid alert data.

- 0 = flawless: Request completed successfully.

TC steps 3-4: Verify that created_case.id is non-zero and the case exists in Flowintel with all required description sections.

- 0 = flawless: The field `created_case.id` is non-zero (Case ID 14) and the case exists in Flowintel. The case description contains all required sections (Scenario, Alert information, Severity score, Score breakdown, Additional information).

AC6: Case creation disabled

TC steps 1-5: Ensure enable_case_creation: false, send a valid POST request, then verify the HTTP 200 status, that created_case.id is 0, and that log_summary indicates case creation is disabled.

- 0 = flawless: The response status is `HTTP 200`, `created_case.id` is 0, and the `log_summary` correctly indicates that case creation is disabled.

AC7: MISP search disabled

TC steps 1-4: Set enable_misp_search: false, send a valid POST request, then verify the HTTP 200 status and that log_summary indicates MISP search is disabled.

- 0 = flawless: The response status is `HTTP 200` and the `log_summary` correctly indicates that MISP IOC search is disabled in the configuration.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-028 Test analysis endpoint core behavior

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 7 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | T |

2.4 Test full workflow of analysis endpoint TRP-022

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

Analysis pipeline with matching event data

TC step 1: Send the POST request and verify the response fields (misp_events_found, severity, score_breakdown).

- 0 = flawless: The response from the API is successful (`HTTP 200`). The field `report.misp_events_found` contains two event IDs, the `severity` is greater than 0, and the `score_breakdown` contains the expected objects and attribute breakdowns.

TC step 2: Verification of MISP event attributes matching.

- 0 = flawless: Manual verification in MISP confirms that the events found by DECIPHER contain the attributes corresponding to the IOCs sent in the request.

TC step 3: Warninglist filtering verification.

- 0 = flawless: The event "[TEST] Low: Regular user login from corporate network" was correctly excluded from the results as its attribute is part of the `cisco-umbrella-blockpage-ipv4` warning list.

TC step 4: Flowintel case creation and content verification.

- 0 = flawless: The case was successfully created in Flowintel with a `priority-level:low` tag. The description is complete, including alert data, severity score, human-readable breakdown, and MISP search details.

Runtime settings for MISP search

TC steps 1-2: Runtime settings for MISP search - 5m window.

- 0 = flawless: After updating `event_timestamp` to `5m`, the API correctly returns an empty list for `misp_events_found` and a `severity` of 0, validating the temporal filtering.

TC step 3: Runtime settings for MISP search - 7d window.

- 0 = flawless: Restoring `event_timestamp` to `7d` allows the API to find the events again, confirming the configuration is updated dynamically.
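
The effect of the two window settings can be illustrated with a small time filter. The sketch below is not DECIPHER or MISP code: the helper names `parse_window` and `within_window` are hypothetical, only the values `5m` and `7d` come from this report, and the `h` unit as well as the comparison rule are assumptions.

```python
import re
from datetime import datetime, timedelta, timezone

# Assumed unit suffixes: minutes, hours, days.
_UNITS = {"m": "minutes", "h": "hours", "d": "days"}


def parse_window(text: str) -> timedelta:
    """Convert a window such as '5m' or '7d' into a timedelta."""
    match = re.fullmatch(r"(\d+)([mhd])", text)
    if match is None:
        raise ValueError(f"Unsupported window: {text!r}")
    amount, unit = match.groups()
    return timedelta(**{_UNITS[unit]: int(amount)})


def within_window(event_time: datetime, window: str, now: datetime) -> bool:
    """Return True if the event is no older than the window, relative to now."""
    return now - event_time <= parse_window(window)


# Hypothetical event that is two days old at evaluation time.
now = datetime(2026, 3, 12, 12, 0, tzinfo=timezone.utc)
event_time = now - timedelta(days=2)
found_with_7d = within_window(event_time, "7d", now)
found_with_5m = within_window(event_time, "5m", now)
```

An event that is two days old falls inside a `7d` window but outside a `5m` window, which mirrors the behaviour observed in TC steps 1-3.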

Clean up

The temporary Python environment .venv-pymisp was successfully removed.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-023 Test full workflow of analysis endpoint

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 7 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | T |

2.5 Test runtime-configurable DECIPHER features TRP-023

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

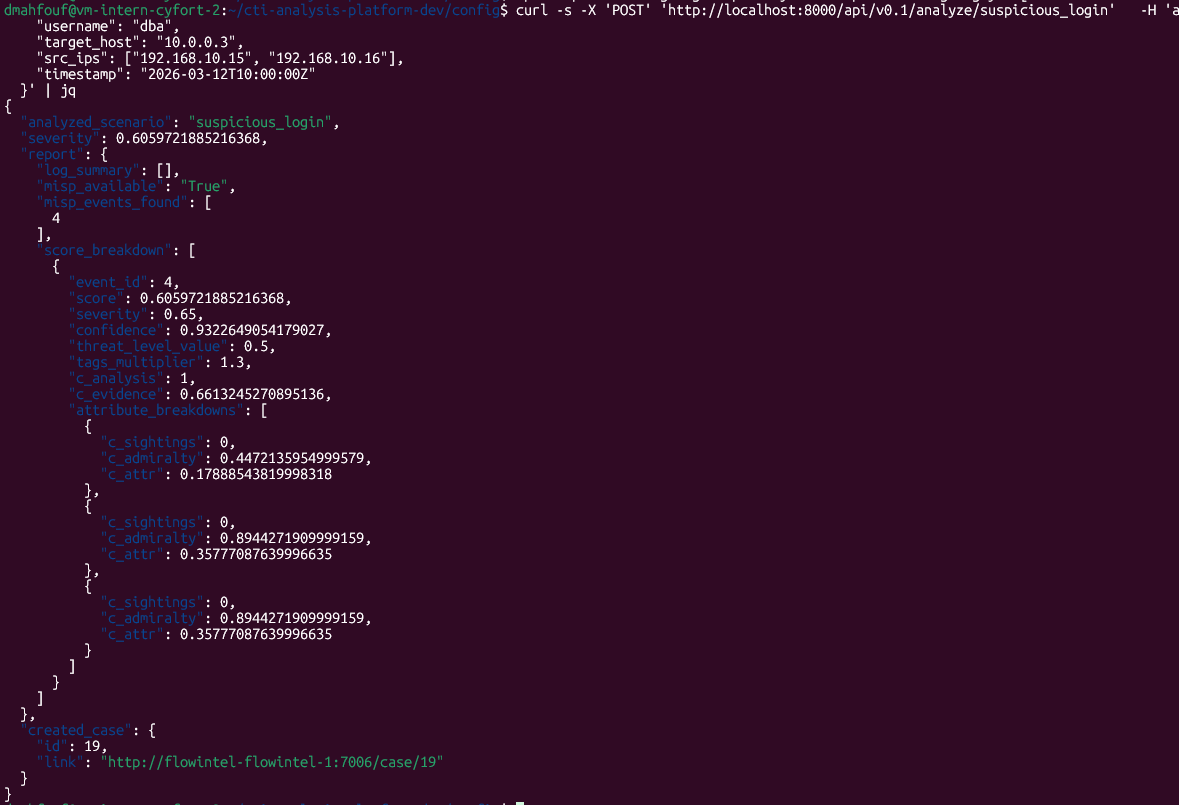

Test case step 1: Initial analysis with default thresholds

- 0 = flawless: The API returned a severity of 0.54. A case was successfully created in Flowintel with a priority-level:medium tag, as expected for this score.

Test case steps 2-3: Modification of priority thresholds (high: 0.5)

- 0 = flawless: After changing the priority_thresholds in decipher-runtime-cfg.yaml, a new analysis request resulted in a case with a priority-level:high tag, confirming the threshold update was applied instantly.
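
The change from `medium` to `high` for the same score can be reproduced with a simple threshold lookup. This is a sketch under assumptions: the function name `priority_for`, the rule "highest threshold not exceeding the severity", and all threshold values except `high: 0.5` are hypothetical and do not describe the actual defaults in decipher-runtime-cfg.yaml.

```python
from typing import Dict


def priority_for(severity: float, thresholds: Dict[str, float]) -> str:
    """Return the label with the highest threshold that severity reaches."""
    eligible = [
        (limit, label) for label, limit in thresholds.items() if severity >= limit
    ]
    if not eligible:
        raise ValueError(f"No threshold is reached by severity {severity}")
    return max(eligible)[1]


# Hypothetical default thresholds (assumption, not taken from the report).
default_thresholds = {"low": 0.0, "medium": 0.4, "high": 0.7}
# Same thresholds with "high" lowered to 0.5, as done in test case steps 2-3.
updated_thresholds = {**default_thresholds, "high": 0.5}

before = priority_for(0.54, default_thresholds)
after = priority_for(0.54, updated_thresholds)
```

With these illustrative values, a severity of 0.54 maps to `medium` before the change and to `high` afterwards.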

Test case steps 4-5: Runtime disabling of case creation

- 0 = flawless: Setting enable_case_creation: false in the configuration resulted in a response where created_case.id is 0 and the URL is empty.

Test case steps 6-7: Update of scoring weights (0.8 analysis / 0.2 empirical)

- 0 = flawless: After updating decipher-scoring-cfg.yaml with the new weights, the returned severity increased to approximately 0.606.
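
To illustrate why shifting weight towards the analysis component can raise the severity, the sketch below assumes that the final score is a weighted sum of an analysis score and an empirical score. This formula, the function name `combined_severity`, the component scores (0.65 and 0.43) and the starting weights (0.5 / 0.5) are all hypothetical; they were chosen only so that the arithmetic lands on the figures reported above and do not describe the actual DECIPHER scoring engine.

```python
def combined_severity(
    analysis: float, empirical: float, w_analysis: float, w_empirical: float
) -> float:
    """Weighted sum of two component scores; the weights must add up to 1."""
    if abs(w_analysis + w_empirical - 1.0) > 1e-9:
        raise ValueError("Weights must add up to 1")
    return w_analysis * analysis + w_empirical * empirical


# Hypothetical component scores (assumption for illustration only).
ANALYSIS_SCORE = 0.65
EMPIRICAL_SCORE = 0.43

severity_before = combined_severity(ANALYSIS_SCORE, EMPIRICAL_SCORE, 0.5, 0.5)
severity_after = combined_severity(ANALYSIS_SCORE, EMPIRICAL_SCORE, 0.8, 0.2)
```

Under these assumptions the severity moves from about 0.54 to about 0.606, because the larger weight is applied to the larger component.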

Test case step 8: Restoration of configuration

The decipher-scoring-cfg.yaml and decipher-runtime-cfg.yaml files were restored to their original states.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-024 Test runtime-configurable DECIPHER features

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 5 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | T |

2.6 Test analysis endpoint graceful degradation TRP-024

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

MISP service unavailability

TC steps 1-2: Bring MISP down with ./decipher_down.sh --misp and send a POST request with valid alert data.

- 0 = flawless: After bringing MISP down, the API correctly returned `HTTP 200`. The `severity` remained greater than 0 (based on internal analysis), and the `log_summary` explicitly mentioned a MISP enrichment failure due to an unreachable network.

TC step 3: Restart MISP and verify service recovery

- 0 = flawless: MISP was successfully restarted using `./decipher_up.sh --misp`.

Flowintel service unavailability

TC steps 4-5: Bring Flowintel down with ./decipher_down.sh --flowintel and send a POST request with valid alert data.

- 0 = flawless: After bringing Flowintel down, the API request returned `HTTP 200`. The `severity` was correctly calculated, and the `log_summary` properly reported the case creation failure.

TC step 6: Restart Flowintel and verify service recovery.

- 0 = flawless: Flowintel was brought back up using `./decipher_up.sh --flowintel`.
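
The behaviour verified in both outage scenarios follows a common graceful-degradation pattern: a failing external call is recorded in the log and the analysis result is still returned. The sketch below shows that pattern only. The function `analyze_with_fallback`, its callable parameters and the log messages are hypothetical; the response field names follow the API responses recorded in this report, and the fallback case value (`id` 0, empty link) is an assumption.

```python
from typing import Any, Callable, Dict, List


def analyze_with_fallback(
    alert: Dict[str, Any],
    base_severity: float,
    search_misp: Callable[[Dict[str, Any]], List[Any]],
    create_case: Callable[[Dict[str, Any], float], Dict[str, Any]],
) -> Dict[str, Any]:
    """Return an analysis result even if MISP or Flowintel is unreachable."""
    log_summary: List[str] = []

    events: List[Any] = []
    try:
        events = search_misp(alert)
    except ConnectionError as exc:
        log_summary.append(f"MISP enrichment failed: {exc}")

    case: Dict[str, Any] = {"id": 0, "link": ""}
    try:
        case = create_case(alert, base_severity)
    except ConnectionError as exc:
        log_summary.append(f"Case creation failed: {exc}")

    return {
        "severity": base_severity,
        "report": {"log_summary": log_summary, "misp_events_found": events},
        "created_case": case,
    }


def _unreachable(*_args: Any) -> Any:
    """Stand-in for a service that is down."""
    raise ConnectionError("network is unreachable")


degraded = analyze_with_fallback({"username": "alice"}, 0.3, _unreachable, _unreachable)
```

In `degraded`, the severity is preserved while both failures appear in `log_summary`, which corresponds to the `HTTP 200` responses observed above.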

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-026 Test analysis endpoint graceful degradation

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 4 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | T |

2.7 Test incidents endpoint TRP-025

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

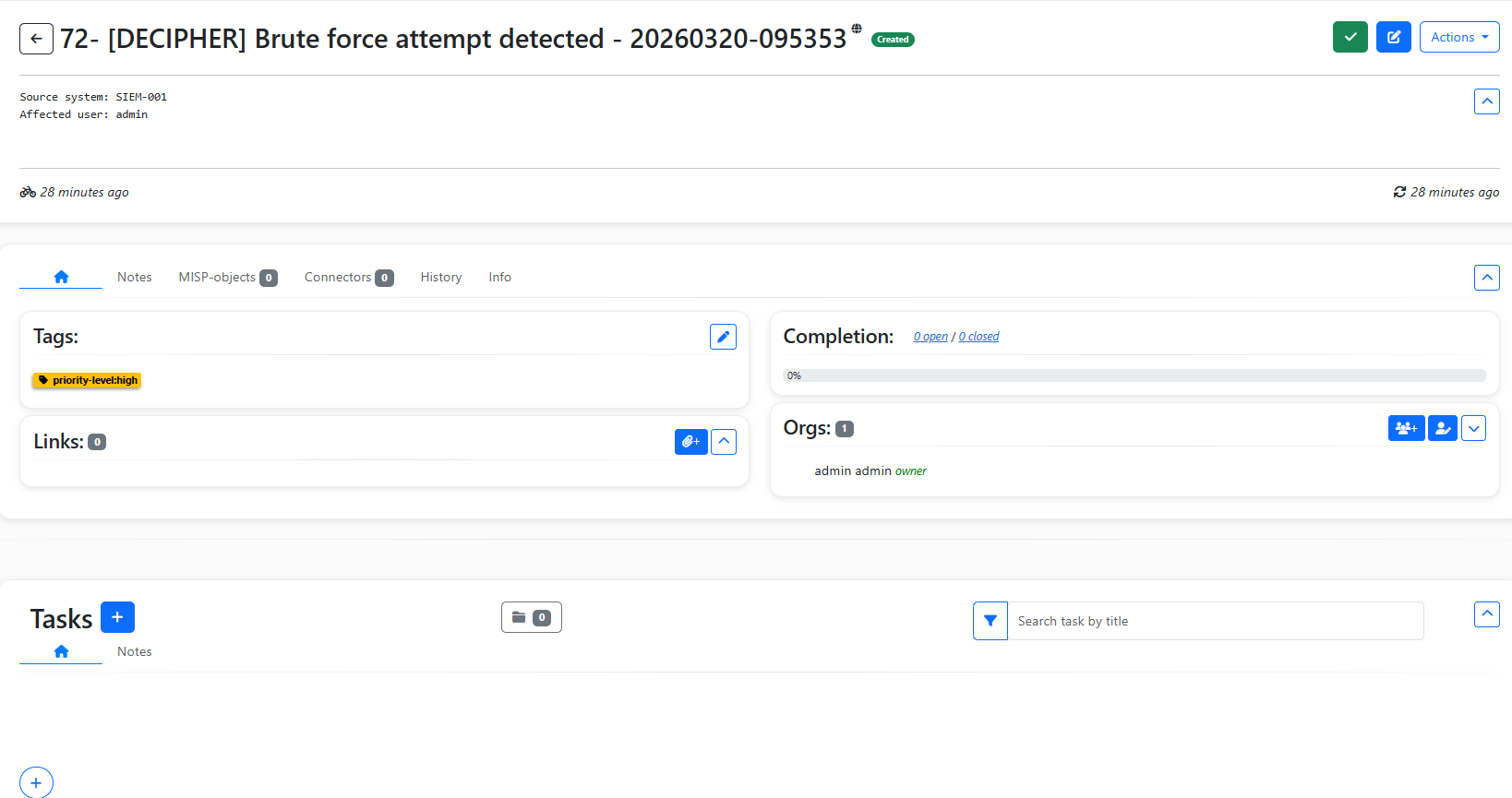

AC1: Valid incident creation

TC steps 1-5: Send a POST request with valid data, verify the HTTP 200 status, verify the non-zero id and link in the response body, access the case in Flowintel, and verify the title format.

- 0 = flawless: The output contains `HTTP 200`. The response body provides a non-zero `id` and a valid Flowintel link. In Flowintel, the case title is correctly prefixed with `[DECIPHER]` and suffixed with a timestamp.

{"id":72,"link":"http://192.168.0.32:7006/case/72"} HTTP 200

AC2: Invalid score rejection

TC steps 1-3: Send a POST request with a score outside the valid range (1.5), verify HTTP 422, and verify the validation error message references the score field.

- 0 = flawless: The output contains `HTTP 422`. The response body correctly identifies the validation error on the `score` field.

{"detail":[{"type":"value_error","loc":["body","priority_level"],"msg":"Value error, Invalid MISP priority level 'unclassified'. Valid values: [baseline-minor, baseline-negligible, emergency, high, low, medium, priority-level:baseline-minor, priority-level:baseline-negligible, priority-level:emergency, priority-level:high, priority-level:low, priority-level:medium, priority-level:severe, severe]","input":"unclassified","ctx":{"error":{}}}]} HTTP 422
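
The error body above enumerates the accepted `priority_level` values, each in a bare form and in a form prefixed with `priority-level:`. A minimal sketch of an equivalent check is given below. The function name `normalize_priority_level` and the choice of the prefixed form as canonical output are assumptions; the list of levels is copied from the error message.

```python
PREFIX = "priority-level:"

# Bare level names as enumerated in the validation error message above.
BARE_LEVELS = frozenset(
    {
        "baseline-minor",
        "baseline-negligible",
        "emergency",
        "high",
        "low",
        "medium",
        "severe",
    }
)


def normalize_priority_level(value: str) -> str:
    """Accept a bare or prefixed level and return the prefixed form."""
    bare = value[len(PREFIX):] if value.startswith(PREFIX) else value
    if bare not in BARE_LEVELS:
        raise ValueError(f"Invalid MISP priority level '{value}'")
    return PREFIX + bare


normalized_low = normalize_priority_level("low")
normalized_severe = normalize_priority_level("priority-level:severe")
```

A value such as `unclassified` raises `ValueError`, analogous to the `HTTP 422` response recorded above.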

AC3: Priority tag matches requested priority level

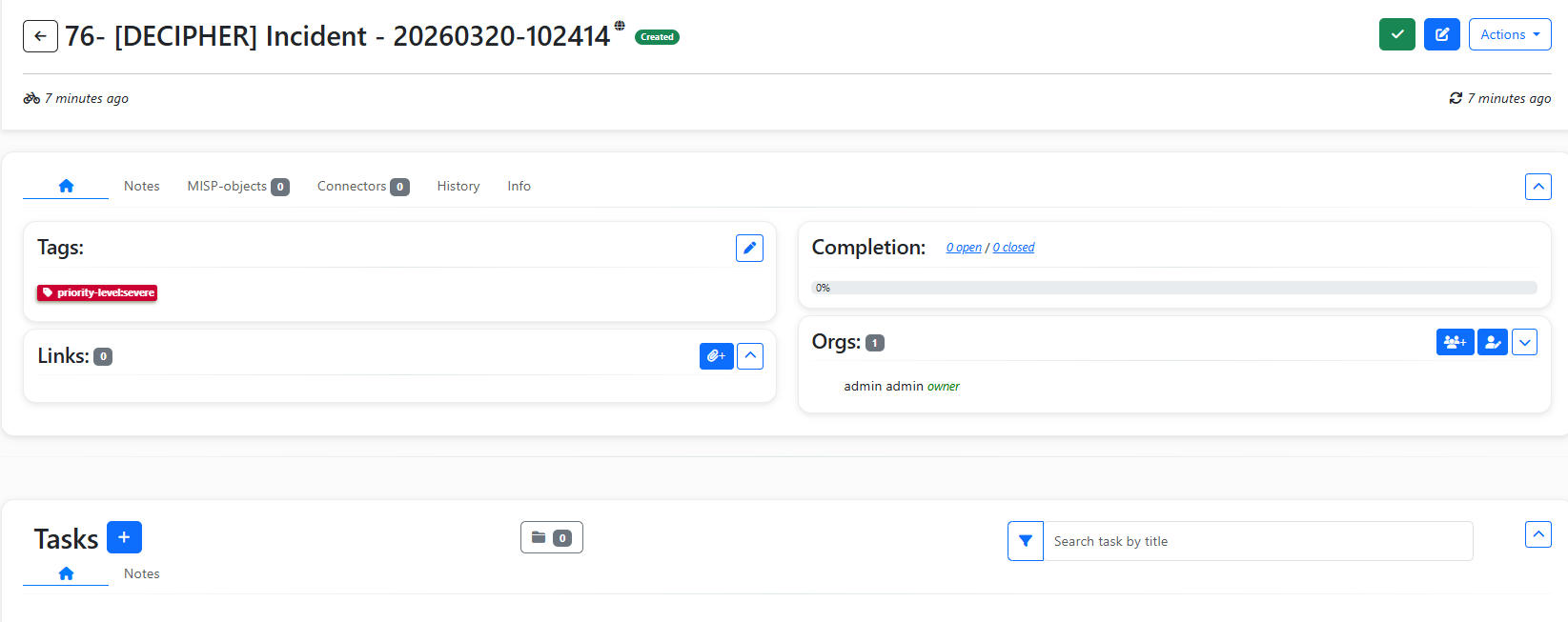

TC steps 1-3: Send a POST request with priority_level set to priority-level:severe.

- 0 = flawless: The output contains `HTTP 200`, the created case is accessible via the link, and the severity is `severe`.

{"id":76,"link":"http://192.168.0.32:7006/case/76"} HTTP 200

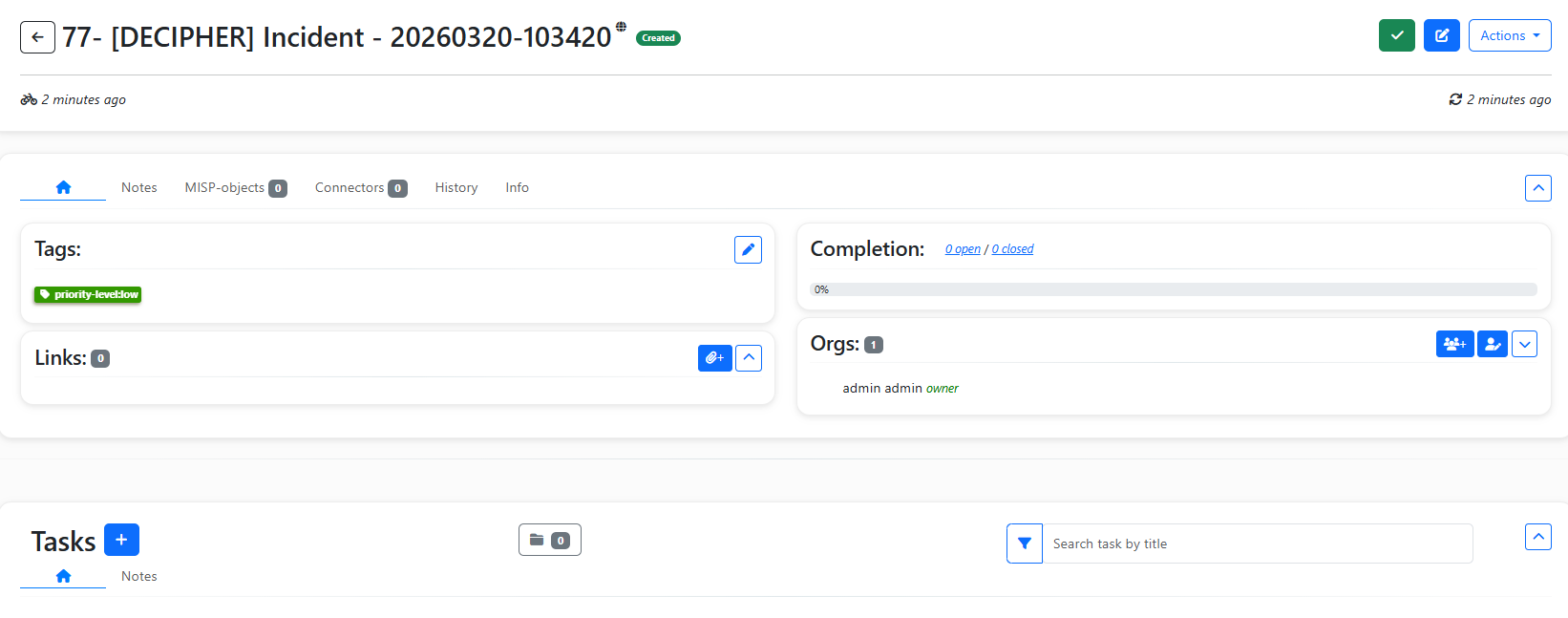

TC steps 4-6: Send a POST request with priority_level set to low.

- 0 = flawless: The output contains `HTTP 200`, the created case is accessible via the link, and the severity is `low`.

{"id":77,"link":"http://192.168.0.32:7006/case/77"} HTTP 200

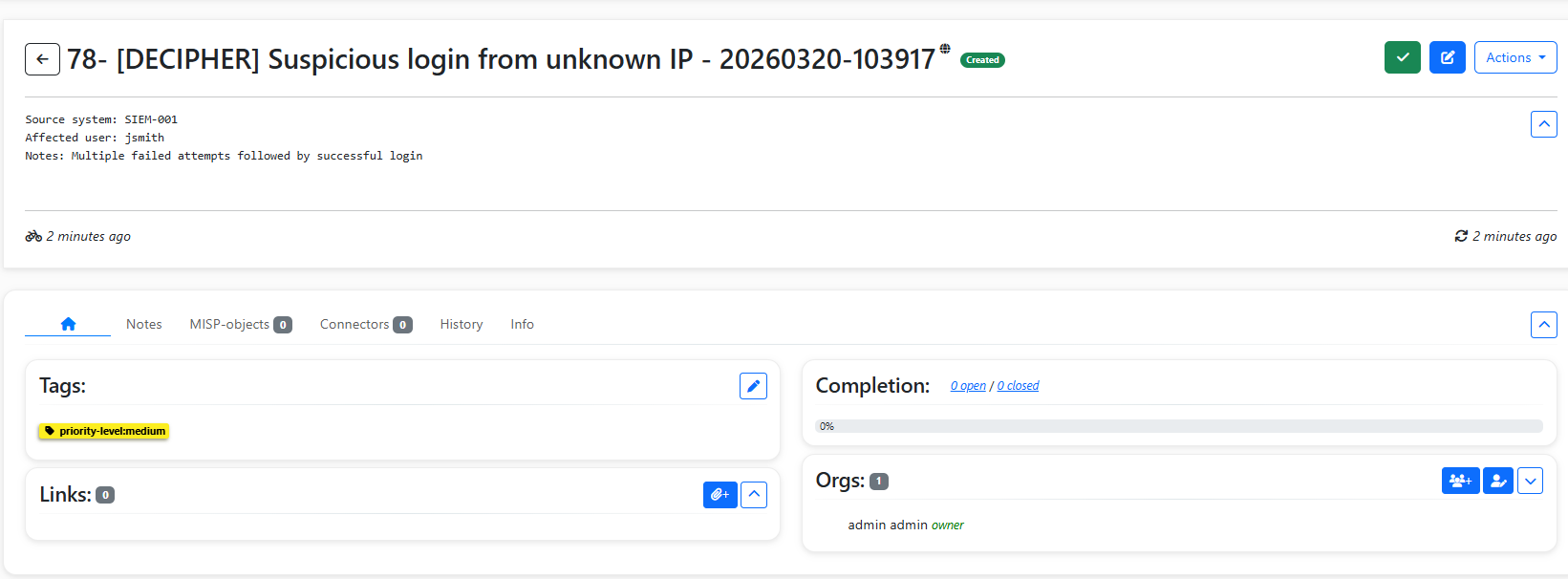

AC4: Case description

TC steps 1-3: Send a POST request with a description object containing additional key-value pairs.

- 0 = flawless: The output contains `HTTP 200`, the created case is accessible via the link, and the case description contains the fields provided in the `description` object: `source_system`, `affected_user`, and `notes`.

{"id":78,"link":"http://192.168.0.32:7006/case/78"} HTTP 200

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-025 Test incidents endpoint

| Attribute | Value |

|---|---|

| test-date | 2026-03-20 |

| tester | DMA |

| defect-category | 0 = flawless |

| passed-steps | 4 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.5 |

| verification-method | T |

2.8 TCER: extensible analyzer framework TRP-026

Relevant test environment and configuration details

- Software deviations: aligned with test case specification, N/A

- Hardware deviations: aligned with test case specification, N/A

Test execution results

Here we report the results in terms of step-wise alignments or deviations with respect to the expected outcome of the covered test case.

AC1: Decorator-based registration without API-layer modification

TC step 1: Open decipher/analyzers/registry.py and locate the AnalyzerRegistry class.

- 0 = flawless: Class successfully located.

TC step 2a: Verify that the class exposes a register class method intended for use as a decorator (@AnalyzerRegistry.register).

- 0 = flawless: `AnalyzerRegistry.register` is a `@classmethod` in `registry.py`.

TC step 2b: Verify that the method stores the decorated class in the internal _analyzers dict keyed by alert_type, without any import or reference to decipher/api.py.

- 0 = flawless: The method stores the decorated class in `_analyzers` keyed by `alert_type`; no import of `api.py` is present in `registry.py`.

TC step 3: Open decipher/analyzers/suspicious_login.py and verify that the SuspiciousLoginAnalyzer class is decorated with @AnalyzerRegistry.register.

- 0 = flawless: `SuspiciousLoginAnalyzer` (`suspicious_login.py`, line 47) is decorated with `@AnalyzerRegistry.register`.

TC step 4: Open decipher/analyzers/__init__.py and verify that adding a new analyzer module requires only a single import line in that file — no changes to decipher/api.py.

- 0 = flawless: `decipher/analyzers/__init__.py` registers the analyzer via a single import line (`from . import suspicious_login`) with no modifications to `api.py` required.

AC2: Duplicate registration is rejected at startup

TC step 2: Verify that, before inserting a new entry, the method checks whether alert_type is already present in _analyzers.

- 0 = flawless: `register()` checks `if alert_type in cls._analyzers` before inserting a new entry.

AC3: Error on duplicated analyzer identifier

TC step 1: Verify that a duplicate alert_type raises a ValueError with a message identifying the conflicting identifier (e.g. "Analyzer for '<type>' already registered").

- 0 = flawless: A duplicate `alert_type` raises `ValueError("Analyzer for '<type>' already registered")`, identifying the conflicting identifier.
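
The properties inspected in AC1 to AC3 correspond to a common decorator-based registry pattern. The following is a minimal sketch of that pattern and not a copy of `registry.py`: the class, method, dictionary and error message names follow this report, whereas reading `alert_type` from a class attribute of the decorated class is an assumption about how the identifier is obtained.

```python
from typing import Dict


class AnalyzerRegistry:
    """Keeps a mapping from alert type to analyzer class."""

    _analyzers: Dict[str, type] = {}

    @classmethod
    def register(cls, analyzer_cls: type) -> type:
        """Class decorator: store analyzer_cls under its alert_type."""
        # Assumption: the decorated class exposes its identifier as an attribute.
        alert_type = analyzer_cls.alert_type
        if alert_type in cls._analyzers:
            raise ValueError(f"Analyzer for '{alert_type}' already registered")
        cls._analyzers[alert_type] = analyzer_cls
        return analyzer_cls


@AnalyzerRegistry.register
class SuspiciousLoginAnalyzer:
    """Placeholder analyzer; the real class contains the analysis logic."""

    alert_type = "suspicious_login"
```

Because registration happens when the module defining the class is imported, adding an analyzer requires only that its module be imported once, and a second class declaring the same `alert_type` fails at import time with the `ValueError` shown above.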

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See linked files (if any), e.g., screenshots, logs, etc.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-027 Test extensible analyzer framework

| Attribute | Value |

|---|---|

| test-date | 2026-03-12 |

| tester | AAT |

| defect-category | 0 = flawless |

| passed-steps | 7 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 0.4 |

| verification-method | I |

3 SATRAP-DL general aspects TRP-SATRAP-DL

Test result reports concerning general aspects across the SATRAP-DL project, mostly related to non-functional requirements.

3.1 Test software identification with version TRP-027

Relevant test environment and configuration details

- Software deviations: aligned with test case specification

- Hardware deviations: N/A

Test execution results

Here we report the step-wise alignment or deviation with respect to the expected outcome of the covered test case.

Test case step 1: Verify that the SATRAP CLI has a command for retrieving the software name and version

- 0 = flawless: The command is available and returns the expected version string.

$ ./satrap.sh -V

SATRAP v1.0

$ ./satrap.sh --version

SATRAP v1.0
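
The terminal output above can be checked automatically by parsing the version line. This is a sketch only: the function name `parse_version_line` is hypothetical, and the pattern assumes the `<name> v<major>.<minor>` shape seen in the output above.

```python
import re
from typing import Tuple

# Matches lines such as "SATRAP v1.0" (name, space, 'v', dotted number).
VERSION_LINE = re.compile(r"^(?P<name>\S+) v(?P<version>\d+(?:\.\d+)*)$")


def parse_version_line(line: str) -> Tuple[str, str]:
    """Split a version line into (software name, version string)."""
    match = VERSION_LINE.match(line.strip())
    if match is None:
        raise ValueError(f"Unexpected version line: {line!r}")
    return match.group("name"), match.group("version")


name, version = parse_version_line("SATRAP v1.0")
```

For the recorded output, `name` is `SATRAP` and `version` is `1.0`, which agrees with the release-version attribute of this report.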

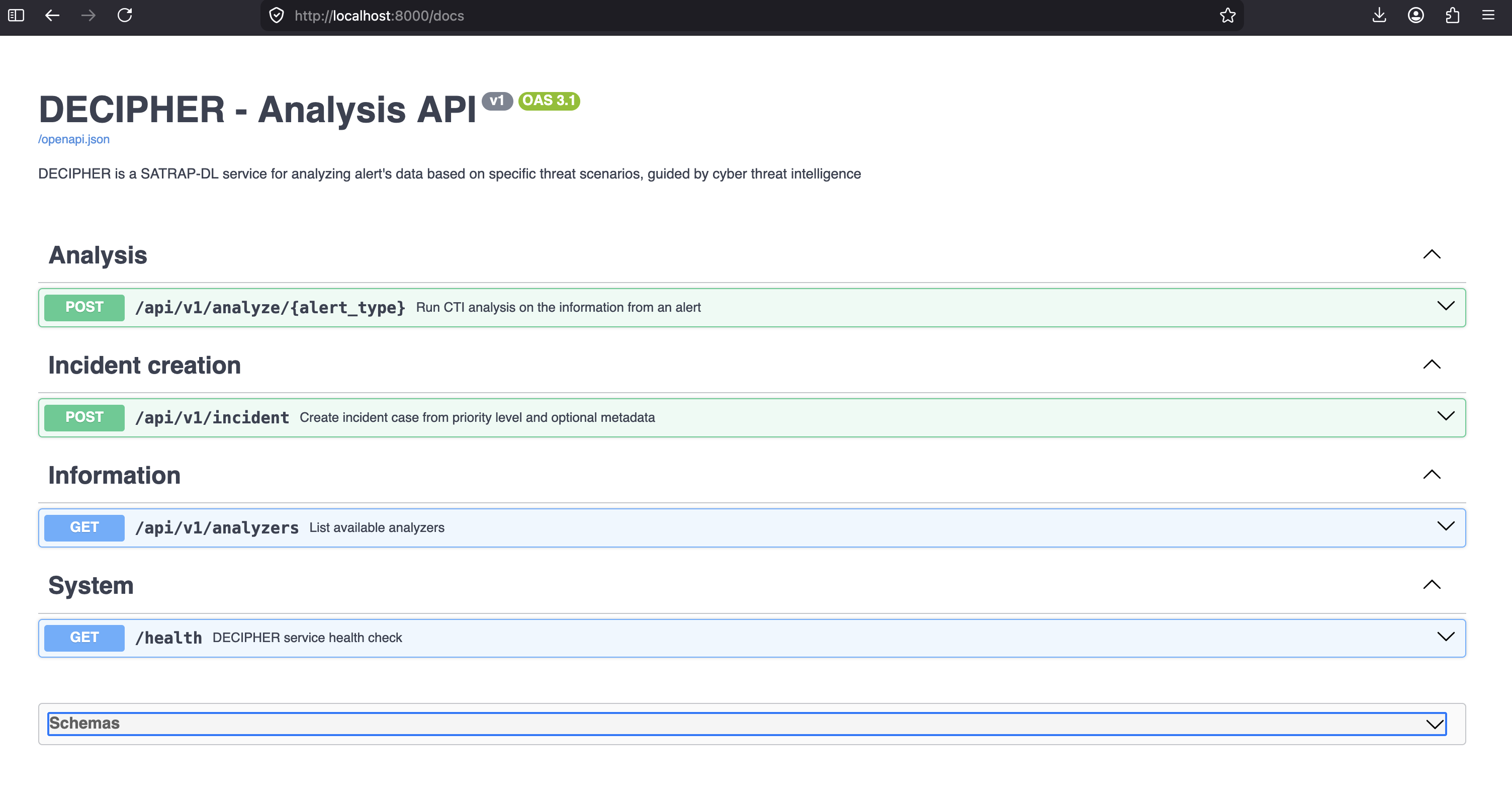

Test case step 2: Verify that DECIPHER REST API root endpoint provides the service version and identification details

- 0 = flawless: The root endpoint provides the expected JSON response with version and identification details.

Test case step 3: Verify that the DECIPHER API documentation is accessible and clearly indicates the version in the title and endpoint URLs.

- 0 = flawless: The API documentation is accessible at the expected URL and clearly shows the version in the title and endpoint URLs.

Defect summary description

Defect-free test execution, i.e., defect category: 0 = flawless

Test execution evidence

See screenshots and terminal output above.

Comments

N/A

Guide

- Defect category: 0 = flawless; 1 = insignificant defect; 2 = minor defect; 3 = major defect; 4 = critical defect

- Verification method (VM): Test (T), Review of design (R), Inspection (I), Analysis (A)

Parent links: TST-029 Retrieve software identification details

| Attribute | Value |

|---|---|

| test-date | 2026-05-07 |

| tester | IVS |

| defect-category | 0 = flawless |

| passed-steps | 3 |

| failed-steps | 0 |

| not-executed-steps | 0 |

| release-version | 1.0 |

| verification-method | T |