1.0 SONAR Software Design

Software design specifications for the SONAR (SIEM-Oriented Neural Anomaly Recognition) subsystem.

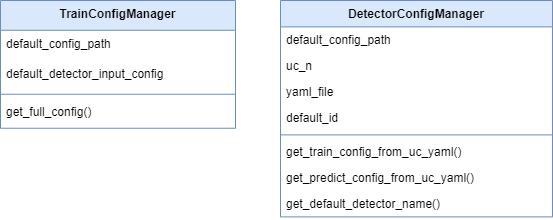

1.1 SONAR class structure and relationships SWD-022

Class diagram overview

The SONAR subsystem employs a modular class structure organized around configuration management, data access, and processing pipelines.

Core configuration classes

Scenario management classes

Configuration dataclasses summary

Implementation: sonar/config.py

| Dataclass | Key Fields | Purpose |
|---|---|---|
| PipelineConfig | wazuh, mvad, features, debug, shipping | Top-level pipeline configuration |
| WazuhIndexerConfig | base_url, username, password, alerts_index_pattern | Wazuh Indexer connection |
| MVADConfig | sliding_window, device, extra_params | MVAD engine parameters |
| FeatureConfig | numeric_fields, bucket_minutes, categorical_top_k | Feature extraction configuration |
| DebugConfig | enabled, data_dir, training_file, detection_file | Debug mode data sources |
| ShippingConfig | enabled, scenario_id, indexer_url, index_name | Anomaly shipping configuration |
| UseCase | name, description, training, detection, shipping | Scenario definition |
| TrainingScenario | lookback_hours, numeric_fields, sliding_window | Training parameters |
| DetectionScenario | mode, lookback_minutes, threshold, min_consecutive | Detection parameters |

Design patterns

| Pattern | Application | Benefit |
|---|---|---|
| Dataclasses | Configuration objects | Type safety, immutability, validation |
| Factory Method | UseCase.from_yaml() | Encapsulates YAML parsing logic |
| Strategy | Data providers (Wazuh/Local) | Interchangeable data sources |
| Facade | Engine wrapper | Simplifies MVAD library interface |
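
As a minimal sketch of how the dataclass and factory-method patterns in the table might combine, the following builds a frozen scenario object from an already-parsed mapping. The field subset, the default for min_consecutive, the "batch" mode value, and the from_dict helper are assumptions for illustration; UseCase.from_yaml() in sonar/config.py presumably parses the YAML file first and may differ in detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionScenario:
    """Illustrative subset of the detection parameters listed above."""
    mode: str
    lookback_minutes: int
    threshold: float
    min_consecutive: int = 1  # assumed default, not taken from sonar/config.py


@dataclass(frozen=True)
class UseCase:
    """Illustrative scenario definition with a factory method."""
    name: str
    description: str
    detection: DetectionScenario

    @classmethod
    def from_dict(cls, raw: dict) -> "UseCase":
        # Hypothetical helper: a from_yaml() factory could call this
        # after loading the scenario file into a dict.
        return cls(
            name=raw["name"],
            description=raw.get("description", ""),
            detection=DetectionScenario(**raw["detection"]),
        )


use_case = UseCase.from_dict({
    "name": "baseline_scenario",
    "detection": {"mode": "batch", "lookback_minutes": 60, "threshold": 0.85},
})
```

Note that immutability only holds because frozen=True is set; a plain @dataclass leaves fields assignable.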

Related documentation

- Complete class diagram: docs/manual/sonar_docs/uml-diagrams.md

- Architecture guide: docs/manual/sonar_docs/architecture.md

Parent links: LARC-019 SONAR training pipeline sequence, LARC-020 SONAR detection pipeline sequence

1.2 SONAR feature engineering design SWD-023

Feature extraction module

Implementation: sonar/features.py

The feature engineering module transforms raw Wazuh alerts into time-series data suitable for multivariate anomaly detection.

Time-series bucketing algorithm

Algorithm:

- Parse timestamps from alerts

- Round timestamps to bucket boundaries (configurable interval)

- Group by bucket timestamp

- Aggregate numeric features within each bucket

- Return time-indexed DataFrame
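
The steps above can be sketched with the standard library alone. This is an illustration under stated assumptions, not the code in sonar/features.py: it floors timestamps to the bucket start (the text says "round"), returns a plain dict keyed by bucket time instead of a DataFrame, and aggregates only an alert count and the mean of rule.level. The function name bucket_alerts is hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone


def bucket_alerts(alerts: list, bucket_minutes: int = 5) -> dict:
    """Group alerts into fixed-width time buckets and aggregate per bucket."""
    width = timedelta(minutes=bucket_minutes)
    epoch = datetime(1970, 1, 1, tzinfo=timezone.utc)
    grouped = defaultdict(list)
    for alert in alerts:
        # Parse the ISO 8601 timestamp ("Z" suffix rewritten for fromisoformat).
        ts = datetime.fromisoformat(alert["@timestamp"].replace("Z", "+00:00"))
        # Floor the timestamp to the start of its bucket.
        bucket = epoch + ((ts - epoch) // width) * width
        grouped[bucket].append(alert)
    rows = {}
    for bucket in sorted(grouped):
        levels = [a["rule"]["level"] for a in grouped[bucket]]
        rows[bucket] = {
            "alert_count": len(levels),
            "rule_level_mean": sum(levels) / len(levels),
        }
    return rows


sample = [
    {"@timestamp": "2026-02-04T12:01:10Z", "rule": {"level": 3}},
    {"@timestamp": "2026-02-04T12:04:59Z", "rule": {"level": 5}},
    {"@timestamp": "2026-02-04T12:05:00Z", "rule": {"level": 7}},
]
rows = bucket_alerts(sample, bucket_minutes=5)
```

With the sample input, the first two alerts fall into the 12:00 bucket and the third starts the 12:05 bucket.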

Feature types

| Feature Type | Extraction Method | Example Fields |

|---|---|---|

| Numeric | Direct field extraction | rule.level, data.win.eventdata.processId |

| Categorical | Top-K encoding | agent.name, rule.id, data.srcip |

| Aggregated | Count/sum per bucket | Alert count, unique IPs |

| Derived | Computed features | Time-of-day, day-of-week |

Bucketing strategy

Raw Alerts (variable frequency)

↓

Time Buckets (fixed intervals: 1, 5, or 10 minutes)

↓

Aggregated Features (one row per bucket)

↓

Time-Series DataFrame (input to MVAD)

Example bucketing:

- Bucket size: 5 minutes

- Input: 1000 alerts over 1 hour

- Output: 12 rows (one per 5-minute bucket)

Missing data handling

| Scenario | Strategy |

|---|---|

| Empty bucket | Fill with zeros or forward-fill previous value |

| Missing field | Use default value or skip feature |

| Sparse data | Interpolate or flag as anomalous |

Categorical encoding

Top-K frequency encoding:

- Count occurrences of each categorical value

- Keep top K most frequent values

- Map others to "other" category

- One-hot encode or label encode
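
A minimal sketch of the top-K steps, using the label-encoding variant. The function name top_k_encode and the integer code layout are assumptions; the sketch also assumes that no real category value is literally "other".

```python
from collections import Counter


def top_k_encode(values: list, k: int) -> tuple:
    """Label-encode values, mapping everything outside the top K to 'other'."""
    counts = Counter(values)
    # most_common() orders by descending frequency; ties keep first-seen order.
    kept = [value for value, _ in counts.most_common(k)]
    vocab = {value: index for index, value in enumerate(kept)}
    vocab["other"] = len(kept)  # shared code for all remaining values
    codes = [vocab.get(value, vocab["other"]) for value in values]
    return codes, vocab


agents = ["web01", "web01", "db01", "web01", "db01", "mail01"]
codes, vocab = top_k_encode(agents, k=2)
```

One-hot encoding would follow the same vocabulary, emitting one indicator column per entry instead of a single integer code.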

Related documentation

- Feature configuration: docs/manual/sonar_docs/scenario-guide.md

- Architecture: docs/manual/sonar_docs/architecture.md

- Implementation: sonar/features.py

Parent links: LARC-019 SONAR training pipeline sequence, LARC-020 SONAR detection pipeline sequence

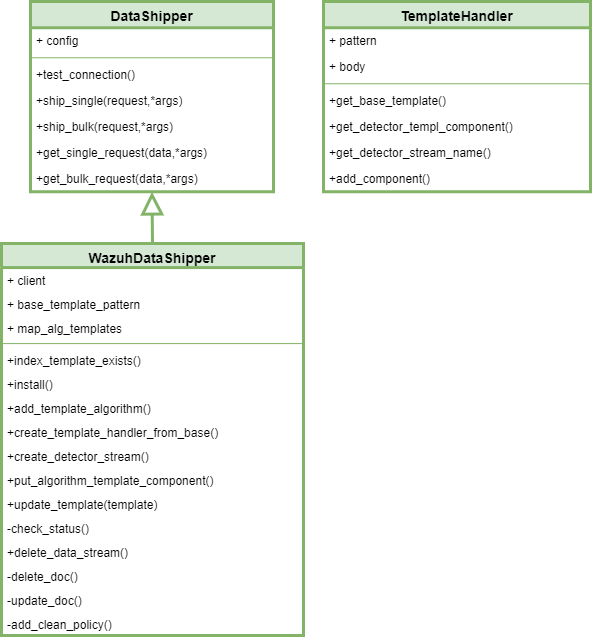

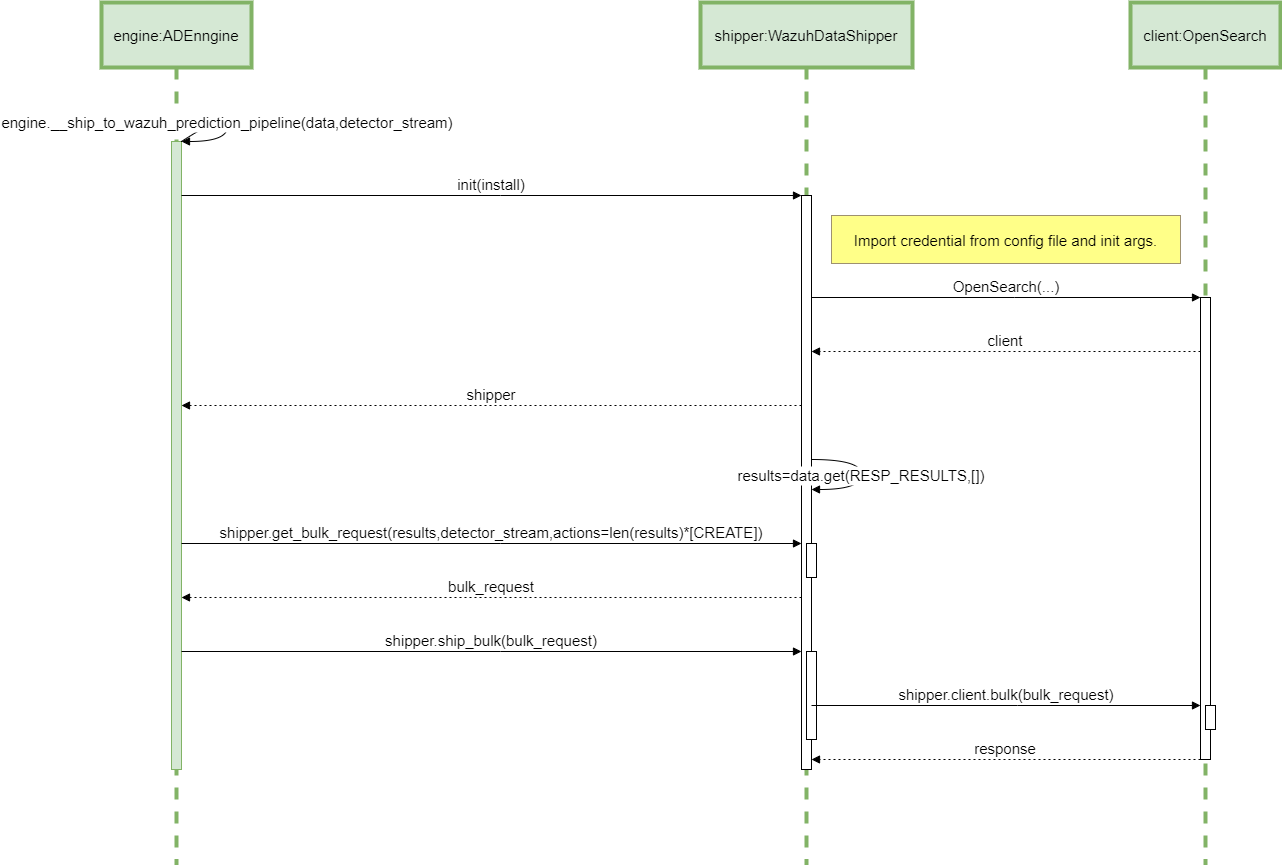

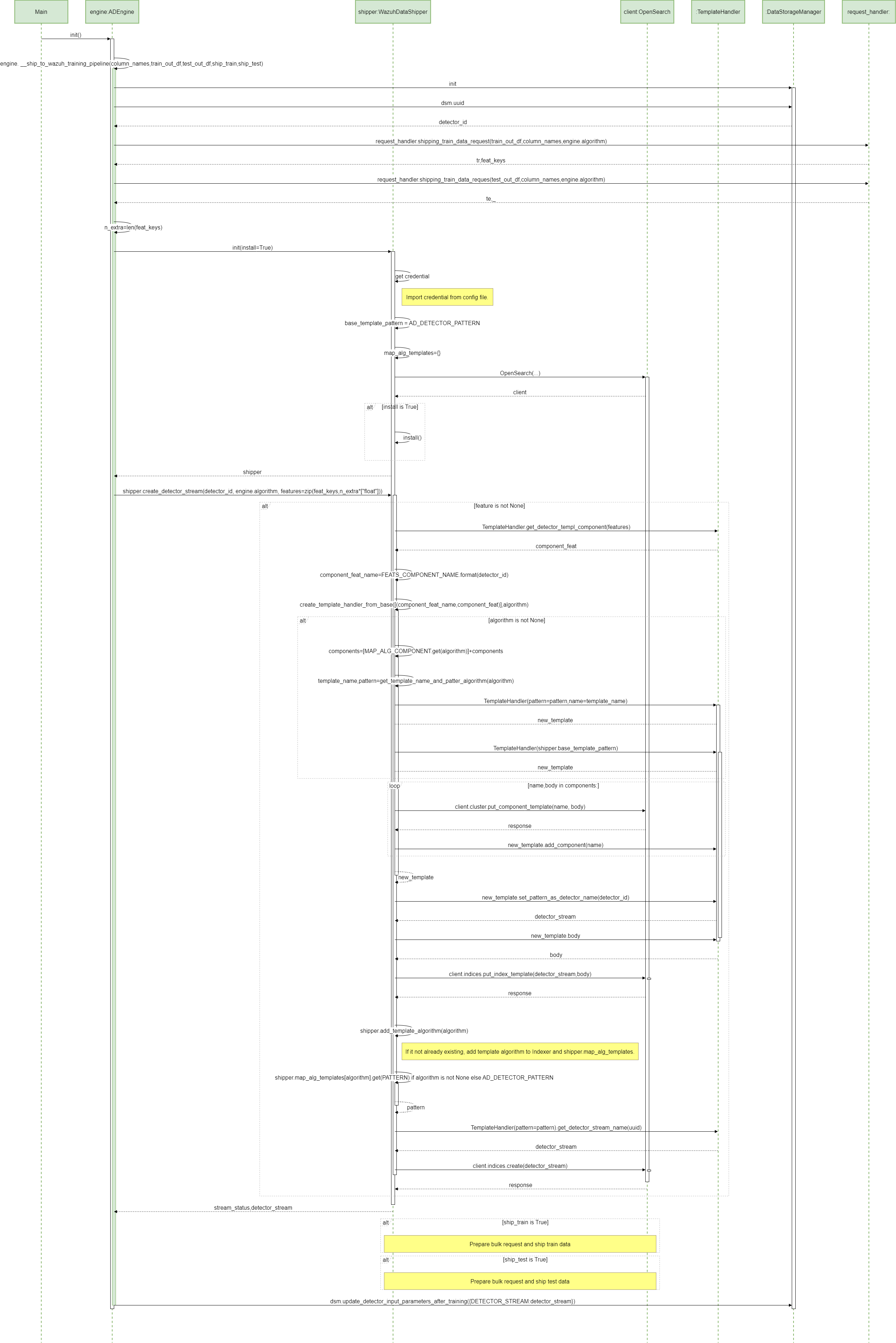

1.3 SONAR data shipping design SWD-024

Shipper module architecture

The shipper module (sonar/shipper/) manages the indexing of anomaly detection results to Wazuh data streams.

Component structure

sonar/shipper/

├── __init__.py

├── opensearch_shipper.py # Main shipper class

├── template_manager.py # Index template installation

└── bulk_processor.py # Bulk API operations

Data stream strategy

SONAR uses OpenSearch data streams for time-series storage:

| Data Stream | Purpose | Retention |
|---|---|---|
| logs-sonar.anomalies-default | Anomaly events | 30 days |
| logs-sonar.scores-default | Raw anomaly scores | 7 days |
| logs-sonar.metrics-default | Training/detection metrics | 90 days |

Bulk indexing design

    from typing import List

    class OpenSearchShipper:
        def ship_anomalies(self, documents: List[dict]) -> BulkResult:
            """
            Ships anomaly documents using bulk API.

            Process:
            1. Validate document structure
            2. Add @timestamp and data_stream fields
            3. Batch into chunks (500 documents/batch)
            4. Execute bulk API request
            5. Handle partial failures
            6. Return success/failure counts
            """

Document format

Each anomaly document includes:

    {
      "@timestamp": "2026-02-04T12:00:00Z",
      "event": {
        "kind": "alert",
        "category": ["intrusion_detection"],
        "type": ["info"]
      },
      "sonar": {
        "scenario_id": "baseline_scenario",
        "model_name": "baseline_model_20260204",
        "anomaly_score": 0.92,
        "threshold": 0.85,
        "window_start": "2026-02-04T11:55:00Z",
        "window_end": "2026-02-04T12:00:00Z"
      },
      "tags": ["sonar", "anomaly"]
    }

Error handling

| Error Type | Strategy | Recovery |

|---|---|---|

| Connection failure | Retry with exponential backoff | Queue for later |

| Document rejection | Log invalid docs | Continue with valid docs |

| Bulk partial failure | Retry failed documents | Track success rate |

| Template missing | Auto-install templates | Retry operation |
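
The batching step of the bulk indexing design and the partial-failure row above can be sketched as follows. This is an illustration only: the transport is an injected callable returning one boolean per document (a stand-in for the real bulk API response), and the BulkResult field names and the ship_in_batches helper are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Iterator, List


@dataclass
class BulkResult:
    """Assumed shape of the success/failure counts returned by the shipper."""
    succeeded: int = 0
    failed: int = 0


def chunked(documents: List[dict], size: int = 500) -> Iterator[List[dict]]:
    """Yield consecutive slices of at most `size` documents."""
    for start in range(0, len(documents), size):
        yield documents[start:start + size]


def ship_in_batches(
    documents: List[dict],
    send_bulk: Callable[[List[dict]], List[bool]],
    size: int = 500,
) -> BulkResult:
    """Send documents batch by batch and tally per-document outcomes."""
    result = BulkResult()
    for batch in chunked(documents, size):
        outcomes = send_bulk(batch)  # hypothetical transport: one bool per document
        result.succeeded += sum(outcomes)
        result.failed += len(outcomes) - sum(outcomes)
    return result


documents = [{"n": i} for i in range(1201)]
# Fake transport that rejects every 100th document.
result = ship_in_batches(documents, lambda batch: [d["n"] % 100 != 0 for d in batch])
```

Counting per document rather than per request is what allows a partially failed batch to be retried selectively.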

Integration with pipeline

The shipper is invoked from pipeline.py after post-processing:

Anomaly Detection → Post-Processing → Shipper → Data Streams

Related documentation

- Data shipping guide: docs/manual/sonar_docs/data-shipping-guide.md

Parent links: LARC-019 SONAR training pipeline sequence, LARC-020 SONAR detection pipeline sequence

1.4 SONAR debug mode design SWD-025

Local data provider architecture

The debug mode enables offline testing and development without requiring a live Wazuh Indexer instance.

Interface compatibility

The LocalDataProvider implements the same interface as WazuhIndexerClient for transparent dependency injection:

Interface contract:

- Method: fetch_alerts(start_time, end_time, filters=None) -> list[dict]

- Returns: List of alert dictionaries matching time range

- Time filtering: Alerts filtered by @timestamp field within [start_time, end_time]

- Format handling: Supports JSON array, single object, and OpenSearch API response formats

Data source configuration

Debug mode is configured in scenario YAML:

    debug:
      enabled: true
      data_dir: "./sonar/test_data/synthetic_alerts"
      training_data_file: "normal_baseline.json"
      detection_data_file: "with_anomalies.json"

JSON data formats supported

The provider handles multiple JSON formats:

- JSON array (preferred): [{"@timestamp": "...", "rule": {...}}, ...]

- Single object: {"@timestamp": "...", "rule": {...}}

- OpenSearch API response: {"hits": {"hits": [{"_source": {...}}, ...]}}

Time filtering

The local data provider applies the same time filtering as the Wazuh client:

- Parse @timestamp from each alert

- Filter alerts within [start_time, end_time]

- Return filtered list
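
A minimal sketch of the format handling and time filtering described above. It is not the code in sonar/local_data_provider.py: the helper names are hypothetical, and the sketch assumes timezone-aware bounds and ISO 8601 timestamps with a "Z" or explicit offset.

```python
import json
from datetime import datetime, timezone


def normalize_alerts(payload) -> list:
    """Reduce the three supported JSON shapes to a flat list of alerts."""
    if isinstance(payload, list):
        return payload  # JSON array
    if isinstance(payload, dict) and "hits" in payload:
        # OpenSearch API response: unwrap each hit's _source
        return [hit["_source"] for hit in payload["hits"]["hits"]]
    return [payload]  # single alert object


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp, accepting a trailing 'Z'."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def filter_by_time(alerts: list, start_time: datetime, end_time: datetime) -> list:
    """Keep alerts whose @timestamp lies within [start_time, end_time]."""
    return [a for a in alerts if start_time <= parse_ts(a["@timestamp"]) <= end_time]


start = datetime(2026, 2, 4, 11, 55, tzinfo=timezone.utc)
end = datetime(2026, 2, 4, 12, 0, tzinfo=timezone.utc)
payload = json.loads(
    '{"hits": {"hits": ['
    '{"_source": {"@timestamp": "2026-02-04T11:58:00Z"}},'
    '{"_source": {"@timestamp": "2026-02-04T12:30:00Z"}}]}}'
)
selected = filter_by_time(normalize_alerts(payload), start, end)
```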

Test data structure

Test data files in sonar/test_data/synthetic_alerts/:

| File | Alerts | Purpose | Anomalies |
|---|---|---|---|
| normal_baseline.json | 12,000 | Training data | None |
| with_anomalies.json | 6,000 | Detection testing | Yes (injected) |

Dependency injection pattern

The CLI selects the appropriate provider based on debug configuration:

Selection logic:

- If debug.enabled = true in scenario: instantiate LocalDataProvider with debug config

- Otherwise: instantiate WazuhIndexerClient with Wazuh config

- Both providers expose identical interface for transparent substitution

Benefits

- No infrastructure: Test without Wazuh deployment

- Reproducibility: Consistent test data across runs

- Speed: No network latency

- Isolation: Test feature changes independently

Implementation reference

Primary implementation: sonar/local_data_provider.py

Related documentation:

- Debug mode guide: docs/manual/sonar_docs/setup-guide.md

- Data injection: docs/manual/sonar_docs/data-injection-guide.md

Parent links: LARC-019 SONAR training pipeline sequence, LARC-020 SONAR detection pipeline sequence

2.0 RADAR Software Design

Software design specifications for the RADAR subsystem.

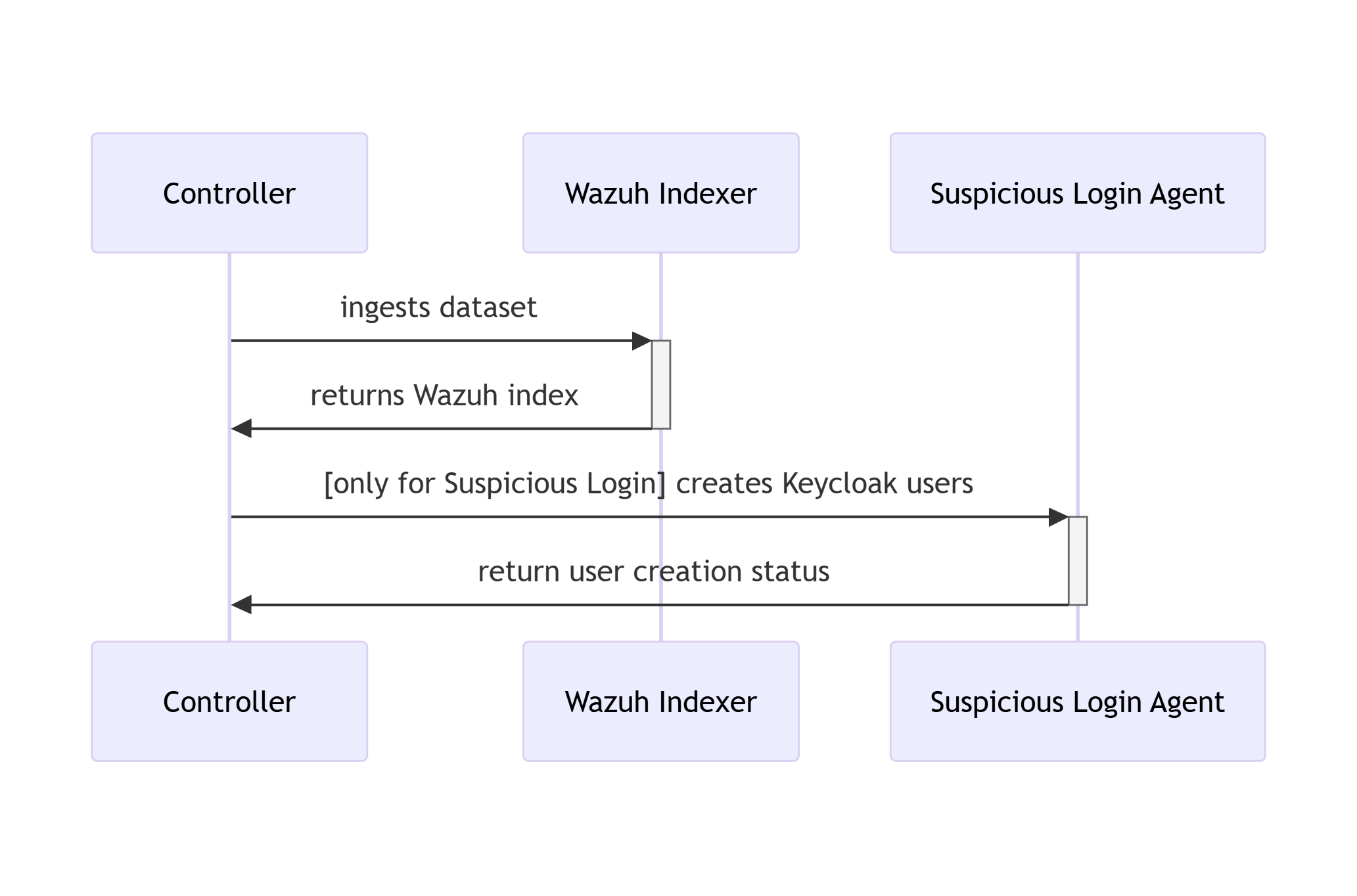

2.1 RATF: ingestion phase SWD-018

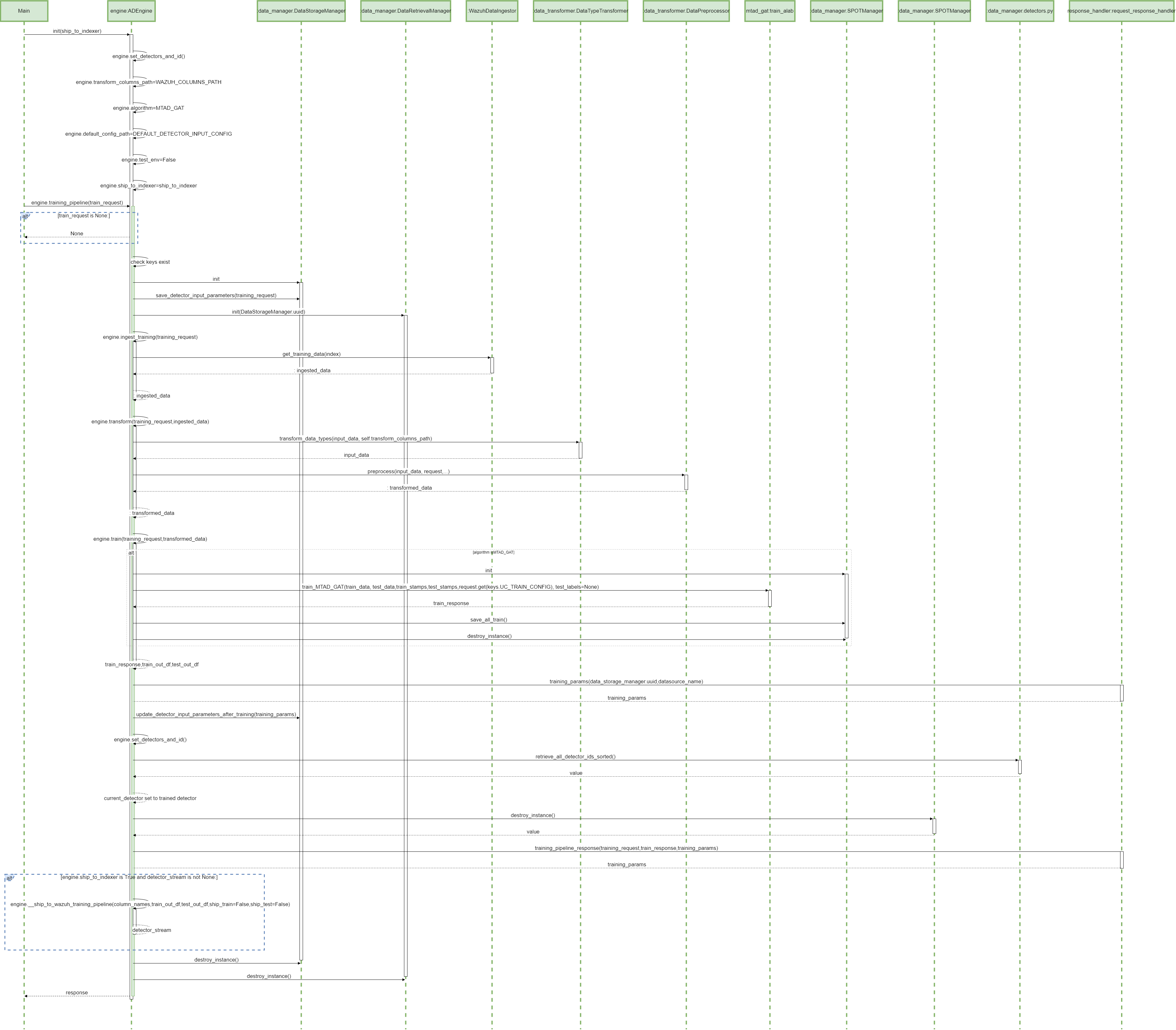

Sequence diagram of data ingestion and user setup in RATF

The diagram below depicts the sequence of actions orchestrated by the RADAR Automated Test Framework (RATF) in the ingestion phase, which:

- bulk-ingests the corresponding dataset into the OpenSearch index;

- for the Suspicious Login scenario, creates users in the Single Sign-On system.

Parent links: LARC-015 RADAR scenario setup flow

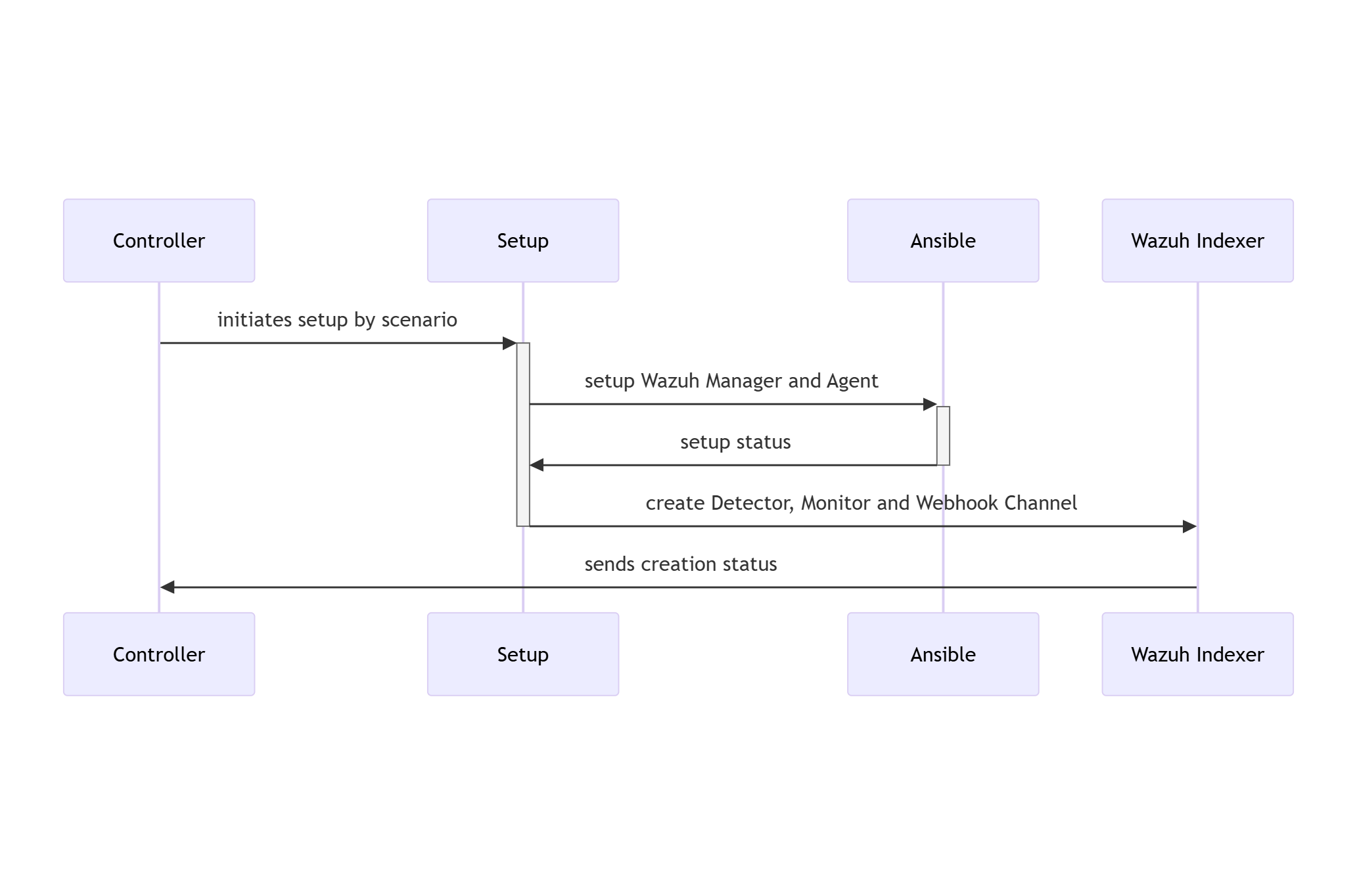

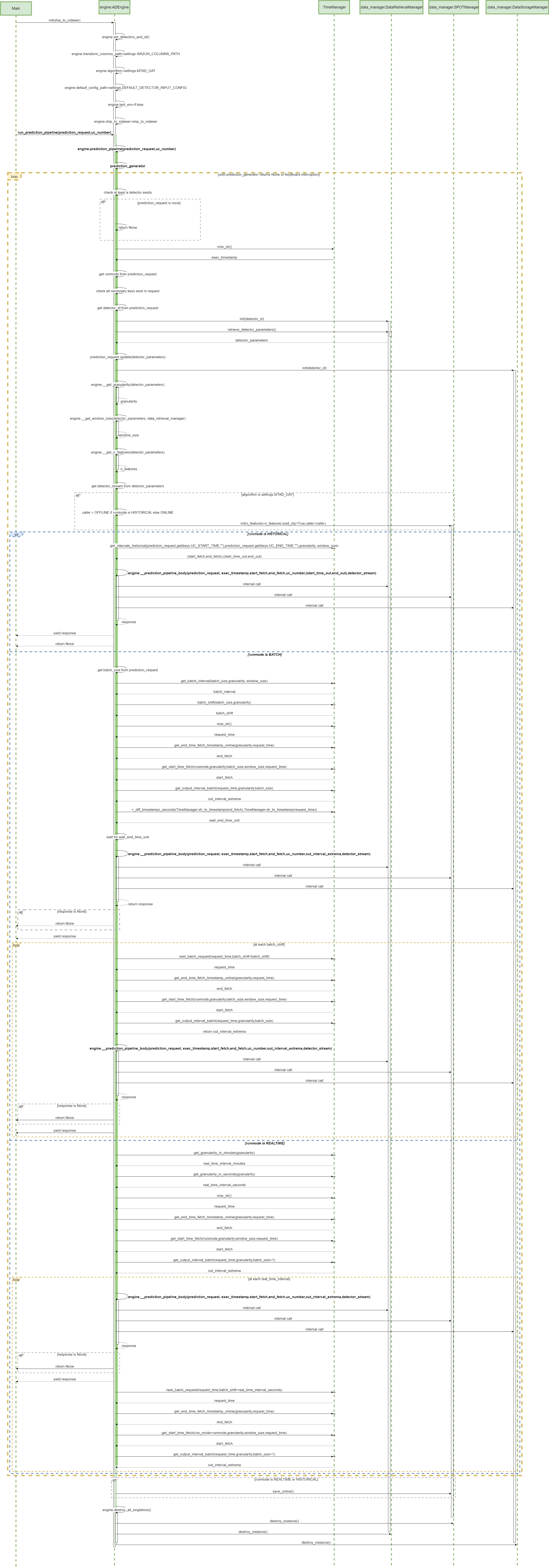

2.2 RATF: setup phase SWD-019

Sequence diagram of environment setup in RATF

The diagram below depicts the sequence of actions orchestrated by the RADAR Automated Test Framework in the setup phase, which sets up the Docker environments by copying rules and active responses and setting the required permissions.

Parent links: LARC-015 RADAR scenario setup flow, LARC-016 RADAR active response flow

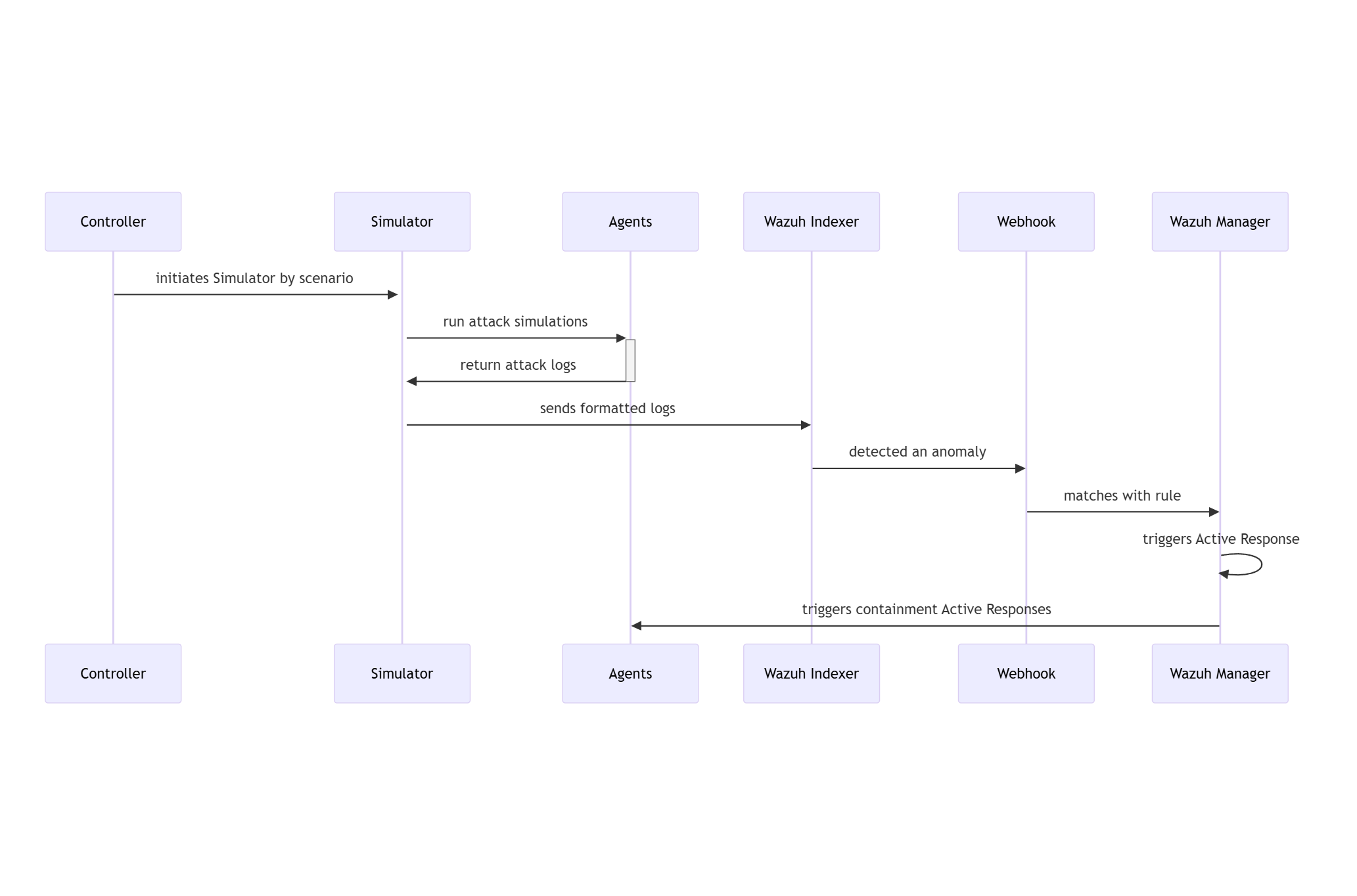

2.3 RATF: simulation phase SWD-020

Sequence diagram of threat simulation in RATF

The diagram below depicts the sequence of actions orchestrated by the RADAR Automated Test Framework in the simulation phase, which:

- simulates the threat scenario on the corresponding agent;

- feeds the resulting log to the corresponding index in OpenSearch.

Parent links: LARC-016 RADAR active response flow

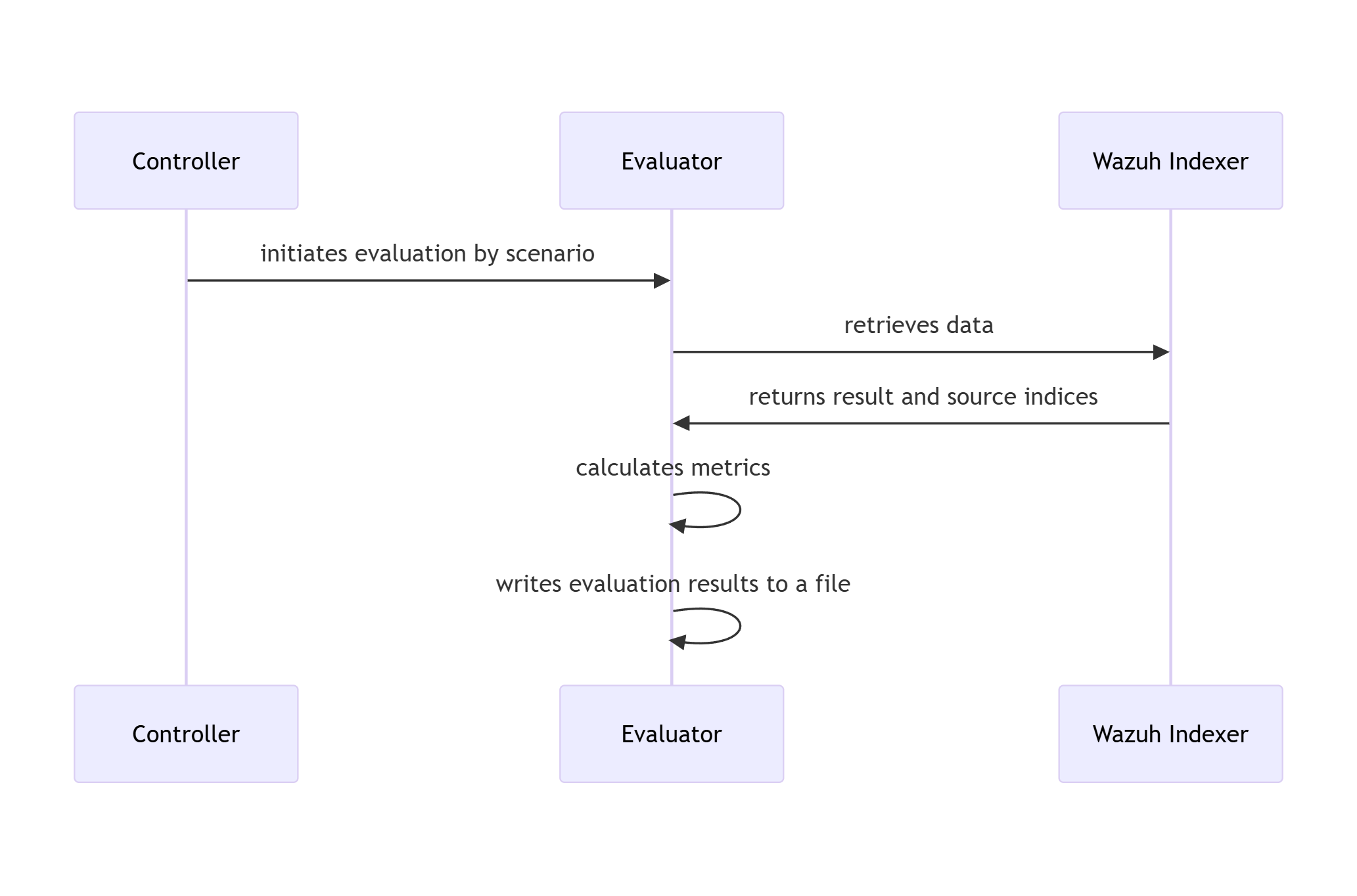

2.4 RATF: evaluation phase SWD-021

Sequence diagram of metrics evaluation in RATF

The diagram below depicts the sequence of actions orchestrated by the RADAR Automated Test Framework in the evaluation phase, which:

- retrieves the events from the index corresponding to the scenario;

- calculates evaluation metrics by comparing them with the dataset and simulation results.

Parent links: LARC-016 RADAR active response flow

2.5 RADAR risk engine implementation design SWD-026

This document specifies the implementation design for RADAR's risk-aware decision engine, which combines anomaly detection, signature-based detection, and cyber threat intelligence into a unified risk score that drives tiered automated responses.

Mathematical specification

Risk formula

The normalized risk score R ∈ [0,1] is computed as:

$$R = w_A \cdot A + w_S \cdot S + w_T \cdot T$$

Subject to the normalization constraint: $w_A + w_S + w_T = 1$

Component calculations

Anomaly intensity (A): $$A = G \times C$$

Where:

- G: Anomaly grade from detector (OpenSearch RCF or SONAR MVAD) $\in [0,1]$

- C: Confidence score from detector $\in [0,1]$

Signature risk (S): $$S = L \times I$$

Where:

- L: Likelihood value for scenario from configuration $\in [0,1]$

- I: Impact value for scenario from configuration $\in [0,1]$

CTI score (T):

$$T = 1 - \prod_{i=1}^{n} (1 - w_i)$$

Where $w_i$ is the weight for the i-th CTI indicator flag and n is the number of active indicators. This formula ensures:

- T = 0 when no CTI hits

- T approaches 1 as more indicators activate

- Non-linear growth reflects cumulative evidence strength
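
As a worked illustration (the weights are chosen arbitrarily here, not taken from ar.yaml), one, two, and three active indicators of equal weight $w_i = 0.5$ give:

$$T_1 = 1 - (1 - 0.5) = 0.5$$

$$T_2 = 1 - (1 - 0.5)^2 = 0.75$$

$$T_3 = 1 - (1 - 0.5)^3 = 0.875$$

Each additional hit raises T, but by half as much as the previous one, so T grows toward 1 without exceeding it.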

Default weight configuration

Default weights (overridable in ar.yaml):

    DEFAULT_WEIGHTS = {
        'w_ad': 0.4,   # Behavioral detection: high information value
        'w_sig': 0.4,  # Signature detection: high precision
        'w_cti': 0.2   # Threat intelligence: confirmatory evidence
    }

Rationale:

- Behavioral and signature detection equally weighted as primary detection methods

- CTI provides confirmatory evidence, reducing false positives

- Weights sum to 1.0 for normalized output

Algorithm specification

Risk calculation algorithm

Input parameters:

- anomaly_grade: AD output grade $\in [0,1]$

- confidence: AD output confidence $\in [0,1]$

- likelihood: Scenario likelihood $\in [0,1]$

- impact: Scenario impact $\in [0,1]$

- cti_indicators: List of (indicator_name, weight) tuples

- weights: Dict with keys $w_{\text{ad}}, w_{\text{sig}}, w_{\text{cti}}$

Algorithm:

- Calculate component A: $A = \text{anomaly\_grade} \times \text{confidence}$

- Calculate component S: $S = \text{likelihood} \times \text{impact}$

- Calculate component T using CTI product formula:

  - Initialize $T = 1.0$

  - For each indicator weight $w_i$: $T = T \times (1 - w_i)$

  - Final: $T = 1 - T$

- Compute weighted risk: $R = w_{\text{ad}} \times A + w_{\text{sig}} \times S + w_{\text{cti}} \times T$

- Clamp to bounds: $R = \max(0, \min(1, R))$

Returns: Risk score R ∈ [0,1]

Tier determination algorithm

Inputs:

- risk_score: Calculated risk $R \in [0,1]$

- thresholds: Dict with keys 'low' (default: 0.33) and 'high' (default: 0.66)

Mapping logic:

- If $R < \text{threshold}_{\text{low}}$: Tier = "low"

- Else if $R < \text{threshold}_{\text{high}}$: Tier = "medium"

- Else: Tier = "high"

Returns: Tier string ("low", "medium", or "high")

Configuration schema (ar.yaml)

    # Scenario-specific configurations
    scenarios:
      geoip_detection:
        ad:
          rule_ids: []
        signature:
          rule_ids: ["100900", "100901"]
        w_ad: 0.0
        w_sig: 0.6
        w_cti: 0.4
        delta_signature_minutes: 1
        signature_impact: 0.6
        signature_likelihood: 0.8
        tiers:
          tier1_min: 0.0
          tier1_max: 0.33
          tier2_max: 0.66
        allow_mitigation: true
        mitigations_tier2:
          - firewall-drop
        mitigations_tier3:
          - firewall-drop
      log_volume:
        ad:
          rule_ids: ["100309"]
        signature:
          rule_ids: []
        w_ad: 0.9
        w_sig: 0.0
        w_cti: 0.1
        delta_ad_minutes: 10
        signature_impact: 0.0
        signature_likelihood: 0.0
        tiers:
          tier1_min: 0.0
          tier1_max: 0.33
          tier2_max: 0.66
        allow_mitigation: true
        mitigations_tier2: []
        mitigations_tier3:
          - terminate_service.sh

Implementation classes

RiskEngine class

    from dataclasses import dataclass

    import numpy as np

    # Assumed default tier boundaries, mirroring the tiers block of the ar.yaml schema.
    # DEFAULT_WEIGHTS is the dict given under "Default weight configuration".
    DEFAULT_THRESHOLDS = {'tier1_min': 0.0, 'tier1_max': 0.33, 'tier2_max': 0.66}

    @dataclass
    class RiskInput:
        """Input data for risk calculation."""
        # Required fields come first: dataclass fields without defaults
        # cannot follow fields that have defaults.
        likelihood: float
        impact: float
        cti_indicators: list[tuple[str, float]]
        anomaly_grade: float = 0.0
        confidence: float = 0.0

    @dataclass
    class RiskOutput:
        """Risk calculation result."""
        risk_score: float
        tier: int  # 0, 1, 2, or 3
        components: dict[str, float]  # A, S, T values for transparency

    class RiskEngine:
        """Risk calculation engine."""

        def __init__(self, config: dict):
            """
            Initialize risk engine with configuration.

            Args:
                config: Risk parameters from ar.yaml
            """
            self.weights = config.get('weights', DEFAULT_WEIGHTS)
            self.tier_thresholds = config.get('tier_thresholds', DEFAULT_THRESHOLDS)

            # Validate weights sum to 1.0
            weight_sum = sum(self.weights.values())
            if not np.isclose(weight_sum, 1.0):
                raise ValueError(f"Weights must sum to 1.0, got {weight_sum}")

        def calculate(self, input_data: RiskInput) -> RiskOutput:
            """Calculate risk score and tier."""
            # Calculate components
            A = input_data.anomaly_grade * input_data.confidence
            S = input_data.likelihood * input_data.impact
            T = self._calculate_cti_score(input_data.cti_indicators)

            # Weighted combination
            R = (self.weights['w_ad'] * A +
                 self.weights['w_sig'] * S +
                 self.weights['w_cti'] * T)

            # Clamp to [0, 1], as required by the algorithm specification
            R = max(0.0, min(1.0, R))

            # Determine tier
            tier = self._determine_tier(R)

            return RiskOutput(
                risk_score=R,
                tier=tier,
                components={'A': A, 'S': S, 'T': T}
            )

        def _calculate_cti_score(self, indicators: list[tuple[str, float]]) -> float:
            """Calculate CTI score using product formula."""
            if not indicators:
                return 0.0

            product = 1.0
            for _, weight in indicators:
                product *= (1.0 - weight)
            return 1.0 - product

        def _determine_tier(self, risk_score: float) -> int:
            """Map risk score to tier (0, 1, 2, or 3)."""
            if risk_score < self.tier_thresholds['tier1_min']:
                return 0
            elif risk_score < self.tier_thresholds['tier1_max']:
                return 1
            elif risk_score < self.tier_thresholds['tier2_max']:
                return 2
            else:
                return 3

Testing requirements

Unit test coverage

Test cases must cover:

- Boundary conditions: R = 0, R = 1, tier thresholds

- Weight validation: Sum to 1.0, individual bounds $[0,1]$

- CTI aggregation: Empty list, single indicator, multiple indicators

- Tier mapping: Each tier range, threshold boundaries

- Component isolation: Each of A, S, T independently

Example test case specification

Test: Medium tier risk calculation

Given:

- Weights: $w_{\text{ad}}=0.4, w_{\text{sig}}=0.4, w_{\text{cti}}=0.2$

- Tier thresholds: tier1_min=0.0, tier1_max=0.33, tier2_max=0.66

- Inputs: anomaly_grade=0.62, confidence=0.74, likelihood=0.4, impact=0.9

- CTI indicators: [("ip_blacklisted", 0.6), ("domain_malicious", 0.4)]

Expected:

- Component A = 0.4588

- Component S = 0.36

- Component T = 0.76

- Risk score R = 0.4795 (within [0.47, 0.48])

- Tier = 2
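
The expected values can be reproduced with a short standalone check. This sketch recomputes the formulas directly rather than calling RiskEngine, so it verifies the arithmetic of the specification, not the implementation; the tolerance on R reflects the four-decimal rounding used above.

```python
import math

weights = {"w_ad": 0.4, "w_sig": 0.4, "w_cti": 0.2}
anomaly_grade, confidence = 0.62, 0.74
likelihood, impact = 0.4, 0.9
cti_indicators = [("ip_blacklisted", 0.6), ("domain_malicious", 0.4)]

A = anomaly_grade * confidence                            # anomaly intensity
S = likelihood * impact                                   # signature risk
T = 1.0 - math.prod(1.0 - w for _, w in cti_indicators)   # CTI score
R = weights["w_ad"] * A + weights["w_sig"] * S + weights["w_cti"] * T

assert math.isclose(A, 0.4588)
assert math.isclose(S, 0.36)
assert math.isclose(T, 0.76)
assert math.isclose(R, 0.4795, abs_tol=1e-4)
assert 0.33 <= R < 0.66  # falls in the tier 2 range
```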

Integration points

- Input: Alert data from Wazuh (via stdin JSON)

- Configuration: ar.yaml loaded at active response initialization

- Output: Risk score and tier logged to active-responses.log

- Action planning: Tier drives action selection in radar_ar.py

Performance requirements

- Algorithmic complexity: O(n) where n = number of CTI indicators (typically < 10)

- No external API calls during calculation (CTI queried earlier in pipeline)

- Target execution time: < 10ms (suitable for synchronous active response)

Implementation references

Primary implementation: radar/scenarios/active_responses/radar_ar.py

Configuration files:

- radar/scenarios/active_responses/ar.yaml

- Example scenarios: radar/scenarios/

Related documentation:

- Risk calculation math: docs/manual/radar_docs/radar-risk-math.md

Parent links: LARC-021 RADAR risk engine calculation flow, LARC-026 RADAR active response decision pipeline

2.6 RADAR active response script design SWD-027

This document specifies the software design for radar_ar.py, the main active response script that orchestrates risk-aware automated threat response in RADAR.

Architecture overview

The script implements a pipeline architecture with pluggable scenario strategies, following these design patterns:

- Strategy Pattern: Scenario-specific behavior via BaseScenario subclasses

- Registry Pattern: Scenario identification via configured rule mappings

- Pipeline Pattern: Sequential stages with error handling at each step

- Data Classes: Type-safe configuration and data structures

Class structure

RadarActiveResponse (main orchestrator)

    class RadarActiveResponse:
        """Main orchestrator for RADAR active response pipeline."""

        def __init__(self, config_path: str = "/var/ossec/active-response/ar.yaml"):
            """
            Initialize active response system.

            Args:
                config_path: Path to ar.yaml configuration file

            Behavior:
                - Loads configuration from ar.yaml
                - Initializes ScenarioIdentifier with rule mappings
                - Creates API clients from environment variables:
                    * WazuhApiClient (WAZUH_API_URL, WAZUH_AUTH_USER, WAZUH_AUTH_PASS)
                    * OpenSearchClient (OS_URL, OS_USER, OS_PASS, OS_VERIFY_SSL)
                    * DecipherClient (optional: DECIPHER_BASE_URL, DECIPHER_VERIFY_SSL)
                - Sets up logger for audit trail
                - Initializes decision cache for idempotency
            """
            pass

        def run(self) -> int:
            """
            Execute the complete active response pipeline.

            Returns:
                Exit code: 0 (success), 1 (no match), 2 (error)

            Pipeline Stages:
                1. Read alert from stdin (JSON format)
                2. Identify scenario via rule ID mapping
                3. Check idempotency (SHA256 hash of alert_id:timestamp:scenario)
                4. Get scenario-specific handler (BaseScenario subclass)
                5. Collect context (OpenSearch query for correlated events)
                6. CTI enrichment (query SATRAP DECIPHER for IOC reputation)
                7. Calculate risk score (weighted formula based on detection type)
                8. Plan actions (tier-based selection from ar.yaml)
                9. Execute actions (Wazuh AR, email, case creation)
                10. Audit log (structured JSON to active-responses.log)

            Error Handling:
                - Returns 1 if no alert or no scenario match
                - Returns 0 if duplicate decision (idempotency check)
                - Returns 2 on critical error (logged with stack trace)
            """
            pass

        def _read_alert_from_stdin(self) -> dict | None:
            """Read and parse Wazuh alert from stdin. Returns None on empty input or JSON parse error."""
            pass

        def _generate_decision_id(self, alert: dict, scenario_name: str) -> str:
            """
            Generate unique decision ID for idempotency.

            Formula: SHA256(alert_id:timestamp:scenario_name)[:16]
            """
            pass

        def _collect_context(
            self,
            alert: dict,
            scenario: 'BaseScenario',
            scenario_context: dict
        ) -> dict:
            """
            Query OpenSearch for correlated events and extract IOCs.

            Behavior:
                1. Get time window from scenario handler (scenario.get_time_window)
                2. Get effective agent filter (scenario.get_effective_agent)
                3. Build OpenSearch query with time range and agent filter
                4. Execute query on wazuh-alerts-* index
                5. Extract IOCs from alert + correlated events

            Returns:
                dict with keys: window_start, window_end, effective_agent,
                correlated_events, iocs
            """
            pass

        def _extract_iocs(self, alert: dict, events: list[dict]) -> dict:
            """
            Extract indicators of compromise from alert and events.

            IOC Categories:
                - ips: srcip, dstip, src_ip, dst_ip fields
                - users: srcuser, dstuser, user, username fields
                - hashes: md5, sha1, sha256 fields
                - domains: url, hostname, domain fields

            Returns:
                dict[str, list[str]] with IOCs grouped by category
            """
            pass
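
The decision ID formula documented for _generate_decision_id can be sketched as below. The alert field names ("id" and "timestamp") and the in-memory set used as a decision cache are assumptions for illustration; radar_ar.py may read different fields and persist decisions differently.

```python
import hashlib


def generate_decision_id(alert: dict, scenario_name: str) -> str:
    """Return the first 16 hex characters of SHA256(alert_id:timestamp:scenario)."""
    # Assumed field names: the alert's "id" and "timestamp" keys.
    raw = f"{alert.get('id', '')}:{alert.get('timestamp', '')}:{scenario_name}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:16]


seen_decisions = set()  # hypothetical stand-in for the decision cache


def is_duplicate(decision_id: str) -> bool:
    """Record the decision ID and report whether it was already handled."""
    if decision_id in seen_decisions:
        return True
    seen_decisions.add(decision_id)
    return False


alert = {"id": "1707048000.12345", "timestamp": "2026-02-04T12:00:00Z"}
first = generate_decision_id(alert, "log_volume")
first_seen = is_duplicate(first)
second_seen = is_duplicate(generate_decision_id(alert, "log_volume"))
```

Because the ID is derived only from the alert and scenario, re-delivery of the same alert yields the same ID and is skipped.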

ScenarioIdentifier (registry pattern)

    class ScenarioIdentifier:
        """Maps Wazuh rule IDs to RADAR scenarios."""

        def __init__(self, config: dict):
            """
            Initialize scenario identifier with configuration.

            Args:
                config: Parsed ar.yaml configuration

            Behavior:
                Builds reverse mapping from rule IDs to scenario contexts.
                Mapping includes both signature and AD rule IDs.
            """
            pass

        def identify(self, alert: dict) -> dict | None:
            """
            Identify scenario for given alert.

            Args:
                alert: Wazuh alert dictionary

            Returns:
                Scenario context dict with keys: name, detection, config, alert
                Returns None if rule ID has no matching scenario

            Lookup Logic:
                - Extract rule.id from alert
                - Search rule_to_scenario mapping
                - Return scenario context if found
            """
            pass
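
A minimal sketch of the reverse mapping and lookup described in the docstrings above, written as free functions for brevity. It assumes the ar.yaml layout shown in the risk engine configuration schema (scenarios, each with ad.rule_ids and signature.rule_ids); the function names are hypothetical.

```python
from typing import Optional


def build_rule_map(config: dict) -> dict:
    """Build a rule ID -> scenario context mapping from parsed ar.yaml."""
    rule_map = {}
    for name, scenario in config.get("scenarios", {}).items():
        for detection in ("ad", "signature"):
            for rule_id in scenario.get(detection, {}).get("rule_ids", []):
                rule_map[str(rule_id)] = {
                    "name": name,
                    "detection": detection,
                    "config": scenario,
                }
    return rule_map


def identify(alert: dict, rule_map: dict) -> Optional[dict]:
    """Return the scenario context for the alert's rule ID, or None."""
    rule_id = str(alert.get("rule", {}).get("id", ""))
    context = rule_map.get(rule_id)
    return None if context is None else {**context, "alert": alert}


config = {"scenarios": {
    "geoip_detection": {"ad": {"rule_ids": []},
                        "signature": {"rule_ids": ["100900", "100901"]}},
    "log_volume": {"ad": {"rule_ids": ["100309"]},
                   "signature": {"rule_ids": []}},
}}
rule_map = build_rule_map(config)
match = identify({"rule": {"id": "100309"}}, rule_map)
```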

BaseScenario (strategy pattern)

    from abc import ABC, abstractmethod
    from typing import Tuple
    from datetime import datetime

    class BaseScenario(ABC):
        """Base class for scenario-specific behavior."""

        def get_time_window(self, alert: dict, config: dict) -> Tuple[datetime, datetime]:
            """
            Determine time window for context collection.

            Default Logic:
                - Parse alert timestamp (ISO 8601)
                - If AD-based: window = [timestamp - delta_ad_minutes, timestamp]
                  (default delta_ad_minutes: 10)
                - If signature-based: window = [timestamp - delta_signature_minutes, timestamp]
                  (default delta_signature_minutes: 1)

            Returns:
                (window_start, window_end) as datetime tuple

            Subclasses can override for custom logic.
            """
            pass

        def get_effective_agent(self, alert: dict, scenario_context: dict) -> str | None:
            """
            Determine agent filter for context queries.

            Default Logic:
                - AD-based: return None (query all agents for high-cardinality detection)
                - Signature-based: return alert.agent.name (scope to triggering agent)

            Subclasses can override for scenario-specific filtering.
            """
            pass

        def extract_ad_scores(self, alert: dict) -> Tuple[float, float]:
            """
            Extract anomaly grade and confidence from alert.

            Default Fields:
                - data.anomaly_grade (float)
                - data.confidence (float)

            Returns:
                (grade, confidence) tuple

            Subclasses override for scenario-specific field names.
            """
            pass

    class GeoIPScenario(BaseScenario):
        """Scenario handler for GeoIP detection (uses default behavior)."""
        pass

    class LogVolumeScenario(BaseScenario):
        """
        Scenario handler for log volume detection.

        Overrides:
            extract_ad_scores(): Handles alternate field names (grade, conf)
        """
        pass

WazuhApiClient

    from typing import List, Dict

    class WazuhApiClient:
        """Client for Wazuh Manager API interactions."""

        def __init__(self, url: str, user: str, password: str):
            """
            Initialize Wazuh API client.

            Args:
                url: Wazuh Manager API URL
                user: Authentication username
                password: Authentication password
            """
            pass

        def dispatch_active_response(
            self,
            agent_id: str,
            command: str,
            arguments: List[str]
        ) -> Dict:
            """
            Dispatch active response command to agent.

            Args:
                agent_id: Target agent ID (e.g., '001')
                command: Active response command name (e.g., 'firewall-drop')
                arguments: Command arguments (e.g., ['192.168.1.100', 'srcip', '3600'])

            Behavior:
                1. Authenticate if no token cached (POST /security/user/authenticate)
                2. Build payload with command, arguments, alert fields
                3. PUT /active-response with agents_list and wait_for_complete params
                4. Return API response JSON

            Returns:
                API response dict (includes execution status)
            """
            pass

Configuration-driven behavior

All scenario-specific parameters are externalized to ar.yaml:

- Rule ID mappings

- Time window deltas

- Risk calculation weights

- Tier thresholds

- Action enable flags

This allows operational tuning without code changes.

Error handling strategy

- Transient errors: Retry with exponential backoff (OpenSearch, CTI queries)

- Non-critical errors: Log and continue (email send failure doesn't block case creation)

- Critical errors: Abort pipeline, log detailed error, return exit code 2

- All errors: Written to audit log for post-incident analysis
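
The transient-error strategy can be illustrated with a small retry helper. This is a sketch under assumptions: the retry count, base delay, and delay cap are illustrative defaults, ConnectionError stands in for whatever the real clients raise on transient failures, and the sleep function is injectable so the behavior can be exercised without waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    retries: int = 3,
    base_delay: float = 0.5,
    max_delay: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call operation, retrying transient failures with doubling delays."""
    for attempt in range(retries + 1):
        try:
            return operation()
        except ConnectionError:
            if attempt == retries:
                raise  # retries exhausted: surface the error to the caller
            sleep(min(max_delay, base_delay * 2 ** attempt))
    raise RuntimeError("unreachable")  # keeps type checkers satisfied


calls = {"count": 0}
delays = []


def flaky_query() -> str:
    """Fail twice with a transient error, then succeed."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


outcome = retry_with_backoff(flaky_query, sleep=delays.append)
```

When retries are exhausted the exception propagates, which lets the pipeline treat it as a critical or non-critical error according to the stage that raised it.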

Performance characteristics

- Typical execution time: 100-500ms (depends on CTI query count)

- Memory footprint: < 50MB (mostly alert/event data)

- Suitable for: Synchronous Wazuh active response execution

Integration points

- Input: Wazuh alert JSON via stdin

- Output: Exit code (0/1/2), audit log to /var/ossec/logs/active-responses.log

- External APIs: Wazuh Manager, OpenSearch, SATRAP DECIPHER, SMTP

Data structures

Risk calculation formula

For anomaly detection alerts:

risk_score = (anomaly_grade × w_grade) + (confidence × w_confidence) + (cti_score × w_cti)

For signature-based alerts:

risk_score = (rule_level × w_level) + (cti_score × w_cti)

Weights and tier thresholds defined per scenario in ar.yaml.

Action planning logic

    IF tier == 0 THEN
        actions = []
    ELSEIF tier == 1 THEN
        actions = [send_email, decipher_incident]
    ELSEIF tier == 2 THEN
        actions = [send_email, decipher_incident] + (mitigations_tier2 if allow_mitigation)
    ELSE  # tier == 3
        actions = [send_email, decipher_incident] + (mitigations_tier3 if allow_mitigation)

DECIPHER incident creation runs before action planning (in run()), gated on tier >= 1 and health check.
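
The tier-to-action mapping can be expressed as a small Python function. This is a sketch, not the code in radar_ar.py: the function name plan_actions is hypothetical, and the scenario dictionary is assumed to carry allow_mitigation, mitigations_tier2, and mitigations_tier3 as top-level keys, as in the ar.yaml examples.

```python
def plan_actions(tier: int, scenario_config: dict) -> list:
    """Select actions for a tier, adding mitigations only when allowed."""
    if tier <= 0:
        return []
    actions = ["send_email", "decipher_incident"]
    if tier >= 2 and scenario_config.get("allow_mitigation", False):
        key = "mitigations_tier2" if tier == 2 else "mitigations_tier3"
        actions += scenario_config.get(key, [])
    return actions


log_volume = {
    "allow_mitigation": True,
    "mitigations_tier2": [],
    "mitigations_tier3": ["terminate_service.sh"],
}
tier3_actions = plan_actions(3, log_volume)
```

Setting allow_mitigation to false leaves notification and case creation in place while suppressing every mitigation.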

Audit log schema

    {
      "timestamp": "ISO8601",
      "decision_id": "SHA256[:16]",
      "scenario": "scenario_name",
      "detection_type": "ad|signature",
      "risk_score": 0.0,
      "tier": 1,
      "actions_planned": ["action1", "action2"],
      "actions_executed": {"action1": {"status": "success|failure", "details": {}}},
      "context": {"window_start": "ISO8601", "window_end": "ISO8601", "event_count": 0},
      "cti": {"iocs_queried": 0, "malicious_count": 0},
      "alert": {"id": "", "rule": {"id": "", "description": ""}}
    }

Implementation

This specification describes the design and interfaces for the RADAR active response system. The actual implementation can be found in:

- Main Script: radar/scenarios/active_responses/radar_ar.py

- Configuration: radar/scenarios/active_responses/ar.yaml

- User Documentation: docs/manual/radar_docs/radar-active-response.md

Refer to these files for complete implementation details, including:

- Full method implementations with error handling

- Configuration file structure and examples

- CTI integration logic

- Email templating

- Case management integration

Parent links: LARC-026 RADAR active response decision pipeline

2.7 RADAR detector module design SWD-028

This document specifies the software design for the detector creation module (detector.py), which interfaces with the OpenSearch Anomaly Detection plugin to create and manage RCF-based anomaly detectors.

Module overview

The detector module provides functions to:

- Search for existing detectors by name (idempotent operation)

- Build detector specifications from scenario configurations

- Create detectors via OpenSearch AD API

- Start detectors to begin anomaly analysis

Function specifications

find_detector_id

    def find_detector_id(detector_name: str, os_client: OpenSearchClient) -> str | None:
        """Search for existing detector by name in .opendistro-anomaly-detectors index.

        Args:
            detector_name: Detector name (e.g., "log_volume_DETECTOR")
            os_client: OpenSearch client instance

        Returns:
            Detector ID if found, None otherwise
        """
        pass

detector_spec

    def detector_spec(scenario_config: Dict, scenario_name: str) -> Dict:
        """Build OpenSearch AD detector specification from scenario configuration.

        Args:
            scenario_config: Scenario configuration from config.yaml
            scenario_name: Name of the scenario

        Returns:
            Detector specification dictionary with required and optional fields
        """
        pass

create_detector

    def create_detector(spec: Dict, os_client: OpenSearchClient) -> str:
        """Create anomaly detector via OpenSearch AD plugin API.

        Args:
            spec: Detector specification dictionary
            os_client: OpenSearch client instance

        Returns:
            Detector ID of created detector
        """
        pass

start_detector

    def start_detector(detector_id: str, os_client: OpenSearchClient) -> None:
        """Start anomaly detector to begin analysis.

        Args:
            detector_id: ID of detector to start
            os_client: OpenSearch client instance
        """
        pass

Main orchestration

Entry point: main() - Orchestrates detector creation pipeline.

Pipeline stages:

- Validate CLI arguments (requires scenario name)

- Load scenario configuration from config.yaml

- Initialize OpenSearch client from environment variables

- Search for existing detector (idempotent check)

- Create detector if not found

- Start detector

- Output detector ID to stdout

Environment variables: OS_URL, OS_USER, OS_PASS, OS_VERIFY_SSL

Exit codes: 0 (success), 1 (error)
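
The idempotent core of the pipeline (search, create if missing, start) can be sketched with injected callables. The ensure_detector name and its signature are assumptions for illustration; in detector.py these steps are performed by find_detector_id, create_detector, and start_detector against a live OpenSearch client.

```python
from typing import Callable, Optional


def ensure_detector(
    name: str,
    spec: dict,
    find: Callable[[str], Optional[str]],
    create: Callable[[dict], str],
    start: Callable[[str], None],
) -> str:
    """Reuse an existing detector if present, otherwise create it, then start it."""
    detector_id = find(name)
    if detector_id is None:
        detector_id = create(spec)
    start(detector_id)
    return detector_id


# In-memory fakes standing in for the OpenSearch AD API.
store = {}
created = []
started = []


def fake_create(spec: dict) -> str:
    created.append(spec["name"])
    store[spec["name"]] = f"det-{len(created)}"
    return store[spec["name"]]


spec = {"name": "log_volume_DETECTOR"}
first = ensure_detector(spec["name"], spec, store.get, fake_create, started.append)
second = ensure_detector(spec["name"], spec, store.get, fake_create, started.append)
```

Running the function twice creates the detector only once, which is the property the idempotent check is meant to guarantee.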

Configuration schema

Scenario configuration parameters used by detector_spec():

Required: features (list of feature definitions with aggregation queries)

Optional: index_prefix, time_field, detector_interval, delay_minutes, categorical_field, shingle_size, result_index

Defaults: time_field="@timestamp", detector_interval=5, delay_minutes=1, shingle_size=8

See radar/config.yaml for complete examples.

OpenSearch AD API endpoints

Detector creation: POST /_plugins/_anomaly_detection/detectors

- Required fields: name, time_field, indices, feature_attributes, detection_interval

- Optional fields: category_field, shingle_size, result_index, window_delay

- Returns: {"_id": "detector_id", ...}

Detector start: POST /_plugins/_anomaly_detection/detectors/{id}/_start

- No request body

- Returns: {"_id": "detector_id", ...} on success

See OpenSearch AD plugin documentation for complete API schema.

Error handling

- Detector already exists: find_detector_id returns existing ID, creation skipped

- Invalid configuration: Validation raises ConfigException before API call

- API errors: OpenSearchException raised with error details

- Network errors: Retry with exponential backoff (3 retries, max 10s delay)

Integration

Executed in run-radar.sh pipeline to create and start detector. Outputs detector ID to stdout for use by monitor.py.

Implementation

This specification describes the design and interfaces for the RADAR detector creation module. See these files for complete implementation:

- radar/anomaly_detector/detector.py - Full implementation

- radar/config.yaml - Configuration examples

- docs/manual/radar_docs/radar-run-ad.md - User documentation

- Logging and debugging output

Parent links: LARC-022 RADAR detector creation workflow

2.8 RADAR monitor and webhook module design SWD-029

This document specifies the detailed design of the monitor and webhook Python modules used in RADAR's anomaly detection workflow. These modules create and configure OpenSearch monitors and webhook notification channels.

Module overview

The RADAR anomaly detector subsystem consists of two primary modules:

- webhook.py: Manages OpenSearch notification channel destinations for webhook endpoints

- monitor.py: Creates OpenSearch alerting monitors that evaluate detector results and trigger webhook notifications

webhook.py class structure

Key functions

| Function | Purpose | Returns |
|---|---|---|
| notif_list() | Query all notification configurations | List of notification configs |
| notif_find_id(name, url) | Search for existing webhook by name and URL | Webhook destination ID or None |
| notif_create(name, url, description) | Create new webhook destination | Webhook destination ID |
| ensure_webhook(name, url, description) | Idempotent webhook creation (find or create) | Webhook destination ID |
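The find-or-create behavior of ensure_webhook() can be sketched as below. To keep the example self-contained, the lookup and creation steps are passed in as callables; that injection is an assumption of this sketch, whereas the module's own function is documented above as taking only name, url, and description.

```python
from typing import Callable, Optional


def ensure_webhook(
    name: str,
    url: str,
    description: str,
    find_id: Callable[[str, str], Optional[str]],
    create: Callable[[str, str, str], str],
) -> str:
    """Return the ID of an existing webhook, creating one only if needed."""
    existing = find_id(name, url)
    if existing is not None:
        return existing  # already registered: no second destination is created
    return create(name, url, description)
```

Calling the function twice with the same name and URL yields the same destination ID, which is what allows the pipeline to be re-run safely.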

monitor.py class structure

Monitor creation sequence

Monitor payload structure

The monitor payload includes:

Schedule: Evaluation frequency

- Default: Uses detector_interval from scenario config

- Override: monitor_interval if specified

- Unit: MINUTES

Search Input: Query against detector result index

- Index pattern: {result_index} from scenario

- Time range: Last N minutes (where N = interval)

- Filter: detector_id matches created detector

- Aggregation: max(anomaly_grade)

Trigger Condition (Painless script):

return ctx.results != null &&

ctx.results.length > 0 &&

ctx.results[0].aggregations.max_anomaly_grade.value > {grade_threshold} &&

ctx.results[0].hits.hits[0]._source.confidence > {confidence_threshold}
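A minimal sketch of how the two thresholds could be substituted into the trigger condition above is shown here. The function name build_trigger_script and the range check are assumptions for illustration; the script text mirrors the condition in this section.

```python
def build_trigger_script(grade_threshold: float = 0.2,
                         confidence_threshold: float = 0.2) -> str:
    """Render the Painless trigger condition with concrete thresholds."""
    for label, value in (("anomaly grade", grade_threshold),
                         ("confidence", confidence_threshold)):
        # Both values are documented as lying in the range 0-1.
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{label} threshold must be within [0, 1]: {value}")
    return (
        "return ctx.results != null && "
        "ctx.results.length > 0 && "
        f"ctx.results[0].aggregations.max_anomaly_grade.value > {grade_threshold} && "
        f"ctx.results[0].hits.hits[0]._source.confidence > {confidence_threshold}"
    )
```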

Webhook Action Message:

{

"monitor": {"name": "{{ctx.monitor.name}}"},

"trigger": {"name": "{{ctx.trigger.name}}"},

"entity": "{{ctx.results.0.hits.hits.0._source.entity.0.value}}",

"periodStart": "{{ctx.periodStart}}",

"periodEnd": "{{ctx.periodEnd}}",

"anomaly_grade": "{{ctx.results.0.hits.hits.0._source.anomaly_grade}}",

"anomaly_confidence": "{{ctx.results.0.hits.hits.0._source.confidence}}"

}

Configuration parameters

From config.yaml scenario section:

| Parameter | Default | Description |
|---|---|---|
| monitor_interval | detector_interval | Monitor evaluation frequency (minutes) |
| detector_interval | 5 | Detector run interval (minutes) |
| anomaly_grade_threshold | 0.2 | Minimum anomaly grade to trigger (0-1) |
| confidence_threshold | 0.2 | Minimum confidence to trigger (0-1) |
| result_index | opensearch-ad-plugin-result-{scenario} | Detector result index pattern |
| monitor_name | {scenario}-monitor | Monitor name |
| trigger_name | {scenario}-trigger | Trigger name |

From .env file:

| Variable | Required | Description |
|---|---|---|
| OS_URL | Yes | OpenSearch endpoint URL |
| OS_USER | No | OpenSearch username (if auth enabled) |
| OS_PASS | No | OpenSearch password |
| OS_VERIFY_SSL | No | SSL certificate verification (default: true) |
| WEBHOOK_NAME | No | Webhook destination name (default: "RADAR Webhook") |
| WEBHOOK_URL | Yes | Webhook endpoint URL (e.g., http://manager:8080/notify) |

Error handling

Both modules implement robust error handling:

- Connection errors: HTTP status validation with detailed error messages

- Missing configuration: Explicit validation of required environment variables

- API failures: Graceful exit with status codes and response text

- Idempotency: find_monitor_id() and notif_find_id() check for existing resources

Integration with RADAR workflow

- run-radar.sh executes detector.py to create OpenSearch AD detector

- Detector ID is passed to monitor.py as command-line argument

- monitor.py calls webhook.ensure_webhook() to get/create notification channel

- monitor.py builds monitor payload with detector ID and webhook destination ID

- Monitor is created and begins evaluating detector results at configured intervals

- When anomalies exceed thresholds, monitor triggers webhook POST to configured endpoint

Parent links: LARC-023 RADAR monitor and webhook workflow

2.9 RADAR helper module class design SWD-030

This document specifies the detailed class design of the RADAR Helper Python daemon that provides real-time log enrichment for geographic and behavioral anomaly detection on Wazuh agents.

Module overview

The RADAR Helper (radar-helper.py) is a multi-threaded Python daemon service that:

- Monitors authentication logs (SSH) and Apache HTTP access logs in real-time

- Enriches log events with geographic metadata (country, region, city, coordinates)

- Calculates behavioral indicators for SSH authentication (geographic velocity, ASN novelty, country changes)

- Writes enriched logs for Wazuh agent ingestion

Class hierarchy

Module-level elements

Constants

| Constant | Value | Description |
|---|---|---|
| DEBUG_LOG | /var/log/radar-helper-debug.log | Path for the shared debug log file |

Globals

| Name | Type | Description |
|---|---|---|
| RADAR_LOG | RadarLogger | Module-level singleton providing the shared debug logger and output logger factory |

Helper functions

parse_event_ts()

Parses a timestamp string from a log line header into a Unix epoch float. Supports two formats:

- ISO 8601 (e.g., 2024-01-15T10:30:00+01:00): parsed via datetime.fromisoformat()

- Syslog (e.g., Jan 15 10:30:00): parsed using a regex and current system year

Falls back to time.time() if the string matches neither format.

parse_event_ts(ts_str: str) -> float

This function is used by AuthLogWatcher.handle_line() to ensure that behavioral indicators such as geographic velocity are computed against the actual event time recorded in the log, rather than the processing time.
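The parsing order described above (ISO 8601 first, then syslog, then the wall-clock fallback) could look roughly like the sketch below. The regular expression and the month table are assumptions written for this example; the regex used in radar-helper.py may differ.

```python
import re
import time
from datetime import datetime

# Assumed syslog header layout: "Jan 15 10:30:00" (no year, local time).
_RX_SYSLOG = re.compile(r"^([A-Z][a-z]{2})\s+(\d{1,2})\s+(\d{2}):(\d{2}):(\d{2})$")
_MONTHS = {name: num for num, name in enumerate(
    ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
     "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"], start=1)}


def parse_event_ts(ts_str: str) -> float:
    """Return the event time as Unix epoch seconds."""
    ts_str = ts_str.strip()
    try:
        # ISO 8601, e.g. 2024-01-15T10:30:00+01:00
        return datetime.fromisoformat(ts_str).timestamp()
    except ValueError:
        pass
    m = _RX_SYSLOG.match(ts_str)
    if m and m.group(1) in _MONTHS:
        mon, day, hh, mm, ss = m.groups()
        try:
            # Syslog headers carry no year, so the current system year is used.
            return datetime(datetime.now().year, _MONTHS[mon], int(day),
                            int(hh), int(mm), int(ss)).timestamp()
        except ValueError:
            pass  # e.g. "Feb 31": fall through to the fallback
    return time.time()  # neither format matched
```

Because syslog timestamps omit the year, entries replayed across a year boundary would be assigned to the current year under this approach.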

RadarLogger class

Encapsulates all logger configuration and acts as a factory for output loggers. A module-level singleton (RADAR_LOG) is created at import time.

Attributes

| Attribute | Type | Description |
|---|---|---|
| debug_path | str | Path to the debug log file |
| _debug_logger | logging.Logger | Configured WatchedFileHandler-based debug logger |

Methods

| Method | Parameters | Returns | Description |
|---|---|---|---|
| __init__() | debug_path | - | Initialize and build the debug logger |
| _build_debug_logger() | - | Logger | Create a WatchedFileHandler logger at DEBUG level with timestamp formatting |
| build_out_logger() (static) | name, out_path | Logger | Create an INFO-level WatchedFileHandler logger writing plain messages |
| debug (property) | - | Logger | Returns _debug_logger |

Design notes

build_out_logger() is a static method so that BaseLogWatcher subclasses can call it without needing an instance reference, while still having the logger setup logic centralized in one place. Both _build_debug_logger() and build_out_logger() are idempotent: if a handler for the target path already exists on the logger, a second handler is not added.

BaseLogWatcher class

Abstract base class providing tail-style log monitoring with log rotation handling. Extends threading.Thread.

Attributes

| Attribute | Type | Description |
|---|---|---|
| in_path | str | Input log file path to monitor |
| out_path | str | Output log file path for enriched logs |
| logger | logging.Logger | Output logger, obtained from RadarLogger.build_out_logger() |
| debug | logging.Logger | Debug logger, obtained from radar_logger.debug |
| _stop_evt | threading.Event | Thread stop signal |

Constructor

__init__(in_path, out_path, logger_name, radar_logger: RadarLogger)

The constructor delegates logger creation to RadarLogger: the output logger is obtained via radar_logger.build_out_logger(logger_name, out_path) and the debug logger via radar_logger.debug.

Methods

| Method | Parameters | Returns | Description |
|---|---|---|---|
| stop() | - | void | Set _stop_evt to signal graceful thread termination |
| tail_follow() | - | Iterator[str] | Generator yielding new log lines with inode-based rotation detection |
| run() | - | void | Main thread loop: iterates tail_follow() and calls handle_line() per line |
| handle_line() | line | void | Abstract: process a single log line; must be overridden in subclasses |

Key features

Inode rotation detection:

    st = os.stat(self.in_path)
    if st.st_ino != ino:
        # File was rotated, reopen
        nf = open(self.in_path, "r")
        f.close()
        f = nf
        ino = os.fstat(f.fileno()).st_ino

Graceful shutdown: The thread runs as a daemon. The stop event allows clean termination and file handles are closed on exit.
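For context, the rotation check shown above can be embedded in a complete tail-style generator along the following lines. This is a standalone sketch under stated assumptions, not the method from BaseLogWatcher: it is written as a free function taking the path and stop event as arguments, it starts reading at the end of the file, and it uses a 0.2 s poll interval.

```python
import os
import threading
import time
from typing import Iterator


def tail_follow(path: str, stop_evt: threading.Event,
                poll_s: float = 0.2) -> Iterator[str]:
    """Yield lines appended to `path`, reopening it when the inode changes."""
    f = open(path, "r")
    f.seek(0, os.SEEK_END)  # start at the end of the file, like tail -F
    ino = os.fstat(f.fileno()).st_ino
    try:
        while not stop_evt.is_set():
            line = f.readline()
            if line:
                yield line.rstrip("\n")
                continue
            # No new data: check whether the path now points at a new file.
            try:
                if os.stat(path).st_ino != ino:
                    nf = open(path, "r")
                    f.close()
                    f = nf
                    ino = os.fstat(f.fileno()).st_ino
                    continue
            except FileNotFoundError:
                pass  # mid-rotation: retry after the poll interval
            time.sleep(poll_s)
    finally:
        f.close()  # the file handle is released on shutdown
```

The sketch does not buffer partially written lines; a production watcher may want to hold back a line until its trailing newline arrives.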

AuthLogWatcher class

Concrete implementation monitoring SSH authentication logs with geographic enrichment.

Class attributes

| Attribute | Type | Description |
|---|---|---|
| AUTH_LOG | str | /var/log/auth.log (default input log path) |
| OUT_AUTH_LOG | str | /var/log/suspicious_login.log (default output log path) |
| CITY_DB | str | /usr/share/GeoIP/GeoLite2-City.mmdb |
| ASN_DB | str | /usr/share/GeoIP/GeoLite2-ASN.mmdb |
| WIN_90D_SEC | int | 7,776,000: ASN history window in seconds (90 days) |
| DT_EPS_H | float | 1e-9: minimum time delta in hours to prevent division by zero in velocity calculation |
| RX_HEAD | re.Pattern | Compiled regex matching syslog and ISO 8601 log line headers |
| RX_ACCEPT | re.Pattern | Compiled regex matching SSH Accepted authentication lines |
| RX_FAILED | re.Pattern | Compiled regex matching SSH Failed authentication lines |

Instance attributes

| Attribute | Type | Description |
|---|---|---|
| city_reader | maxminddb.Reader | MaxMind GeoLite2-City database reader |
| asn_reader | maxminddb.Reader | MaxMind GeoLite2-ASN database reader |
| users | Dict[str, UserState] | Per-user state tracking (defaultdict) |

Constructor

__init__(radar_logger: RadarLogger, in_path: str = None, out_path: str = None)

in_path and out_path default to AUTH_LOG and OUT_AUTH_LOG class attributes respectively if not provided. Raises SystemExit with a fatal message if either MaxMind database file is missing.

Methods

| Method | Parameters | Returns | Description |
|---|---|---|---|
| drop_old() | us: UserState, now_sec: int | void | Remove ASN history entries older than 90 days and prune asn_set accordingly |
| geo_lookup() | ip: str | Tuple | Query MaxMind DBs for (country, region, city, lat, lon, asn) |
| haversine_km() (static) | lat1, lon1, lat2, lon2 | float | Great-circle distance in km between two coordinates |
| classify_outcome() (static) | msg_part: str | str | Return "success", "failure", or "info" based on message content |
| handle_line() | line: str | void | Parse SSH log, enrich with geo data, write to output |

Parsing logic

Extraction workflow:

- Match log line header with RX_HEAD to extract timestamp, hostname, program (sshd/sudo), and message content

- Parse the timestamp field using parse_event_ts() to obtain the event epoch time

- Search message part for authentication patterns using RX_ACCEPT or RX_FAILED

- Extract username, source IP address, and port number

- Classify outcome as "success", "failure", or "info" based on message content

Enrichment calculations

The AuthLogWatcher enriches authentication events with three behavioral indicators:

Geographic velocity (km/h):

$$v_{geo} = \frac{d_{haversine}(\phi_1, \lambda_1, \phi_2, \lambda_2)}{\max(|\Delta t_{hours}|,\; \varepsilon)}$$

where:

- $d_{haversine}$ is the great-circle distance between the previous and current login coordinates

- $\Delta t_{hours}$ is the signed time difference (event timestamps) between logins in hours

- $\varepsilon = 10^{-9}$ hours (DT_EPS_H) guards against division by zero when events are near-simultaneous

Note: Unlike the previous version, velocity is no longer capped at a fixed maximum. The DT_EPS_H guard replaces the max(..., 1e-6) approach and the VEL_CAP_KMH constant has been removed. Time deltas now use parse_event_ts() on the log's recorded timestamp rather than time.time(), yielding more accurate velocity estimates for replayed or delayed logs.

Country change indicator:

$$I_{country} = \begin{cases} 1 & \text{if } country_{current} \neq country_{previous} \\ 0 & \text{otherwise} \end{cases}$$

ASN novelty indicator:

$$I_{asn} = \begin{cases} 1 & \text{if } ASN_{current} \notin ASN_{history} \\ 0 & \text{otherwise} \end{cases}$$

where $ASN_{history}$ contains unique ASNs from the last 90 days.
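The three indicators can be expressed compactly in code. The sketch below takes an already computed distance in kilometers as input; the function names are hypothetical, and the handling of a user's first login (no previous country, so no change is reported) is an assumption that the formulas above do not specify.

```python
from typing import Optional, Set

DT_EPS_H = 1e-9  # minimum time delta in hours, as defined for AuthLogWatcher


def geo_velocity_kmh(distance_km: float, prev_ts: float, cur_ts: float) -> float:
    """Distance divided by the absolute time delta in hours, guarded by DT_EPS_H."""
    dt_hours = abs(cur_ts - prev_ts) / 3600.0
    return distance_km / max(dt_hours, DT_EPS_H)


def country_change(prev: Optional[str], cur: Optional[str]) -> int:
    """1 if the country differs from the previous login, else 0."""
    # Assumption: without a previous (or current) country there is no change.
    return int(prev is not None and cur is not None and cur != prev)


def asn_novelty(asn: str, asn_history: Set[str]) -> int:
    """1 if the ASN was not seen within the history window, else 0."""
    return int(asn not in asn_history)
```

For example, two logins 900 km apart with event timestamps two hours apart give a velocity of 450 km/h.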

Output format

Enriched log line appended with RADAR fields:

<original_log_line> RADAR outcome='success' asn='15169' asn_placeholder_flag='false'

country='US' region='California' city='San Francisco' geo_velocity_kmh='450.230'

country_change_i='1' asn_novelty_i='0'

ApacheLogWatcher class

Concrete implementation monitoring Apache HTTP access logs with geographic enrichment. Filters private IP addresses and supports multiple Apache log sources.

Class attributes

| Attribute | Type | Description |
|---|---|---|
| APACHE_LOGS | List[str] | Default Apache log paths: /var/log/apache2/access.log, /var/log/apache2/other_vhosts_access.log |
| OUT_APACHE_LOG | str | /var/log/suspicious_login.log (default output log path) |
| CITY_DB | str | /usr/share/GeoIP/GeoLite2-City.mmdb |
| RX_RSYSLOG | re.Pattern | Regex matching rsyslog-formatted lines: <weekday> <date> <time> <host> nginx\|apache: <rest> |
| RX_DOMAIN_IP | re.Pattern | Regex for domain-based Apache log format: domain.tld <srcip> ... |
| RX_HOST_PORT_IP | re.Pattern | Regex for host:port format: host:port <srcip> ... |
| RX_TWO_IPS | re.Pattern | Regex for two-IP format: <something> <srcip> ... |
| RX_SINGLE_IP | re.Pattern | Regex for single IP format: <srcip> ... |
| RX_IPV6_MAPPED | re.Pattern | Regex for IPv6-mapped IPv4: ::ffff:<srcip> ... |
| PRIVATE_PREFIXES | Tuple[str, ...] | Private IP address ranges to filter (RFC 1918 and loopback) |

Instance attributes

| Attribute | Type | Description |
|---|---|---|
| city_reader | maxminddb.Reader | MaxMind GeoLite2-City database reader |

Constructor

__init__(radar_logger: RadarLogger, in_path: str = None, out_path: str = None)

If in_path is not provided, APACHE_LOGS list is used (multiple sources). Raises SystemExit with a fatal message if the MaxMind City database file is missing.

Methods

| Method | Parameters | Returns | Description |
|---|---|---|---|
| extract_srcip() | line: str | Tuple[Optional[str], str] | Extract source IP from various Apache log formats; returns (ip, normalized_line) |
| geo_lookup() | ip: str | Tuple | Query MaxMind DB for (country, region, city, lat, lon) |
| handle_line() | line: str | void | Parse Apache log, filter private IPs, enrich with geo data, write to output |

Parsing logic

IP extraction workflow:

- Strip rsyslog prefix if present using RX_RSYSLOG

- Try to extract source IP using a cascade of regex patterns:

  - IPv6-mapped IPv4 (RX_IPV6_MAPPED)

  - Host:port format (RX_HOST_PORT_IP)

  - Domain.tld format (RX_DOMAIN_IP)

  - Two-IP format (RX_TWO_IPS)

  - Single IP format (RX_SINGLE_IP)

- Return the first match or (None, line) if no pattern matches
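The first-match-wins cascade, together with the private-address check applied afterwards, can be sketched as below. The three patterns are simplified stand-ins written for this example and do not reproduce the RX_* expressions of ApacheLogWatcher; the sketch also returns the line unchanged rather than a normalized form. The address check uses the standard-library ipaddress module instead of the prefix matching implied by PRIVATE_PREFIXES, which is a substitution made for brevity.

```python
import ipaddress
import re
from typing import Optional, Tuple

_IP = r"(\d{1,3}(?:\.\d{1,3}){3})"
# Illustrative patterns only, tried in order.
CASCADE = [
    re.compile(r"^::ffff:" + _IP + r"\s"),     # IPv6-mapped IPv4
    re.compile(r"^\S+:\d+\s+" + _IP + r"\s"),  # host:port <srcip> ...
    re.compile(r"^" + _IP + r"\s"),            # <srcip> ...
]


def extract_srcip(line: str) -> Tuple[Optional[str], str]:
    """Return (ip, line) for the first matching pattern, else (None, line)."""
    for rx in CASCADE:
        m = rx.match(line)
        if m:
            return m.group(1), line
    return None, line


def is_public(ip: str) -> bool:
    """False for private (including RFC 1918), loopback, and link-local addresses."""
    addr = ipaddress.ip_address(ip)
    return not (addr.is_private or addr.is_loopback or addr.is_link_local)
```

Ordering the patterns from most to least specific matters: a bare single-IP pattern placed first would shadow the more structured formats.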

Filtering:

- Lines without double-quote (") or HTTP/ string are skipped (not valid Apache combined log format)

- Private IP addresses (RFC 1918, loopback, link-local ranges) are filtered and not logged

Output format

Enriched log line appended with RADAR fields:

<original_log_line> RADAR country="US" region="California" city="San Francisco"

lat="37.77" lon="-122.42"

AuditLogWatcher class

Stub implementation for audit log monitoring. Extends BaseLogWatcher.

Class attributes

| Attribute | Type | Description |
|---|---|---|
| AUDIT_LOG | str | /var/log/audit/audit.log (default input log path) |
| OUT_AUDIT_LOG | str | /var/log/audit_volume.log (default output log path) |

Constructor

__init__(radar_logger: RadarLogger, in_path: str = None, out_path: str = None)

Defaults in_path and out_path to class attributes.

Methods

| Method | Parameters | Returns | Description |
|---|---|---|---|
| handle_line() | line: str | void | No-op stub; audit log processing not yet implemented |

Note: AuditLogWatcher is defined for future use. The current main() function instantiates AuthLogWatcher and one ApacheLogWatcher per log path in ApacheLogWatcher.APACHE_LOGS.

UserState class

Lightweight state container tracking per-user authentication history.

Attributes (slots)

| Attribute | Type | Description |
|---|---|---|
| last_ts | Optional[float] | Timestamp of last login (Unix epoch seconds, from parse_event_ts()) |
| last_lat | Optional[float] | Latitude of last login location |
| last_lon | Optional[float] | Longitude of last login location |
| last_country | Optional[str] | ISO 3166-1 alpha-2 country code of last login |
| asn_hist | deque[Tuple[str, int]] | ASN history with timestamps (90-day window) |
| asn_set | Set[str] | Set of unique ASNs seen in last 90 days |

Usage pattern

UserState instances are automatically created per user using defaultdict(UserState). Each authentication event updates the user's state with:

- Current event timestamp (parsed from log line), latitude, longitude, and country code

- New ASN appended to history with the event timestamp as integer seconds

- ASN added to the unique ASN set

History pruning

ASN history entries older than 90 days (WIN_90D_SEC) are pruned to keep memory bounded. When removing old entries, the ASN is also removed from asn_set if no other entries in the remaining history reference it.
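The pruning rule can be illustrated with a deque and a set. The sketch below is a free function operating directly on the two containers rather than on a UserState instance, and it assumes that history entries are appended in non-decreasing timestamp order so that the oldest entries sit at the left end; both points are assumptions of this example.

```python
from collections import deque
from typing import Deque, Set, Tuple

WIN_90D_SEC = 90 * 24 * 3600  # 7,776,000 seconds


def drop_old(asn_hist: Deque[Tuple[str, int]], asn_set: Set[str],
             now_sec: int) -> None:
    """Drop history entries older than the window and resync asn_set."""
    cutoff = now_sec - WIN_90D_SEC
    removed = set()
    # Oldest entries are on the left; stop at the first one inside the window.
    while asn_hist and asn_hist[0][1] < cutoff:
        asn, _ = asn_hist.popleft()
        removed.add(asn)
    if removed:
        # An ASN leaves the set only if no remaining entry still references it.
        still_seen = {asn for asn, _ in asn_hist}
        asn_set.difference_update(removed - still_seen)
```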

Helper functions

haversine_km()

Now a static method of AuthLogWatcher (previously a module-level function). Calculates the great-circle distance between two geographic coordinates using the haversine formula:

$$d = 2R \arcsin\left(\sqrt{\sin^2\left(\frac{\phi_2 - \phi_1}{2}\right) + \cos(\phi_1) \cos(\phi_2) \sin^2\left(\frac{\lambda_2 - \lambda_1}{2}\right)}\right)$$

where:

- $R = 6371.0088$ km (Earth's mean radius)

- $\phi_1, \phi_2$ are latitudes in radians

- $\lambda_1, \lambda_2$ are longitudes in radians

Returns: Distance in kilometers
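A direct transcription of the formula into Python is shown below as a standalone function (in the module it is documented as a static method). Accepting coordinates in degrees and clamping the intermediate value to 1.0 to absorb floating-point rounding are assumptions of this sketch.

```python
import math

EARTH_RADIUS_KM = 6371.0088  # Earth's mean radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in km between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    # Clamp to 1.0 so rounding error cannot push sqrt(a) outside asin's domain.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, a)))
```

One degree of longitude along the equator comes out at roughly 111.2 km, which is a convenient sanity check.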

Sequence diagram: Authentication event processing

Configuration and deployment

Systemd service

The RADAR Helper runs as a systemd service:

Service file: /etc/systemd/system/radar-helper.service

[Unit]

Description=RADAR Helper - Real-time log enrichment service

After=network.target

[Service]

Type=simple

ExecStart=/opt/radar/radar-helper.py

Restart=on-failure

RestartSec=5s

User=root

[Install]

WantedBy=multi-user.target

File paths

| Constant / Attribute | Path | Description |
|---|---|---|
| DEBUG_LOG (module) | /var/log/radar-helper-debug.log | Shared debug log written by all watchers |
| AuthLogWatcher.AUTH_LOG | /var/log/auth.log | Input: SSH authentication log |
| AuthLogWatcher.OUT_AUTH_LOG | /var/log/suspicious_login.log | Output: enriched authentication log |
| AuthLogWatcher.CITY_DB | /usr/share/GeoIP/GeoLite2-City.mmdb | MaxMind GeoLite2 City database |
| AuthLogWatcher.ASN_DB | /usr/share/GeoIP/GeoLite2-ASN.mmdb | MaxMind GeoLite2 ASN database |
| ApacheLogWatcher.APACHE_LOGS[0] | /var/log/apache2/access.log | Input: Apache standard access log |
| ApacheLogWatcher.APACHE_LOGS[1] | /var/log/apache2/other_vhosts_access.log | Input: Apache other virtual hosts access log |
| ApacheLogWatcher.OUT_APACHE_LOG | /var/log/suspicious_login.log | Output: enriched Apache log |
| ApacheLogWatcher.CITY_DB | /usr/share/GeoIP/GeoLite2-City.mmdb | MaxMind GeoLite2 City database |
| AuditLogWatcher.AUDIT_LOG | /var/log/audit/audit.log | Input: audit log (stub) |
| AuditLogWatcher.OUT_AUDIT_LOG | /var/log/audit_volume.log | Output: audit log (stub) |

Constants

| Constant | Location | Value | Description |
|---|---|---|---|
| WIN_90D_SEC | AuthLogWatcher (class) | 7,776,000 | ASN history window (90 days in seconds) |
| DT_EPS_H | AuthLogWatcher (class) | 1e-9 | Minimum time delta (hours) for velocity calculation |

Error handling

- Missing MaxMind databases: Fatal error with SystemExit

- File not found during rotation: Temporary; retried after 0.2 s

- GeoIP lookup failures: Logged; fields left empty

- Parse failures: Logged; line skipped

- Thread exceptions: Logged with full traceback; thread continues

- ISO 8601 / syslog timestamp parse failures: parse_event_ts() falls back to time.time()

Main entry point

The main() function orchestrates watcher thread initialization and lifecycle management:

    def main():
        # One watcher for the auth log, one per Apache log path.
        auth_watcher = AuthLogWatcher(RADAR_LOG)
        apache_watchers = [
            ApacheLogWatcher(RADAR_LOG, in_path=p)
            for p in ApacheLogWatcher.APACHE_LOGS
        ]

        auth_watcher.start()
        for w in apache_watchers:
            w.start()

        try:
            # Keep the main thread alive while the watchers run.
            while True:
                time.sleep(1.0)
        except KeyboardInterrupt:
            pass
        finally:
            # Signal every watcher to stop, then wait for each to finish.
            auth_watcher.stop()
            for w in apache_watchers:
                w.stop()
            auth_watcher.join(timeout=5.0)
            for w in apache_watchers:
                w.join(timeout=5.0)

Initialization

- Create one AuthLogWatcher instance

- Create one ApacheLogWatcher instance per log path in ApacheLogWatcher.APACHE_LOGS

- Start all watcher threads in daemon mode

Shutdown sequence

- Catch KeyboardInterrupt (Ctrl+C) or other termination signal

- Call stop() on all watcher threads to set the stop event

- Join all threads with a 5-second timeout per thread

- Exit cleanly

Threading model

- main() creates an AuthLogWatcher instance and multiple ApacheLogWatcher instances (one per Apache log), passing the shared RADAR_LOG singleton

- Each watcher runs in a separate daemon thread

- Threads monitor logs independently with tail -F semantics

- Graceful shutdown via stop() and Event signaling

- Threads joined with 5-second timeout on exit

Implementation

See radar/radar-helper/radar-helper.py for complete implementation.

Parent links: LARC-025 RADAR helper enrichment pipeline

2.10 RADAR simulation framework software design SWD-039

This document specifies the design of the RADAR scenario simulation framework, covering component structure, dispatch logic, the Ansible playbook, the shared utility library, and all scenario-specific simulation scripts.

Purpose

The simulation generates agent-realistic artefacts for validating RADAR detection scenarios end-to-end. It operates exclusively at the agent level, writing to auth logs or the filesystem, so that all Wazuh decoder, rule, and active response stages are exercised under conditions identical to production.

Module inventory

| Module | Type | Responsibility |
|---|---|---|
| simulate-radar.sh | Bash script | Entry point, argument validation, local/remote dispatch |
| simulate.yml | Ansible playbook | Remote agent copy, execution, and cleanup |
| common.py | Python library | Shared utilities: config loading, log writing, timestamp detection |
| suspicious_login.py | Python script | SSH burst failure + success artefact generation |
| geoip_detection.py | Python script | SSH success artefact from non-whitelisted IP |
| log_volume.py | Python script | Exponential filesystem growth simulation |
| config.yaml | YAML configuration | All scenario parameters, no hardcoded values |

Component structure

    simulate-radar.sh
    │
    ├── [local] config.yaml ──► container_name resolution
    │       │
    │       └──► docker exec ──► container
    │               └──► /tmp/ratf-simulate/scenarios/
    │                       ├── common.py
    │                       ├── config.yaml
    │                       └── <scenario>.py
    │
    └── [remote] inventory.yaml ──► Ansible
            └──► simulate.yml
                    └──► SSH agent endpoint
                            └──► /tmp/ratf-simulate/scenarios/
                                    ├── common.py
                                    ├── config.yaml
                                    └── <scenario>.py

Sequence diagram

simulate-radar.sh

Responsibilities

- Parse and validate SCENARIO and --agent arguments

- Resolve SSH key for remote mode

- Resolve container_name from config.yaml for local mode

- Dispatch to docker exec (local) or ansible-playbook (remote)

Argument specification

| Argument | Required | Values | Description |
|---|---|---|---|
| SCENARIO | Yes | suspicious_login, geoip_detection, log_volume | Scenario to simulate |
| --agent | Yes | local, remote | Agent execution target |
| --ssh-key | No | File path | Path to SSH private key (default: ~/.ssh/id_ed25519) |

Local dispatch design

    1. python3 inline: read config.yaml[SCENARIO].container_name
       └── exit 1 if missing or empty
    2. docker exec -i CONTAINER sh -lc "mkdir -p /tmp/ratf-simulate/scenarios"
    3. docker cp RATF_SCENARIO_DIR/. CONTAINER:/tmp/ratf-simulate/scenarios/
    4. docker exec -i CONTAINER sh -lc "python3 /tmp/ratf-simulate/scenarios/SCENARIO.py"
    5. exit with docker exec return code
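The dispatcher itself is a Bash script; purely as an illustration of the local steps above, the Python sketch below assembles the three docker command lines as argument lists. The function name local_dispatch_commands is hypothetical, and nothing here is taken from simulate-radar.sh beyond the commands listed in this section.

```python
from typing import List

REMOTE_DIR = "/tmp/ratf-simulate/scenarios"
SCENARIOS = ("suspicious_login", "geoip_detection", "log_volume")


def local_dispatch_commands(container: str, scenario_dir: str,
                            scenario: str) -> List[List[str]]:
    """Return the docker commands for steps 2 to 4 of the local dispatch."""
    if scenario not in SCENARIOS:
        raise ValueError(f"Invalid scenario: {scenario}")
    if not container:
        raise ValueError(f"Missing '{scenario}.container_name' in config.yaml")
    return [
        # Step 2: create the working directory inside the container.
        ["docker", "exec", "-i", container, "sh", "-lc", f"mkdir -p {REMOTE_DIR}"],
        # Step 3: copy the scenario scripts into it.
        ["docker", "cp", f"{scenario_dir}/.", f"{container}:{REMOTE_DIR}/"],
        # Step 4: run the selected scenario script.
        ["docker", "exec", "-i", container, "sh", "-lc",
         f"python3 {REMOTE_DIR}/{scenario}.py"],
    ]
```

Each list could be handed to subprocess.run() in order, with the return code of the last command used as the exit status (step 5).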

Remote dispatch design

    1. Resolve SSH_KEY path; exit 1 if file not found
    2. If SSH_AUTH_SOCK unset or ssh-add -l fails:
         eval $(ssh-agent -s); ssh-add SSH_KEY
       else: ssh-add SSH_KEY if fingerprint not already loaded
    3. ansible-playbook
         -i inventory.yaml
         --limit wazuh_agents_ssh
         --ask-vault-pass
         roles/wazuh_agent/playbooks/simulate.yml
         -e radar_simulate_scenario=SCENARIO
         -e ratf_scenarios_dir=RATF_SCENARIO_DIR
    4. exit with ansible-playbook return code

Error conditions

| Condition | Exit code | Message |
|---|---|---|
| Missing SCENARIO | 1 | Missing scenario argument |
| Invalid SCENARIO | 1 | Invalid scenario: <value> |
| Missing --agent | 1 | Missing --agent <local\|remote> |
| Invalid --agent | 1 | Invalid --agent value: <value> |
| RATF_SCENARIO_DIR missing | 1 | Scenario dir not found: <path> |
| inventory.yaml missing | 1 | Inventory not found: <path> |
| container_name missing | 1 | Missing '<scenario>.container_name' in config.yaml |
| SSH key not found | 1 | SSH key not found at: <path> |
| Playbook missing | 1 | Playbook not found: <path> |

simulate.yml (Ansible playbook)

Responsibilities

- Validate input variables on remote host

- Create working directory

- Copy scenario scripts from controller to agent

- Execute selected scenario script

- Print stdout for audit trail

- Unconditionally clean up working directory

Variable interface

| Variable | Source | Description |
|---|---|---|
| radar_simulate_scenario | -e flag | Scenario name, validated against allowed list |
| ratf_scenarios_dir | -e flag | Absolute path to scenarios dir on controller |
| remote_workdir | Playbook default | /tmp/ratf-simulate |
| remote_scenarios_dir | Derived | {{ remote_workdir }}/scenarios |

Task sequence

    1. assert:
         radar_simulate_scenario in [suspicious_login, geoip_detection, log_volume]
         ratf_scenarios_dir is defined
    2. file: path={{ remote_scenarios_dir }} state=directory mode=0755
    3. copy: src={{ ratf_scenarios_dir }}/ dest={{ remote_scenarios_dir }}/ mode=0755
    4. command: python3 {{ remote_scenarios_dir }}/{{ radar_simulate_scenario }}.py
       register: sim_run
    5. debug: var=sim_run.stdout
    6. file: path={{ remote_workdir }} state=absent
       when: true (unconditional)

Design notes

- become: true is set at play level; all tasks run with privilege escalation.

- gather_facts: false reduces overhead on security-sensitive endpoints.

- Cleanup in step 6 is synchronous and removes the entire work tree.

For suspicious_login and geoip_detection, auth log entries written to /var/log/auth.log are outside this tree and are not removed. For log_volume, the spike file is written to target_dir (e.g. /var/log) and is also outside this tree; it is handled separately by async Python-scheduled cleanup if cleanup_minutes > 0.

common.py

Responsibilities

- Load config.yaml from the directory co-located with the script

- Detect auth log timestamp format (syslog vs ISO 8601)

- Append lines to auth log via tee (with optional sudo)

- Format ISO timestamps with configurable timezone offset

- Parse .env files

- Locate repository root by walking up the directory tree
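To make the two timestamp styles concrete, the sketch below formats the same instant as an ISO 8601 header with a configured offset and as a classic syslog header. The function names parse_offset, format_iso, and format_syslog are hypothetical and are not the helpers exported by common.py; attaching the offset to a naive timestamp without converting it is also an assumption.

```python
from datetime import datetime, timedelta, timezone


def parse_offset(offset: str) -> timezone:
    """Turn an offset such as "+01:00" into a tzinfo object."""
    sign = -1 if offset.startswith("-") else 1
    hours, minutes = offset.lstrip("+-").split(":")
    return timezone(sign * timedelta(hours=int(hours), minutes=int(minutes)))


def format_iso(ts: datetime, offset: str) -> str:
    """ISO 8601 header: attach the configured offset to a naive timestamp."""
    return ts.replace(tzinfo=parse_offset(offset)).isoformat(timespec="seconds")


def format_syslog(ts: datetime) -> str:
    """Syslog header: month abbreviation, space-padded day, then time."""
    return f"{ts.strftime('%b')} {ts.day:2d} {ts.strftime('%H:%M:%S')}"
```

Note that single-digit days are padded with a space in the syslog style, so 5 January is written with two spaces after the month.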

suspicious_login.py

Design

Parameters loaded from config.yaml:

common.timezone_offset, common.hostname

suspicious_login.log_path, sudo_tee, user, sshd_pid,

fail_port, success_port,

key_fingerprint_fail, key_fingerprint_success,

ip_pool (list), window_seconds

Algorithm:

    step ← max(1, window_seconds // (len(ip_pool) + 1))
    base ← datetime.now()

    Phase 1 - failure burst:
      for i, ip in enumerate(ip_pool):
        ts   ← base + timedelta(seconds = i × step)
        fmt  ← detect_authlog_timestamp_format(ts, tz, auth_path)
        line ← f"{fmt} {host} sshd[{pid}]: Failed password for {user} from {ip} port {fail_port} ssh2: {key_fail}"
        append_line_authlog(line, auth_path, sudo_tee)

    Phase 2 - success:
      ts   ← base + timedelta(seconds = min(window_seconds - 1, len(ip_pool) × step))
      fmt  ← detect_authlog_timestamp_format(ts, tz, auth_path)
      line ← f"{fmt} {host} sshd[{pid}]: Accepted publickey for {user} from {ip_pool[0]} port {success_port} ssh2: {key_ok}"
      append_line_authlog(line, auth_path, sudo_tee)
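The timing part of the algorithm can be isolated from log writing, as in the sketch below. The function name plan_events and its return shape are assumptions for illustration; the step and offset arithmetic follows the pseudocode in this section.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def plan_events(base: datetime, ip_pool: List[str], window_seconds: int = 60
                ) -> Tuple[List[Tuple[datetime, str]], datetime]:
    """Return (failure events, success timestamp) for one simulation run."""
    # Spread the failures evenly, leaving one slot for the final success.
    step = max(1, window_seconds // (len(ip_pool) + 1))
    failures = [(base + timedelta(seconds=i * step), ip)
                for i, ip in enumerate(ip_pool)]
    # The success follows the last failure but stays inside the window.
    success_ts = base + timedelta(
        seconds=min(window_seconds - 1, len(ip_pool) * step))
    return failures, success_ts
```

With six addresses and a 60-second window the step is 8 seconds, so failures land at offsets 0 to 40 seconds and the success at 48 seconds.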

Detection targets

- Rules 210012/210013: frequency ≥ 5 failures within 60 s from same source/destination user

- Rules 210020/210021: impossible travel when ip_pool spans multiple countries

Design notes

- step distributes events evenly across window_seconds to satisfy the timeframe="60" frequency="5" constraints in rules 210012/210013.

- The default ip_pool contains six geographically diverse addresses (US, Australia, Japan, UAE, Europe), covering the impossible travel detection path simultaneously.

- Auth log entries are not removed by the framework and persist in /var/log/auth.log after simulation completes.

geoip_detection.py

Design

Parameters loaded from config.yaml:

common.timezone_offset, common.hostname

geoip_detection.log_path, sudo_tee, user, sshd_pid,

success_port, ip, key_fingerprint_success

Algorithm:

    ts   ← datetime.now()
    fmt  ← detect_authlog_timestamp_format(ts, tz, auth_path)
    line ← f"{fmt} {host} sshd[{pid}]: Accepted publickey for {user} from {ip} port {success_port} ssh2: {key_ok}"
    append_line_authlog(line, auth_path, sudo_tee)

Detection targets

- Rules 100900/100901: authentication_success group + radar_country not in whitelist

- Default IP 8.8.8.8 resolves to US (Google), outside the Luxembourg/EU Greater Region whitelist

Design notes

- A single log line is sufficient; the RADAR helper enriches it with radar_country before forwarding to the rule engine.

- Auth log entries are not removed by the framework and persist in /var/log/auth.log after simulation completes.

log_volume.py

Design

Parameters loaded from config.yaml:

log_volume.target_dir, spike_filename, steps,

start_bytes, growth_factor, sleep_seconds,

max_total_bytes, max_step_bytes, cleanup_minutes

Helper functions:

    _int(v, default)    : safe int cast with fallback
    _float(v, default)  : safe float cast with fallback
    _ensure_dir(path)   : mkdir -p equivalent
    _append_bytes(file_path, bytes_to_add, chunk=1MB):
        opens file in append+binary mode, writes in chunk-sized blocks
    _schedule_cleanup(file_path, minutes):
        Popen(["sh", "-lc",
               f"(sleep {s}; rm -f '{fp}') >/dev/null 2>&1 &"])

Main algorithm:

    base_dir   ← Path(target_dir)
    spike_file ← base_dir / spike_filename
    _ensure_dir(base_dir)
    spike_file.touch() if not exists
    total_written ← 0

    for i in range(steps):
      desired_add ← int(start_bytes × (growth_factor ^ i))
      add_bytes   ← min(desired_add, max_step_bytes)
      add_bytes   ← min(add_bytes, max_total_bytes - total_written)
      if add_bytes ≤ 0: break
      _append_bytes(spike_file, add_bytes)
      total_written += add_bytes
      time.sleep(sleep_seconds)

    if cleanup_minutes > 0:
      _schedule_cleanup(spike_file, cleanup_minutes)
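How the two safety caps interact with exponential growth is easiest to see by computing the per-step sizes without touching the disk. The helper name plan_steps is hypothetical; the arithmetic mirrors the loop in this section.

```python
from typing import List


def plan_steps(steps: int, start_bytes: int, growth_factor: float,
               max_step_bytes: int, max_total_bytes: int) -> List[int]:
    """Return the number of bytes that each growth step would append."""
    plan: List[int] = []
    total_written = 0
    for i in range(steps):
        desired_add = int(start_bytes * (growth_factor ** i))
        add_bytes = min(desired_add, max_step_bytes)                   # per-step cap
        add_bytes = min(add_bytes, max_total_bytes - total_written)    # total cap
        if add_bytes <= 0:
            break  # total budget exhausted: stop early
        plan.append(add_bytes)
        total_written += add_bytes
    return plan
```

For instance, plan_steps(5, 100, 2.0, 500, 1000) returns [100, 200, 400, 300]: the fourth step is first capped to 500 bytes by the per-step limit, then to the remaining 300 bytes of the total budget, and the fifth step is skipped.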

Detection target

- Log volume metric collector measures target_dir size

- Growth triggers OpenSearch AD anomaly detector

- Rule 100309 (LogVolume-Growth-Detected) fires via monitor → webhook

Safety constraints

| Parameter | Default | Purpose |
|---|---|---|
| max_total_bytes | 9 GB | Prevents runaway total disk consumption |
| max_step_bytes | 1.5 GB | Limits single-step growth burst |
| cleanup_minutes | 5 | Schedules async spike file removal |

Design notes

- Cleanup is asynchronous (background shell) to avoid blocking the simulation script.

- On the remote Ansible path, Ansible's synchronous cleanup removes /tmp/ratf-simulate but does NOT remove the spike file, which is written to target_dir outside the work directory. If cleanup_minutes is 0, the spike file must be removed manually.

config.yaml schema

common:
  timezone_offset: str            # e.g. "+01:00"
  hostname: str                   # agent hostname used in log line host field

suspicious_login:
  log_path: str                   # path to auth.log on agent
  sudo_tee: bool                  # prepend sudo to tee command
  user: str                       # SSH username in log lines
  sshd_pid: int                   # PID used in sshd log entries
  fail_port: int                  # source port for failure lines
  success_port: int               # source port for success line
  key_fingerprint_fail: str       # key field in failure lines
  key_fingerprint_success: str    # key field in success line
  ip_pool: list[str]              # IPs used for failure burst
  window_seconds: int             # total event window (default 60)

geoip_detection:
  log_path: str
  sudo_tee: bool
  user: str
  sshd_pid: int
  success_port: int
  ip: str                         # single non-whitelisted IP
  key_fingerprint_success: str

log_volume:
  target_dir: str                 # monitored directory (e.g. /var/log)
  spike_filename: str             # name of generated spike file
  steps: int                      # number of growth steps
  start_bytes: int                # bytes added in step 0
  growth_factor: float            # exponential multiplier per step
  sleep_seconds: float            # delay between steps
  max_total_bytes: int            # safety cap on total written
  max_step_bytes: int             # safety cap per step
  cleanup_minutes: int            # 0 = no cleanup; >0 = async remove after N min
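A schema like this can be checked mechanically once the YAML has been parsed into a dict. The sketch below is illustrative only: LOG_VOLUME_SCHEMA mirrors the log_volume section above, while check_section and the sample values are hypothetical and not part of the framework.

```python
# Expected type per key, mirroring the log_volume section of the schema.
LOG_VOLUME_SCHEMA = {
    "target_dir": str, "spike_filename": str, "steps": int,
    "start_bytes": int, "growth_factor": float, "sleep_seconds": float,
    "max_total_bytes": int, "max_step_bytes": int, "cleanup_minutes": int,
}


def check_section(section, schema):
    """Return a list of problems: missing keys or wrongly typed values."""
    problems = []
    for key, expected in schema.items():
        if key not in section:
            problems.append(f"missing key: {key}")
            continue
        value = section[key]
        # YAML loads "2" as an int, so accept ints where a float is expected.
        accepted = (int, float) if expected is float else (expected,)
        # bool is a subclass of int in Python; reject it for numeric fields.
        if isinstance(value, bool) and expected is not bool:
            ok = False
        else:
            ok = isinstance(value, accepted)
        if not ok:
            problems.append(
                f"{key}: expected {expected.__name__}, got {type(value).__name__}"
            )
    return problems


# Sample values are illustrative, not recommended settings.
sample = {
    "target_dir": "/var/log", "spike_filename": "spike.bin", "steps": 5,
    "start_bytes": 1024, "growth_factor": 2, "sleep_seconds": 0.5,
    "max_total_bytes": 10_000, "max_step_bytes": 4_000, "cleanup_minutes": 5,
}
print(check_section(sample, LOG_VOLUME_SCHEMA))
```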

Parent links: HARC-005 RADAR Automated Test Framework architecture

2.11 RADAR GUI software design SWD-041

This document specifies the software design of the RADAR GUI (radar/gui/), a Flask-based web application providing a browser interface for the full RADAR operational lifecycle. It covers the backend-to-frontend architecture, key design decisions, and the credential/session security model. Frontend component internals (HTML structure, JavaScript event wiring, CSS) are out of scope.

Purpose and scope

The RADAR GUI replaces direct file editing and shell invocation for routine RADAR operations. It wraps build-radar.sh, run-radar.sh, and health-radar.sh with a browser interface, manages ar.yaml and inventory.yaml through structured forms, stores connector credentials in .env, and handles Ansible Vault encryption of sudo passwords through a session-scoped in-memory credential store.

Module structure

gui/
├── app.py              Flask application: all routes and startup
├── requirements.txt    Runtime dependencies
└── orchestrator/       Backend modules (no Flask dependency)
    ├── ar_config.py    Read/write ar.yaml
    ├── inventory.py    Read/write inventory.yaml
    ├── connectors.py   Read/write .env, live connector tests
    ├── deploy.py       Command assembly, subprocess streaming
    ├── health.py       Per-node Ansible health-check execution
    └── vault.py        Ansible Vault wrappers, SSH passphrase session

The orchestrator modules are deliberately kept free of Flask imports. This separation allows the file-management and subprocess logic to be tested independently and reused by CLI tooling without importing the web framework.

Flask application: app.py

Startup and RADAR_ROOT resolution

app.py resolves the RADAR root directory at import time:

RADAR_ROOT = str(Path(os.environ.get("RADAR_ROOT", Path(__file__).parent.parent)).resolve())

The default places the root at the parent of gui/ (i.e., the radar/ directory). Setting the RADAR_ROOT environment variable before starting the server redirects all file I/O to a different installation, enabling the GUI to manage an alternate RADAR root without code changes.
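The resolution rule can be isolated into a small function for illustration. This is a sketch under the assumption that the environment mapping and the module path are passed in explicitly; resolve_radar_root is a hypothetical name, and app.py itself performs the lookup inline at import time.

```python
from pathlib import Path


def resolve_radar_root(environ, gui_file):
    """Return the RADAR root: RADAR_ROOT if set, else the parent of gui/."""
    default_root = Path(gui_file).parent.parent
    return str(Path(environ.get("RADAR_ROOT", default_root)).resolve())


# Without the variable, the root is two levels above app.py.
print(resolve_radar_root({}, "/opt/radar/gui/app.py"))
# With the variable set, all file I/O would be redirected there.
print(resolve_radar_root({"RADAR_ROOT": "/srv/radar-alt"}, "/opt/radar/gui/app.py"))
```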

The server runs with threaded=True so that long-running streaming responses (build output, health check output) do not block subsequent requests on the same worker.

Route organisation

Routes are grouped into two sets: page routes and API routes.

Page routes (/, /active-responses, /infrastructure, /connectors, /deploy) render templates with data fetched from the orchestrator modules. They pass only the data needed for initial render; all subsequent interactions go through the API routes via JavaScript.

API routes (/api/...) are the stable contract between the frontend and the backend.

Orchestrator modules

AR configuration management: ar_config.py

Reads and writes scenarios/active_responses/ar.yaml. The central design decision is default-block deep-merge: ar.yaml contains a scenarios.default block that holds baseline values shared across all scenarios. get_scenario() loads the full file, starts with a deep copy of the default block, and merges the scenario-specific block on top. This means scenario entries in ar.yaml only need to store values that differ from the defaults.

update_scenario() applies a partial patch to the existing scenario entry using the same deep-merge logic, so the GUI can send only the changed fields rather than the full config on every save.
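The default-block deep-merge can be sketched as follows. This is an assumption-laden illustration rather than the module's code: deep_merge and merged_scenario are hypothetical names, scenario blocks are assumed to sit beside default under scenarios, and the field names in the example are invented.

```python
import copy


def deep_merge(base, override):
    """Return a new dict: base with override merged on top, recursing into dicts."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            # Scalars and lists in the override replace the base value outright.
            merged[key] = copy.deepcopy(value)
    return merged


def merged_scenario(config, name):
    """Effective scenario = copy of the default block + scenario-specific values."""
    scenarios = config.get("scenarios", {})
    return deep_merge(scenarios.get("default", {}), scenarios.get(name, {}))


# Field names below are invented for the example.
config = {
    "scenarios": {
        "default": {"mitigation": {"enabled": True, "timeout": 60}, "notify": "email"},
        "geoip_detection": {"mitigation": {"timeout": 300}},
    }
}
print(merged_scenario(config, "geoip_detection"))
```

The same deep_merge call, applied to the stored scenario entry and a partial patch, gives the behaviour described for update_scenario(): keys absent from the patch keep their stored values.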

All writes use atomic temp-file replacement (ar.yaml.tmp -> ar.yaml) under a module-level threading.Lock to prevent partial writes if two browser sessions submit simultaneously.
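A minimal sketch of the lock-plus-replace pattern, assuming a POSIX-style filesystem where os.replace is atomic within one directory. atomic_write_text is a hypothetical helper name, and the fsync call is an addition of this sketch that the source does not mention.

```python
import os
import threading

# Module-level lock: serialises writers within this process.
_write_lock = threading.Lock()


def atomic_write_text(path, text):
    """Write text to <path>.tmp, then atomically swap it into place."""
    tmp_path = f"{path}.tmp"
    with _write_lock:
        with open(tmp_path, "w", encoding="utf-8") as handle:
            handle.write(text)
            handle.flush()
            os.fsync(handle.fileno())  # assumption: flush to disk before the swap
        # Readers see either the old file or the new one, never a partial write.
        os.replace(tmp_path, path)
```

The lock prevents two threads from interleaving writes to the shared .tmp file; the rename prevents a reader from observing a half-written target.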

Ansible inventory management: inventory.py

Reads and writes inventory.yaml using the four Ansible host groups: wazuh_manager_local, wazuh_manager_ssh, wazuh_agents_container, wazuh_agents_ssh.

Mode inference: The GUI exposes a simplified ui_kind (docker / host) + is_local checkbox model. _resolve_manager_mode() maps this to the three internal manager_mode values (docker_local, docker_remote, host_remote) that the Ansible roles expect. If ui_kind == "host", the result is always host_remote. If ui_kind == "docker" and is_local is set or no IP is provided, the result is docker_local; otherwise docker_remote.
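The mapping described above is small enough to write out in full. The sketch below follows the stated rules; the function signature and the handling of an unknown ui_kind are assumptions, since the source gives only the decision logic.

```python
def resolve_manager_mode(ui_kind, is_local, ip):
    """Map the GUI's (ui_kind, is_local, ip) inputs to a manager_mode value."""
    if ui_kind == "host":
        return "host_remote"  # host installs are always treated as remote
    if ui_kind == "docker":
        # Local when the checkbox is set or no IP was provided.
        if is_local or not ip:
            return "docker_local"
        return "docker_remote"
    # Assumption: unknown kinds are rejected; the source does not specify this.
    raise ValueError(f"unsupported ui_kind: {ui_kind!r}")


print(resolve_manager_mode("docker", False, "10.0.0.5"))
```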

Group migration on edit: When a node's connection type changes (e.g., local to remote), update_manager() detects the old group, deletes the host from it, and inserts it into the new group — all within a single locked write, preserving all other inventory entries.
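Group migration amounts to a remove-then-insert on the parsed inventory while the write lock is held. The sketch below assumes a simplified in-memory layout of {group: {"hosts": {name: vars}}}; the real inventory.yaml layout and the update_manager() signature are not shown in this document, and move_host is a hypothetical name.

```python
import threading

_inventory_lock = threading.Lock()


def move_host(inventory, name, new_group, host_vars):
    """Remove `name` from any group that holds it, then add it to `new_group`."""
    with _inventory_lock:
        for group in inventory.values():
            # An empty YAML mapping may load as None, hence the `or {}`.
            hosts = group.get("hosts") or {}
            hosts.pop(name, None)
        target = inventory.setdefault(new_group, {})
        if not target.get("hosts"):
            target["hosts"] = {}
        target["hosts"][name] = host_vars
    return inventory


inventory = {
    "wazuh_manager_local": {"hosts": {"mgr": {"ansible_connection": "local"}}},
    "wazuh_manager_ssh": {"hosts": None},
    "wazuh_agents_ssh": {"hosts": {"agent1": {}}},
}
move_host(inventory, "mgr", "wazuh_manager_ssh", {"ansible_host": "10.0.0.5"})
print(inventory)
```

Other groups and hosts are left untouched, which matches the requirement that an edit preserve all unrelated inventory entries.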

Credential co-location: save_credential() delegates to vault.encrypt_host_vars(), which writes the Ansible Vault-encrypted host_vars/<name>.yml file. delete_manager() and delete_agent() call delete_credential() automatically, ensuring no orphaned host_vars/ files remain after a node is removed.

External service credentials: connectors.py

Manages credentials and URLs in .env at the RADAR root. The module defines FIELD_MAP, a 27-entry dict mapping GUI field IDs to environment variable names, and PASSWORD_KEYS, a 5-entry subset mapping the five secret field IDs to their environment variable names:

PASSWORD_KEYS = {

"os-pass": "OS_PASS",

"wazuh-pass": "WAZUH_AUTH_PASS",

"dashboard-pass": "DASHBOARD_PASS",

"smtp-pass": "SMTP_PASS",

"decipher-token": "DECIPHER_TOKEN",

}

The /api/connectors/reveal endpoint checks the incoming request body key against set(PASSWORD_KEYS.values()) — the environment variable names, not the field IDs. The request must therefore send the environment variable name (e.g. "OS_PASS"), and the endpoint refuses any key not in that set of five values.
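The allow-list check can be illustrated in isolation. This sketch reuses the PASSWORD_KEYS mapping shown above; is_revealable is a hypothetical helper, and the real endpoint's request parsing and response format are not reproduced here.

```python
PASSWORD_KEYS = {
    "os-pass": "OS_PASS",
    "wazuh-pass": "WAZUH_AUTH_PASS",
    "dashboard-pass": "DASHBOARD_PASS",
    "smtp-pass": "SMTP_PASS",
    "decipher-token": "DECIPHER_TOKEN",
}

# The allow-list is built from the environment variable names (the values).
REVEALABLE = set(PASSWORD_KEYS.values())


def is_revealable(key):
    """True only for the five secret environment variable names."""
    return key in REVEALABLE


print(is_revealable("OS_PASS"))   # environment variable name: accepted
print(is_revealable("os-pass"))   # GUI field ID: refused
```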

SSL verification fields (*-ssl-enabled) are handled separately from FIELD_MAP via a dedicated ssl_prefix_map in save_connector(). If the value is "false", the corresponding *_VERIFY_SSL environment variable is set to "false". If a CA certificate is present in the same request, its content is written to .certs/<name>-ca.pem (chmod 0600) and the path is stored as the environment variable value. Otherwise the environment variable is set to "true". Certificate content fields (*-cert-content) are silently skipped in the main field loop since they are consumed by the SSL branch.
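The three outcomes of the SSL branch can be sketched as a single function. Several details here are assumptions: the function name and parameters are hypothetical, "false" is taken to win over an uploaded certificate, and the stored path is assumed to be the full path under the RADAR root.

```python
import os
from pathlib import Path


def resolve_verify_ssl(ssl_enabled, cert_content, name, radar_root):
    """Return the value to store in the *_VERIFY_SSL environment variable."""
    if ssl_enabled == "false":
        return "false"  # assumption: an explicit "false" takes precedence
    if cert_content:
        certs_dir = Path(radar_root) / ".certs"
        certs_dir.mkdir(parents=True, exist_ok=True)
        cert_path = certs_dir / f"{name}-ca.pem"
        cert_path.write_text(cert_content, encoding="utf-8")
        os.chmod(cert_path, 0o600)  # owner read/write only
        return str(cert_path)
    return "true"
```

Storing the certificate path in the same variable lets a client pass the value straight to an HTTP library's verify option, which commonly accepts either a boolean or a CA bundle path.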

All .env writes are performed inside the threading.Lock block in save_connector(). _write_env() is called from within that lock and preserves existing comments and unrelated keys by parsing the file line by line, replacing only lines whose key appears in the updated environment dictionary. New keys not previously in the file are appended. The final write uses atomic temp-file replacement (.env.tmp -> .env).
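The line-preserving update can be sketched as a pure function over the file's lines. This is an illustration of the approach, not _write_env() itself: merge_env_lines is a hypothetical name, quoting and export prefixes are ignored, and locking and the atomic write are left out.

```python
def merge_env_lines(lines, updates):
    """Replace KEY=... lines whose key is in `updates`; append keys not yet present."""
    merged = []
    seen = set()
    for line in lines:
        stripped = line.strip()
        # Comments, blank lines, and lines without "=" pass through untouched.
        if stripped and not stripped.startswith("#") and "=" in stripped:
            key = stripped.split("=", 1)[0].strip()
            if key in updates:
                merged.append(f"{key}={updates[key]}")
                seen.add(key)
                continue
        merged.append(line)
    # Keys that were not in the file are appended in the order given.
    for key, value in updates.items():
        if key not in seen:
            merged.append(f"{key}={value}")
    return merged


existing = ["# OpenSearch", "OS_URL=https://old.example", "", "OTHER=1"]
print(merge_env_lines(existing, {"OS_URL": "https://new.example", "SMTP_PASS": "x"}))
```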

Subprocess streaming: deploy.py

Builds the shell command for build-radar.sh, run-radar.sh, or health-radar.sh from the validated spec dict, then streams the subprocess output to the client via Flask's stream_with_context generator.
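The streaming half of this design can be sketched without Flask as a generator over a subprocess's output. stream_command and the trailing exit marker are hypothetical; the real module's command assembly and output framing are not shown in this document.

```python
import subprocess
import sys


def stream_command(argv):
    """Yield a command's combined stdout/stderr line by line, then an exit marker."""
    process = subprocess.Popen(
        argv,
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,  # interleave stderr with stdout
        text=True,
        bufsize=1,                 # line-buffered on the reading side
    )
    try:
        for line in process.stdout:
            yield line
    except GeneratorExit:
        process.terminate()        # consumer went away: stop the child
        raise
    finally:
        process.stdout.close()
        process.wait()
    yield f"[exit {process.returncode}]\n"  # hypothetical end-of-stream marker


for chunk in stream_command([sys.executable, "-c", "print('building'); print('done')"]):
    print(chunk, end="")
```

In a Flask route, a generator of this shape would typically be wrapped in stream_with_context and returned as the body of a Response, so each line reaches the browser as soon as the script emits it.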